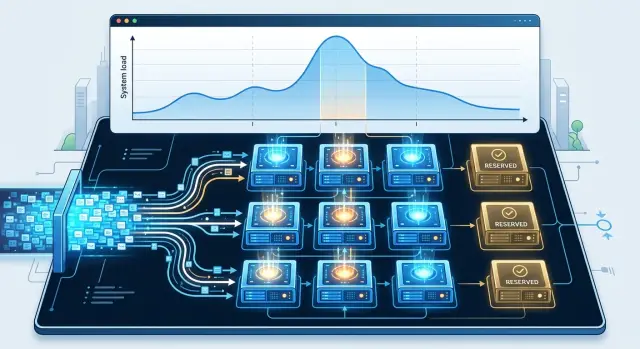

Warm model pool: how to calculate reserve for peak hours

A warm model pool helps you get through peak hours without unnecessary cost. We show how to estimate GPU reserve, watch the queue, and avoid paying for idle capacity.

Where the money is lost at the peak

The most common mistake is looking at average GPU utilization for the day and concluding that the buffer is already large enough. The average almost always gives false comfort. If the cards average 45% utilization between 10:00 and 16:00, that does not mean the system will calmly survive a spike to three times the normal level at 11:20.

The peak does not hit the average; it hits the short window. A few minutes are enough for many users to send long requests at the same time, and the queue starts growing faster than the models can respond. From the outside, it looks simple: “everything was fine, and then it suddenly got slow.” Inside, a backlog is already building, and it does not disappear right away even if traffic starts to fall.
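
The way a backlog outlives the spike that caused it can be seen in a minimal sketch. The traffic numbers and the fixed per-minute capacity below are illustrative assumptions, not measurements, and the model ignores retries and timeouts.

```python
def simulate_backlog(arrivals_per_min, capacity_per_min):
    """Return the queue length at the end of each minute."""
    backlog = 0
    history = []
    for arrived in arrivals_per_min:
        # Serve up to capacity; anything left over carries into the next minute.
        backlog = max(0, backlog + arrived - capacity_per_min)
        history.append(backlog)
    return history

# Hypothetical traffic: 20 req/min baseline, a 3-minute spike at 3x, then normal again.
arrivals = [20, 20, 60, 60, 60, 20, 20, 20, 20, 20, 20, 20]
history = simulate_backlog(arrivals, capacity_per_min=30)
print(history)  # [0, 0, 30, 60, 90, 80, 70, 60, 50, 40, 30, 20]
```

In this toy run the spike ends after minute 5, yet seven minutes later 20 requests are still waiting, because the spare capacity at normal traffic is only 10 requests per minute.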

Money usually leaks in four places. The team keeps the reserve too small and loses requests during the most expensive hour. The system starts models too late, and cold start adds extra seconds. The queue grows, users resend requests, and the load only gets worse. Or, on the contrary, the team sets aside too much reserve and pays for almost idle GPUs for hours.

Cold start is especially painful during working hours. The model has to be loaded into memory, warmed up, and sometimes adapters or a separate configuration must be pulled in. On paper, that is 20–40 seconds. In a live flow, that is already enough for the queue to get far ahead. If the service answers in a support chat, the operator simply waits. If it is scoring, document review, or a call center assistant, every extra second quickly turns into direct losses.

Extra reserve is quieter, but no less costly. Suppose the team keeps a warm model pool for a rare peak that happens once a week and lasts 10 minutes. The rest of the time, part of the cards sit idle, while the infrastructure bill keeps running every hour. This is especially noticeable when traffic is uneven by shift, day of week, or region.

At the peak, a business almost always pays either for customer waiting or for idle reserve. You cannot remove the risk entirely. The goal is simpler: keep enough reserve to survive a normal spike without a long queue, and do not turn calm hours into expensive downtime.

What affects the reserve size

The reserve breaks not at average traffic, but at the moment when queueing, long responses, and a heavy model all come together. The same 300 requests per hour can flow smoothly or overload the GPU if half of them arrive within two minutes.

The first factor is concurrency. If you usually have 8 active dialogs at peak, that is one reserve size. If there are 30 dialogs and some users expect a real-time answer, the reserve has to be very different. That is why it helps to count not only requests per hour, but also how many requests the system is handling at the same time.
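
One common way to turn a request rate into concurrency is Little's law (average requests in flight = arrival rate × time in the system). The article does not name it, so treat this as a supporting sketch. The 12-second average handling time is an assumed figure.

```python
def concurrent_requests(requests_per_minute, avg_seconds_per_request):
    """Little's law: average in-flight requests = arrival rate x time in system."""
    return requests_per_minute * avg_seconds_per_request / 60

# 300 requests per hour spread evenly is 5 per minute.
even = concurrent_requests(5, 12)
# Half of those 300 requests arriving within two minutes is 75 per minute.
burst = concurrent_requests(75, 12)
print(even, burst)  # 1.0 15.0
```

The hourly total is the same in both cases, but the burst needs roughly fifteen times the concurrent capacity.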

The second factor is the model itself. A smaller model takes less memory and frees the GPU faster. A heavy reasoning model keeps the card busy longer, especially if the answer is long and the token limit is high. Because of that, two models with the same request volume can produce very different GPU loads, sometimes by several times.

The third factor is response length. A short answer of 80 tokens passes almost unnoticed. A 1200-token answer takes longer to generate, and the queue grows even with the same incoming traffic. If the team allows long answers across all scenarios, the reserve almost always has to be higher.
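
A rough sketch of the effect, assuming a hypothetical decode rate of 40 tokens per second per stream and 20 requests per minute. It deliberately ignores batching and prompt processing, so read the result as an order of magnitude, not a benchmark.

```python
def decode_seconds_per_minute(requests_per_minute, output_tokens, tokens_per_second):
    """Total generation time demanded per wall-clock minute, in stream-seconds."""
    return requests_per_minute * output_tokens / tokens_per_second

short = decode_seconds_per_minute(20, 80, 40)    # 40.0 stream-seconds per minute
long = decode_seconds_per_minute(20, 1200, 40)   # 600.0 stream-seconds per minute

# Dividing by 60 gives how many decode streams must run in parallel to keep up.
print(short / 60, long / 60)
```

With short answers one stream is busy about two thirds of the time; with 1200-token answers the same traffic needs about ten parallel streams.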

There is also a simple product boundary: how much latency are you willing to tolerate? For an internal assistant, 6–8 seconds can sometimes be fine. For an operator chat or voice scenario, it usually is not. The stricter the target latency, the bigger the warm pool you need, because you cannot simply “wait out” the spike in the queue.

Warm-up and tear-down time should be counted separately. If the model takes 60–90 seconds to load, it will not save you from a short 3-minute spike. If you keep it in memory for another 10 minutes after demand drops, the GPUs start idling. That is often where the extra cost hides.
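
A small sketch of that trade-off, using the figures from the paragraph above (a 3-minute spike, a 90-second load, a 10-minute keep-alive). It assumes the replica starts loading at the moment the spike begins and is billed from that moment.

```python
def on_demand_replica_timing(spike_seconds, warmup_seconds, keep_alive_seconds):
    """Return (useful_seconds, billed_seconds) for a replica started at spike onset."""
    # The replica serves traffic only after loading and only until the spike ends.
    useful = max(0, spike_seconds - warmup_seconds)
    # Billing runs through the spike and the keep-alive period afterwards.
    billed = max(spike_seconds, warmup_seconds) + keep_alive_seconds
    return useful, billed

useful, billed = on_demand_replica_timing(180, 90, 600)
print(useful, billed)  # 90 780
```

Under these assumptions the replica helps for 90 seconds out of 780 billed, which is why short spikes are usually better absorbed by capacity that is already warm or by a queue.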

In practice, it helps to keep five numbers in view: how many requests arrive at the same time in the worst hour, how much memory each model needs, how many tokens a normal answer and a long tail use, what latency is acceptable for the product, and how many seconds it takes to load and unload the model.

If traffic goes through a single gateway like AI Router, this view is easier to collect by model, route, and access key instead of by total traffic. That makes it clear where reserve is really needed and where GPUs do not need to be warmed in advance.

What data to collect before calculating

Average daily traffic is almost useless. A warm pool fails not on the “average” hour, but in the short moment when requests arrive in a dense burst. That is why it is better to export load by minute, and for the most sensitive services, even more often.

First, collect a series of incoming requests: how many came in each minute, how the flow changed during the shift, and where the sharp spikes were. Then calculate P95 and P99 over those per-minute counts. These values describe not the usual minute, but the load level that only the busiest 5% and 1% of minutes exceed, which is where capacity usually runs out.

If you only look at the average, the calculation will almost always come out too calm. Over a day, you may have 20 requests per minute, and then at 11:30 it becomes 75. For GPUs, that is no longer a small deviation; it is a completely different operating mode.
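
The gap between the average and the tail is easy to check on per-minute counts. The sketch below uses the nearest-rank percentile definition (one of several in use) and a made-up series of 100 minutes; both are assumptions for illustration.

```python
import math

def percentile(values, p):
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]

# Hypothetical requests-per-minute series: mostly calm, with a few dense minutes.
per_minute = [20] * 90 + [40] * 6 + [60] * 3 + [75]

mean = sum(per_minute) / len(per_minute)
print(mean)                        # 22.95
print(percentile(per_minute, 95))  # 40
print(percentile(per_minute, 99))  # 60
print(max(per_minute))             # 75
```

The mean barely moves off the baseline, while P95 and P99 show that the system regularly sees two to three times that load.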

Do not lump all traffic into one group. A short chat, a long analytics request, and bulk document classification load models in very different ways. At a minimum, split the data into two views: by model type and by usage scenario.

Then you can see which requests can share one pool and which should be counted separately. One stream can live comfortably on a small reserve, while another regularly creates a queue even at the same request rate.

Look not only at successful responses. Cancellations, timeouts, retries, and queue length often reveal the problem earlier than average latency. If the queue grows during the busiest 10 minutes and some customers abandon the request after a few seconds, capacity is already not enough, even if the day-long graph looks acceptable.

Mark the days when traffic behaves differently from usual. In retail, these are promotions; in banking, payout days; in SaaS, releases and mass activation of a new feature. Such dates should not be mixed with quiet days, otherwise capacity planning will give a false picture.

If you calculate load through a single LLM gateway, it helps to keep the same slices by model, provider, and key. Then you can see not only the overall peak, but also the exact scenario that created it. That is more accurate than buying reserve “just in case.”

How to calculate the warm pool step by step

It is better to calculate the warm pool not from the number of GPUs, but from the response time you are willing to give the user. The same reserve may be enough for short text extraction and too small for a chat with long generation. So first set the target latency for each scenario. For example, up to 2 seconds for extracting fields from a document and up to 8 seconds for a response in a work chat.

Then calculate in one sequence, without guesswork.

- Take the heaviest hour from the last 2–4 weeks and count not only requests per minute, but also tokens. For LLMs, this is more honest: 80 short requests and 80 long dialogs load GPUs in completely different ways.

- Convert tokens into the throughput of one model replica. If one replica consistently produces 35 tokens per second at the required context, and the service needs 90 tokens per second at peak, the reserve should cover at least three warm replicas.

- Add buffer for long answers and tails. The average is most misleading here. If 10–15% of requests use a large context or ask for long generation, you usually need another 20–30% on top, otherwise the queue will start growing at the worst possible moment.

- Account for warm-up when switching models. Loading weights, preparing memory, and starting a new process can take from seconds to minutes. If a model cannot be started quickly, keep at least one copy ready in advance, even if it is not needed every hour.

- Check the calculation against the heaviest hour of the week. Look not at the average day, but at the window where you have both high incoming traffic and the longest answers. A healthy result looks simple: the queue does not keep growing for more than a few minutes at a time, and P95 latency stays within the target.
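
The throughput and buffer steps above can be sketched in a few lines. The function name and the 25% tail buffer are illustrative choices within the 20–30% range mentioned; the 90 and 35 tokens per second come from the example in the list.

```python
import math

def warm_replicas(peak_tokens_per_second, replica_tokens_per_second, tail_buffer=0.0):
    """Replicas needed to cover peak token demand plus a fractional tail buffer."""
    needed = peak_tokens_per_second * (1 + tail_buffer) / replica_tokens_per_second
    # A fraction of a replica cannot be run, so always round up.
    return math.ceil(needed)

print(warm_replicas(90, 35))                    # 3
print(warm_replicas(90, 35, tail_buffer=0.25))  # 4
```

Note that the buffer pushes this example from three replicas to four, so it is worth confirming against the heaviest hour that the long tail is really there before paying for it.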

A short example. If on Monday from 10:00 to 11:00 the team gets 120 requests per minute, and half of them go into a long chat, it is risky to calculate from the average response length. It is better to take that hour as a whole and check the reserve specifically against it. In windows like this, it usually becomes clear where the GPUs will sit idle and where they are already not enough.

If you route traffic through AI Router, it is better to calculate the reserve separately for each model or route group. The total request count almost always hides the imbalance: one model can take most of the load and break the entire calculation.

How to tell a normal peak from noise

Money burns not on regular load, but on reacting to the loudest spike. If one unusual hour forces you to keep extra GPUs warm all day, the calculation is already off.

A normal peak repeats. It has a clear time, a similar duration, and a similar volume. Noise looks different: one sharp jump, then silence; or a spike after a mailing, month-end reporting, a release, or an issue in a neighboring service.

Compare not the best and worst day, but 20–30 similar days in a row. Look at load in short windows, for example 15 minutes. If, at the same hour, the median and the 90th percentile keep rising, that is a real operating peak. If only the maximum shoots up and the normal level barely changes, it is noise.
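
A minimal sketch of that comparison for one 15-minute window across 20 similar days. The data is invented, and the rule "maximum more than 1.5 times the 90th percentile" is a hypothetical threshold to tune, not a standard.

```python
import math
import statistics

def summarize_window(daily_values, outlier_ratio=1.5):
    """Summarize the same time window across days and flag an isolated maximum."""
    ordered = sorted(daily_values)
    p90 = ordered[max(1, math.ceil(90 * len(ordered) / 100)) - 1]  # nearest rank
    peak = ordered[-1]
    return {
        "median": statistics.median(ordered),
        "p90": p90,
        "max": peak,
        "max_is_outlier": peak > outlier_ratio * p90,
    }

# Hypothetical requests per minute in the 11:15-11:30 window on 20 working days.
window = [28, 30, 31, 29, 30, 32, 30, 29, 31, 30,
          28, 30, 75, 31, 29, 30, 32, 30, 31, 29]
summary = summarize_window(window)
print(summary)
```

Here the median is 30 and the 90th percentile is 32, while the maximum is 75, so the single loud day is flagged as noise and the reserve would be sized around 32, not 75.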

Seasonality can also be confused with a one-time campaign. Month-end, payday periods, Monday mornings, and evening hours in a contact center are recurring events. A one-day promotion, importing old dialogs, or a mass test run should not determine the reserve size.

A simple rule helps here: keep reserve for spikes that happen several times a week. Mark campaign days and incidents separately. Turn on the queue if wait time has crossed your threshold, for example 2–3 seconds. Do not warm a rare model in advance if it is called only a couple of times a day.

The queue threshold is better set by the cost of waiting, not by intuition. If a spike lasts 5 minutes and warming a new model replica takes almost the same amount of time, a queue is often cheaper. If the queue keeps growing for 20–30 minutes and is already hurting SLA, the reserve is genuinely needed.

The rule is even stricter for rare models. They should not stay in memory all day for one team or a rare scenario. Keep the frequent model warm, and load the rare one on a schedule, on the first request, or route it externally. In a setup with AI Router, that is convenient: constant traffic can go through a predictable warm pool, while rare spikes do not need to be paid for with idle GPUs.

If, after this filtering, the peak still repeats and hits the queue, it is no longer noise. It is part of your normal workload.

Example calculation for a work shift

For a bank, the support chat is busiest from 12:00 to 14:00, when customers check transfers, limits, and card blocks. The team routes simple questions to a lighter model, while disputed cases with long chat history and documents go to a heavier one.

For the calculation, the team does not use the highest spike of the month. That kind of maximum is often tied to a mailing, a mobile app outage, or a one-time promotion. For a warm pool, it is more useful to look at the peak of a normal work shift, for example the 95th percentile in five-minute windows over the last few weeks.

Suppose the work peak at lunchtime is 30 requests per minute. Of those, 83% go to the light model and 17% to the heavy one. With current settings, one warm replica of the light model handles 12 requests per minute, and the heavy one handles 3.

Light model: 30 × 0.83 = 24.9 requests per minute; 24.9 / 12 ≈ 2.1, rounded up to 3 replicas

Heavy model: 30 × 0.17 = 5.1 requests per minute; 5.1 / 3 = 1.7, rounded up to 2 replicas

That gives a warm pool of 5 model replicas for the lunch peak. If a light replica uses 1 GPU and a heavy replica uses 2 GPUs, the total reserve is 3 × 1 + 2 × 2 = 7 GPUs. That is much more accurate than sizing the reserve to cover every rare spike.

Now compare that with the monthly maximum. On one day, the bank saw a jump to 44 requests per minute, but it lasted less than a minute after an app error. If you orient the reserve around that maximum, you would need 4 light and 3 heavy replicas. Two extra replicas would sit idle almost every day.

A short queue of 30–60 seconds usually solves this problem. It smooths the sharp jump while some sessions finish, and it lets you avoid buying reserve for every noisy minute. That is why, for a work shift, it is more reasonable to keep 3 light and 2 heavy replicas rather than inflate the pool to the monthly record.

If latency rises moderately at lunch and the queue does not last more than a minute, the reserve is chosen well. If the queue lasts longer, add capacity to the model that first hit the limit, not to the whole group at once.
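
The arithmetic in this example can be reproduced with a short script, which also makes it easy to rerun with your own traffic shares. The function and dictionary names are illustrative; the numbers are the ones from the bank example.

```python
import math

# Per-replica throughput (requests/min), traffic share, and GPUs per replica.
MODELS = {
    "light": {"share": 0.83, "rpm_per_replica": 12, "gpus": 1},
    "heavy": {"share": 0.17, "rpm_per_replica": 3, "gpus": 2},
}

def size_pool(requests_per_minute):
    """Return (replicas per model, total GPUs) for a given peak request rate."""
    replicas = {
        name: math.ceil(requests_per_minute * m["share"] / m["rpm_per_replica"])
        for name, m in MODELS.items()
    }
    gpus = sum(replicas[name] * MODELS[name]["gpus"] for name in MODELS)
    return replicas, gpus

print(size_pool(30))  # ({'light': 3, 'heavy': 2}, 7)
print(size_pool(44))  # ({'light': 4, 'heavy': 3}, 10)
```

Sizing for the 44-requests-per-minute record would mean 10 GPUs instead of 7, held all month for a spike that lasted under a minute.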

Mistakes that leave GPUs idle

Most often, the warm pool grows not because traffic increased, but because of bad assumptions. The team sees one heavy day, applies the same reserve to every weekday evening, and then keeps excess capacity almost empty for weeks.

The most expensive mistake is calculating reserve from the highest day of the month. That spike is often tied to a one-time mailing, report, release, or an issue with a neighboring service. If you treat it as normal, you pay for hardware that is needed for a couple of hours, not for the whole month. It is much more useful to look at the typical peak by shift and separately plan for rare spikes.

Another common problem is mixing batch jobs and chat in one pool. Their rhythm is different. Chat needs low latency and a short queue, while batch jobs can process large volumes in the background. If you put them on the same GPUs, batch work that has not finished by morning keeps holding memory and decode slots, and daytime chat stalls. In response, the team adds more cards, even though it only needed to separate the traffic.

Many people count only requests per minute and ignore response length. That is a trap. One hundred short replies and one hundred long reports load the pool very differently. If users ask for concise answers during the day and run long generations in the evening, the average RPS barely changes, while GPU time rises noticeably.

Failure of one card should also be planned for in advance. If the pool is sized exactly for the normal peak, any outage turns a normal shift into queues and timeouts. After that, teams often draw the wrong conclusion and keep extra reserve permanently, when what they actually need is a clear N+1 buffer, not constant overprovisioning.
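
A quick way to check whether a pool survives the loss of one replica is sketched below. It reuses the light-model figures from the bank example (24.9 requests per minute at peak, 12 per replica) purely as illustrative inputs, and it treats "one card" as "one replica" for simplicity.

```python
import math

def capacity_with_one_down(replicas, rpm_per_replica):
    """Requests per minute the pool can serve after losing one replica."""
    return max(0, replicas - 1) * rpm_per_replica

def replicas_n_plus_one(peak_rpm, rpm_per_replica):
    """Replicas for the normal peak, plus one spare for a single failure."""
    return math.ceil(peak_rpm / rpm_per_replica) + 1

peak = 24.9
print(capacity_with_one_down(3, 12) >= peak)  # False: 24 req/min is not enough
print(replicas_n_plus_one(peak, 12))          # 4
print(capacity_with_one_down(4, 12) >= peak)  # True: 36 req/min covers the peak
```

The spare is a fixed one-replica addition per pool, which is different from scaling the whole pool up by a percentage after an incident.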

The same warm-up for every model also hurts the budget. Frequent models should stay warm almost all the time. Rare ones should be started only on a schedule, on a router signal, or after the first requests. If you warm a rare model the same way as the main one, it will occupy memory for hours just to handle a few calls a day.

A good sign of a healthy pool is simple: frequent models run steadily, rare models start quickly when demand appears, and the reserve is calculated from the real traffic profile, not from the noisiest day on the calendar.

Quick checks before launch

Before launch, it is worth checking not only the formula, but also the discipline around it. Even an accurate calculation breaks if the team looks at yesterday’s graph, does not know the real latency per model, and keeps an unbounded queue.

First, check the data horizon. If you have at least 2–4 weeks of history, you can already see repeats: shift start in the morning, lunch spike, evening drop, reporting days, or mass mailings. With only two or three days, it is easy to mistake random noise for a pattern and buy extra reserve.

Then look at latency for each model separately. The average again often lulls people into complacency. For launch, it is more useful to know P95: that is what a noticeable share of users will actually see during a busy hour. If one model answers in 1.2 seconds and another drifts to 4–5 seconds, the pool cannot be calculated as if they were the same.

Another mandatory check is the queue. It should be limited both by length and by waiting time. Otherwise, the system will tolerate overload for too long, and you will see the problem too late. In practice, a short queue with a clear failure response is almost always better than a long queue that eats up SLA and hides a GPU shortage.
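
A queue limited by both length and wait time can be sketched as follows. The class name and limits are hypothetical, and the current time is passed in explicitly to keep the example deterministic; a real service would use a monotonic clock and return an explicit failure response to rejected or expired requests.

```python
from collections import deque

class BoundedQueue:
    """FIFO queue that rejects new work when full and skips requests that waited too long."""

    def __init__(self, max_length, max_wait_seconds):
        self.max_length = max_length
        self.max_wait_seconds = max_wait_seconds
        self._items = deque()  # entries are (enqueued_at, request)

    def offer(self, request, now):
        """Return True if the request was queued, False if the queue is full."""
        if len(self._items) >= self.max_length:
            return False
        self._items.append((now, request))
        return True

    def take(self, now):
        """Return the oldest request still within the wait limit, or None."""
        while self._items:
            enqueued_at, request = self._items.popleft()
            if now - enqueued_at <= self.max_wait_seconds:
                return request
            # Expired entries are dropped here instead of being served late.
        return None

queue = BoundedQueue(max_length=2, max_wait_seconds=30)
accepted = [queue.offer("a", now=0), queue.offer("b", now=10), queue.offer("c", now=11)]
served = queue.take(now=35)
print(accepted, served)  # [True, True, False] b
```

Request "c" is refused immediately because the queue is full, and "a" is skipped because it has already waited 35 seconds, so the overload becomes visible instead of hiding in a long line.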

Before launch, it is enough to check five things:

- whether you have at least 2–4 weeks of traffic history;

- whether you have P95 latency for each model, not just average numbers;

- whether queue limits are set by request count and by wait time;

- whether the team knows what to turn off first during overload;

- whether there is a scheduled review point after traffic growth.

The last point is often missed. Traffic grew by 20–30%, but the pool was left unchanged because “it is still holding.” That is an expensive habit: first you start seeing latency tails, and then you rush to add GPUs, with an extra buffer bought out of fear.

If you work through AI Router, part of this check can be done faster: pull data from audit logs, review rate limits by key, and separate real growth from a spike caused by a single customer. After that kind of review, the warm pool usually looks smaller, but it behaves more calmly.

What to do next

After the calculation, do not reserve the whole buffer at once. It is better to take one group of models with similar load, such as a chat for operators or an internal assistant for employees. A pilot like that makes it easier to see where the estimate failed: in response length, in the number of simultaneous requests, or in warm-up time.

Look not only at GPU utilization, but also at the cost of waiting. Sometimes a 2–5 second queue costs less than one more always-warm instance that sits idle for half the shift. If that latency does not break the scenario, a short queue is often more economical than extra reserve.
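
The comparison can be made explicit with a back-of-the-envelope calculation. Every price below is a hypothetical placeholder, including the cost assigned to one second of customer waiting, which each team has to estimate for its own scenario.

```python
def compare_costs(gpu_hour_cost, extra_gpus, hours_kept_warm,
                  queued_requests, avg_wait_seconds, cost_per_wait_second):
    """Return (cheaper option, reserve cost, queue cost) for one shift."""
    reserve_cost = gpu_hour_cost * extra_gpus * hours_kept_warm
    queue_cost = queued_requests * avg_wait_seconds * cost_per_wait_second
    cheaper = "queue" if queue_cost < reserve_cost else "reserve"
    return cheaper, reserve_cost, queue_cost

# One extra GPU kept warm for an 8-hour shift versus 300 requests waiting 4 seconds each.
cheaper, reserve_cost, queue_cost = compare_costs(
    gpu_hour_cost=2.0, extra_gpus=1, hours_kept_warm=8,
    queued_requests=300, avg_wait_seconds=4, cost_per_wait_second=0.01,
)
print(cheaper, reserve_cost, queue_cost)
```

With these placeholder numbers the queue is cheaper, but the result flips quickly if waiting is expensive, as in a voice or operator scenario, so the wait cost deserves the most scrutiny.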

At the start, four steps are enough:

- run a pilot for 2–4 weeks and turn on logs for incoming requests, response time, and generation length;

- separately calculate the cost of reserve and the cost of a short queue during peak hours;

- split the scenarios that are better kept locally from the scenarios that are easier to send through external routing;

- after a month, recalculate the pool from the new logs, not from the first estimate.

That kind of separation quickly removes unnecessary spending. Local hosting is usually needed where low latency, predictable pricing, and your own storage boundary matter. External routing is better suited to rare, expensive, or seasonal models that do not make sense to keep warm all day.

If the team needs data to stay in Kazakhstan, the pilot can be tested through AI Router on airouter.kz. The service has a single OpenAI-compatible endpoint, data can be stored inside the country, and production features include audit logs, PII masking, and key-level limits. It is a convenient way to run real requests without changing the SDK or code, then decide which models should stay on your own infrastructure and which should go through the gateway.

After a month, the picture should become simple: during the right hours, the queue is shorter and fewer GPUs are idle. If that did not happen, do not expand the warm pool automatically. First check the routes, limits, response length, and the hours when peak LLM load actually occurs.

Frequently asked questions

What matters more when calculating reserve: average traffic or peak traffic?

Look at short spikes by the minute and at P95 or P99, not the daily average. Those short windows are when the queue grows fastest, and they show how many warm replicas the model actually needs.

How can I tell that the GPUs are already not enough?

The reserve is too small if, during busy minutes, queue length, timeouts, retry attempts, and P95 latency all rise. Average latency often looks fine even when some users are already waiting too long.

How much traffic history do I need before calculating?

For a first calculation, 2–4 weeks of logs are usually enough. Over that period, you can see repeating patterns like shift starts, lunch spikes, month-end activity, and other normal windows without the distortion of a single noisy day.

Why not calculate reserve based only on requests per minute?

Because response length and context size change the load a lot. The same request volume can be easy with short answers and can quickly saturate GPUs with long generations.

When should a model stay warm, and when should it be loaded on demand?

Keep a frequently used model in memory during stable demand hours. A rare model is better started on a schedule, on the first request, or routed externally, otherwise the GPUs will sit idle for most of the day.

Should I plan reserve for the highest spike of the month?

Usually not. If a spike happened only once because of an outage, a mass mailing, or a promo, it is cheaper to absorb it with a short queue than to pay every day for extra copies.

What reserve should I add on top of the base calculation?

Start with reserve for long answers and for one-card failure. In practice, a moderate buffer above the typical peak is often enough if you also limit the queue by both length and wait time.

What if the model takes a long time to warm up?

If warm-up takes tens of seconds or minutes, keep at least one copy warm during working hours. Otherwise, a short spike will be over before the new copy can clear the queue.

Should chat and batch be mixed in one pool?

It is better to separate those workloads. Chat needs fast responses, while batch jobs can wait; if you put them on the same GPUs, background processing will start interfering with live conversations and inflate the reserve without any benefit.

How does AI Router help calculate the warm pool more accurately?

If traffic goes through AI Router, look at slices by model, route, and access key. That makes it easier to see which scenario is creating the peak and avoid warming extra GPUs where demand is almost zero.