Shadow Traffic for a Model Migration Without Breaks or Surprises

Shadow traffic for model migration helps compare answers, latency, and cost before launch. Learn how to measure differences and switch calmly.

Why a direct model swap brings surprises

A direct model replacement almost never goes unnoticed. Even if you keep the same prompt, the same SDK, and the same API, the new model responds differently: the tone, the length, the order of sections, and the output format all shift. To a human reader the difference may look small, but for a real workflow it is already different behavior.

A good example is JSON or any other strict template. The old model may have consistently returned a short object with no extra text, while the new one suddenly adds an explanation before the JSON, renames fields, or hedges its answer. A user will hardly notice. The parser will notice right away.

Text quality is not the only thing that changes. A new model often has different latency, a different average response length, and a different cost per response. In a test of twenty requests, that is easy to miss. On live traffic, an extra 700–900 ms and a few dozen more tokens per response quickly turn into a queue, a higher bill, and a hasty rollback.

Existing checks also tend to create false confidence. Teams usually build tests around the errors they have already seen: an empty response, broken JSON, forbidden words, an exceeded limit. A new model fails in its own way. It may fail less often in a formal sense, but more often drift into evasive answers, confuse roles, lose details from long context, or refuse too readily.

The surprises usually show up only after launch. Support notices that the bot gives longer, slower answers. Analysts see a spike in tokens and cost. The integration breaks on rare output formats. The business sees the problem not in tests, but in conversion or CSAT.

Even if the technical switch is very simple, the risk does not disappear. In an OpenAI-compatible gateway like AI Router, you can quickly switch the model or provider without rewriting the code. That is convenient, but precisely because it is so easy, teams sometimes underestimate behavioral differences and move traffic too early.

It is better to treat a model migration not as a small adjustment, but as a separate product change. If user requests are live, a direct switch almost always costs more than a short shadow-check period.

When shadow traffic is truly needed

Parallel checking is not needed for everyone. If a model writes drafts for an internal team, the risk is often low: a person will notice a strange answer and fix it. But if the answer affects money, timing, or risk, you should not change models blindly.

The first obvious case is when something happens automatically after the model answers. For example, an LLM chooses a ticket category, sets a priority, drafts a customer reply, or extracts contract fields. Here a mistake costs more than time: it can send a request to the wrong place, delay a payment, or start a dispute with a customer.

A shadow launch is especially useful if you already have long-standing prompts built for the old model. On paper, the new model may be stronger. In practice, it reads instructions differently, handles format differently, and changes the tone of the answer. A prompt that worked reliably for months can suddenly start adding extra text, skipping fields, or inventing facts with too much confidence.

There is also a more practical reason: you want to compare several providers honestly on the same data. Without a parallel run, the comparison quickly gets distorted. One provider gets easy requests in the morning, another gets hard ones in the evening, and the conclusions become shaky. Shadow mode removes that confusion: both versions see the same stream.

The risk is usually higher in support, sales, finance, and compliance, and in any scenario where the next system expects a strict output format. In support and sales, the cost of a miss becomes visible quickly. One bad answer is not a disaster on its own. The problem starts when there are hundreds of them and the team learns about it from customers.

How to run parallel requests

Start not with the whole stream, but with a copy of part of the real traffic. For the first run, one segment is often enough: for example, only support requests or only data extraction tasks. If you mix all scenarios at once, you will get a lot of numbers and very little value.

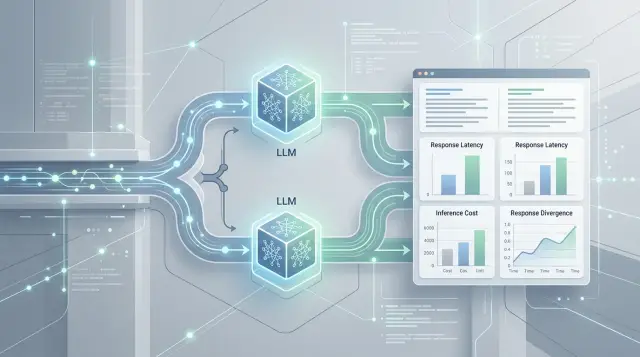

Send each request to two models at the same time. The first model remains the live one and answers the user. The second one runs in shadow mode and is never shown to the user. This lets you test the new model on live data without risking the interface, the SLA, or customer complaints.

Such a test only works when both models receive the same input. Do not change the prompt, temperature, token limit, or tool set along the way if the goal is an honest comparison. Otherwise, later it will be hard to tell whether the model answered differently or you simply gave it different conditions.

Store not only the final text. For each request, you need the same set of data: the prompt and system instructions, call parameters, the current model response and the new model response, latency on each side, tokens, and cost. Add an experiment ID, prompt version, and scenario type. In a few days, that will save hours of analysis.
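One possible shape for such a record, as a sketch: the field names are hypothetical, and writing one JSON object per line (JSONL) is just one convenient storage choice.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelResult:
    model: str
    answer: str
    latency_ms: float
    input_tokens: int
    output_tokens: int
    cost: float


@dataclass
class ShadowRecord:
    experiment_id: str
    prompt_version: str
    scenario: str              # e.g. "support", "extraction", "json"
    system_prompt: str
    user_prompt: str
    live: ModelResult
    shadow: ModelResult
    params: dict = field(default_factory=dict)  # temperature, max_tokens, tools, ...

    def to_json_line(self) -> str:
        # asdict() also converts the nested ModelResult objects into dicts.
        return json.dumps(asdict(self), ensure_ascii=False)


record = ShadowRecord(
    experiment_id="exp-001",
    prompt_version="support-v3",
    scenario="support",
    system_prompt="Answer only from the knowledge base.",
    user_prompt="Where is my order 483921?",
    live=ModelResult("old-model", "Your order is on its way.", 820.0, 410, 38, 0.0011),
    shadow=ModelResult("new-model", "Order 483921 has shipped.", 610.0, 410, 52, 0.0009),
    params={"temperature": 0.2, "max_tokens": 300},
)
line = record.to_json_line()
```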

It is better to split the stream by task type before launch. Look at chat, classification, summarization, field extraction, and JSON generation separately. A model may write good text, but struggle with structured output. If you put everything into one sample, that failure is easy to miss.

Parallel requests are easier to run through a single gateway where the call format is already the same across providers. If the team works through AI Router, you can send a copy of the request to another model without building a new integration for each vendor. The point is not a tidy-looking experiment, but seeing the difference on live tasks before switching.

What to compare besides the overall impression

Overall impression is often misleading. A new model may write in a livelier and more confident way, but still confuse facts, break JSON, or answer more slowly during peak hours. In a shadow launch, it is more useful to look not at "like or dislike," but at the metrics that actually affect the product.

First check how well the model follows instructions. Count cases where it did not meet an explicit requirement separately from cases where it invented a fact or drifted off topic. These are different types of failures. A support bot, for example, may sound convincing but still violate the rule of "answer only from the knowledge base."

If the answer is processed by code afterwards, format matters more than style. For JSON, look not only at validity, but also at required fields, allowed tags, value types, and empty fields where they should not be. One extra paragraph before the JSON can break the whole scenario, even if the text itself looks fine.
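A sketch of such a check using only the standard `json` module. The schema (`category`, `priority`, `summary`) and the allowed priority values are hypothetical; the point is that a reply fails if it is not exactly one JSON object, even when a lenient parser could have salvaged it.

```python
import json

# Hypothetical schema for a support-ticket classifier.
REQUIRED = {"category": str, "priority": str, "summary": str}
ALLOWED_PRIORITY = {"low", "medium", "high"}


def check_structured_answer(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the answer passed."""
    text = raw.strip()
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        # Distinguish "prose around a JSON object" from truly broken JSON.
        start, end = text.find("{"), text.rfind("}")
        if start > 0 or (end != -1 and end < len(text) - 1):
            return ["extra text around JSON"]
        return ["invalid JSON"]
    if not isinstance(data, dict):
        return ["top level is not an object"]

    problems = []
    for name, expected_type in REQUIRED.items():
        value = data.get(name)
        if value is None or value == "":
            problems.append(f"missing or empty field: {name}")
        elif not isinstance(value, expected_type):
            problems.append(f"wrong type for {name}")
    priority = data.get("priority")
    if isinstance(priority, str) and priority and priority not in ALLOWED_PRIORITY:
        problems.append(f"unexpected priority: {priority}")
    return problems
```

Returning a list of named problems, rather than a single pass/fail flag, lets you count invalid JSON, missing fields, and enum violations separately in the report.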

It helps to keep a short checklist:

- share of answers with instruction violations

- share of factual errors on a validation set

- percentage of invalid JSON and missing fields

- average answer length and share of cut-off responses

- p50 and p95 latency

- request cost and total flow cost, including retries

Response length also affects the result. Too long means extra tokens, higher cost, and more latency. Too short often means a missed step, an omission, or a missing field. Separately track the share of cut-off answers: when the model hits the token limit, ends mid-sentence, or fails to close JSON.
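A crude heuristic for counting cut-off answers, as a sketch. It assumes the API reports `finish_reason == "length"` when the token limit was hit, as many OpenAI-compatible APIs do; check what your provider actually returns. The brace-counting and sentence-ending rules are deliberately simple and will misjudge some answers.

```python
from typing import Optional

# Characters that usually end a complete sentence or a closed structure.
_CLEAN_ENDINGS = tuple('.!?…"\')]}')


def looks_cut_off(text: str, finish_reason: Optional[str], expects_json: bool = False) -> bool:
    """Crude heuristic for a truncated answer."""
    # Assumption: "length" means the max_tokens limit stopped generation.
    if finish_reason == "length":
        return True
    stripped = text.rstrip()
    if not stripped:
        return False  # an empty answer is a different failure, counted elsewhere
    if expects_json:
        # Unbalanced braces suggest an unclosed object (ignores braces inside strings).
        return stripped.count("{") > stripped.count("}")
    return not stripped.endswith(_CLEAN_ENDINGS)


def cut_off_share(answers: list[dict]) -> float:
    """Share of answers that look truncated; each answer is a dict with 'text' and optional keys."""
    if not answers:
        return 0.0
    flagged = sum(
        looks_cut_off(a["text"], a.get("finish_reason"), a.get("expects_json", False))
        for a in answers
    )
    return flagged / len(answers)
```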

For speed, you almost always need two numbers: p50 and p95. p50 shows the usual user experience. p95 shows the unpleasant tail that creates complaints. If the median barely changed but p95 doubled, the new model may already be a bad choice for chat, an agent, or an API chain.
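A small illustration with hypothetical latencies for the same 20 requests, using the nearest-rank percentile definition (other definitions interpolate and give slightly different numbers). Twenty samples are far too few for a real p95; the numbers only show how a stable median can hide a much worse tail.

```python
import math


def percentile(samples: list, p: float):
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Hypothetical latencies in ms for the same 20 requests.
live_ms = [800] * 18 + [900, 1000]
shadow_ms = [780] * 18 + [2100, 2400]

for name, ms in (("live", live_ms), ("shadow", shadow_ms)):
    print(name, "p50:", percentile(ms, 50), "p95:", percentile(ms, 95))
# live p50: 800 p95: 900
# shadow p50: 780 p95: 2100
```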

The same goes for cost. Look not only at the cost of one request, but at the cost of the whole flow. A cheap model can easily end up more expensive if it writes 25% longer answers, triggers retries more often, or forces repeat requests because of broken formatting.
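A worked example with hypothetical prices (in arbitrary currency units per 1,000 tokens) and retry rates. It assumes each attempt fails independently with the same probability and is retried until it succeeds, so the expected number of attempts per flow is 1 / (1 - retry_rate), and every retry pays for the input tokens again.

```python
def cost_per_attempt(in_tokens: int, out_tokens: int, in_price_per_1k: float, out_price_per_1k: float) -> float:
    return (in_tokens * in_price_per_1k + out_tokens * out_price_per_1k) / 1000


def cost_per_flow(attempt_cost: float, retry_rate: float) -> float:
    # Geometric retries: expected attempts = 1 / (1 - retry_rate).
    if not 0 <= retry_rate < 1:
        raise ValueError("retry_rate must be in [0, 1)")
    return attempt_cost / (1 - retry_rate)


# Old model: pricier per token, 200 output tokens, 2% retries.
old = cost_per_flow(cost_per_attempt(500, 200, 0.5, 1.5), retry_rate=0.02)   # ≈ 0.561
# New model: cheaper per token, but 25% longer answers and 20% retries.
new = cost_per_flow(cost_per_attempt(500, 250, 0.4, 1.2), retry_rate=0.20)   # ≈ 0.625
```

Per attempt, the new model is cheaper (0.50 vs 0.55), yet per flow it costs more once longer answers and retries are counted.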

A good replacement rarely wins on one number alone. Usually it has to pass all four at once: accuracy, format, latency, and budget. If even one of them drops, surprises after switching are almost guaranteed.

How to measure differences without confusion

Shadow launches usually fail not on the requests themselves, but on the evaluation. The team sees a 90% average match and thinks the new model is ready, even though it may regularly fail in one narrow but expensive scenario.

First define what counts as success, failure, and the gray area, and lock those definitions in before the test. Otherwise, people will start arguing after the first questionable answers.

- Success: the answer matches in meaning, passes structure checks, does not violate safety rules, and does not expose PII.

- Failure: the model changed the decision, broke the format, produced risky text, or leaked or failed to mask sensitive data.

- Gray area: the meaning is almost the same, but the answer needs manual review. For example, the model chose the right category, but added an unnecessary explanation or phrased the conclusion too cautiously.
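These three outcomes can be encoded as a small rule, sketched below. The boolean inputs are hypothetical and would come from your own automated checks; the key design choice is that any hard failure outranks the gray area, no matter how similar the wording looks.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    SUCCESS = "success"
    FAILURE = "failure"
    GRAY = "gray"


@dataclass
class Comparison:
    same_decision: bool   # e.g. same category or status as the reference
    structure_ok: bool    # schema and format checks passed
    safety_ok: bool       # no risky text
    pii_ok: bool          # sensitive data masked, nothing leaked
    needs_review: bool    # meaning matches, but wording or extras look off


def classify(c: Comparison) -> Verdict:
    # Any hard failure wins, regardless of how close the text looks.
    if not (c.same_decision and c.structure_ok and c.safety_ok and c.pii_ok):
        return Verdict.FAILURE
    if c.needs_review:
        return Verdict.GRAY
    return Verdict.SUCCESS


# Right category, but an unnecessary explanation was added: gray area.
verdict = classify(Comparison(True, True, True, True, needs_review=True))
```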

Automated tests should check not only the text, but also the response form. If the application expects JSON, check the schema, required fields, value types, enum values, and tool call names. One broken bracket does not look scary in a report, but in production it can stop an entire scenario.

Security and PII should be measured separately from normal quality. Here, the average score says very little. If the new model stops masking an ID number, card number, or phone number even 0.5% of the time, that is not a small difference; it is a stop signal.
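A sketch of a separate PII gate. The patterns are illustrative only (13–19 digit card numbers confirmed with the Luhn checksum, and phone numbers starting with +7); real detection rules should be agreed with your security team. With the default `max_rate=0.0`, a single leak in the sample is a stop signal, in line with the point above.

```python
import re

# Illustrative patterns only, not a complete PII detector.
CARD_RE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")  # 13-19 digits, optional single separators
PHONE_RE = re.compile(r"\+7[\s-]?\(?\d{3}\)?[\s-]?\d{3}[\s-]?\d{2}[\s-]?\d{2}")


def luhn_ok(digits: str) -> bool:
    """Luhn checksum; weeds out most random digit runs that are not card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def leaks_pii(text: str) -> bool:
    for match in CARD_RE.finditer(text):
        if luhn_ok(re.sub(r"\D", "", match.group())):
            return True
    return PHONE_RE.search(text) is not None


def pii_stop_signal(answers: list, max_rate: float = 0.0) -> bool:
    """True if the share of answers with unmasked PII exceeds max_rate (default: any leak)."""
    if not answers:
        return False
    leaks = sum(leaks_pii(a) for a in answers)
    return leaks / len(answers) > max_rate
```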

Average numbers across all requests often hide the problem. Split traffic at least by task type, language, prompt length, and user segment. A hypothetical 93% match overall means nothing if the model drops to 74% on long requests in Kazakh.
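A sketch of per-segment match rates on toy data. The record keys (`task`, `language`, `length`, `match`) and the 0.85 floor are hypothetical; the toy numbers show a 94% overall match that hides a segment sitting near 71%.

```python
from collections import defaultdict


def match_rate_by_segment(records, keys=("task", "language", "length")):
    """Share of matching answers per segment; each record needs the keys plus a bool 'match'."""
    counts = defaultdict(lambda: [0, 0])  # segment -> [matches, total]
    for r in records:
        segment = tuple(r[k] for k in keys)
        counts[segment][0] += int(r["match"])
        counts[segment][1] += 1
    return {seg: matched / total for seg, (matched, total) in counts.items()}


def weak_segments(rates, min_rate=0.85):
    return sorted(seg for seg, rate in rates.items() if rate < min_rate)


def rows(task, language, length, match, n):
    return [{"task": task, "language": language, "length": length, "match": match}] * n


# Toy data: 94% overall, but long Kazakh-language requests are much worse.
records = (
    rows("support", "ru", "short", True, 84) + rows("support", "ru", "short", False, 2)
    + rows("support", "kk", "long", True, 10) + rows("support", "kk", "long", False, 4)
)
overall = sum(r["match"] for r in records) / len(records)
rates = match_rate_by_segment(records)
```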

It is also useful to look at the cost of an error. For a draft email, a style difference can go into the gray area. For a banking chatbot, the wrong ticket status or an extra field in JSON should be counted as a failure right away.

Do not collect questionable cases in one common pile. Take a manual sample for each segment and review it on one scale. Usually 30–50 examples per segment are enough if you choose the most frequent and the most risky requests.
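One way to build that manual sample, sketched under assumptions: each record carries hypothetical `segment` and `verdict` fields, risky cases (anything not marked "success") go in first, and the rest of the quota is filled with a random draw, which roughly follows how frequent each request type is. A fixed seed keeps the sample reproducible between reviewers.

```python
import random
from collections import defaultdict


def review_sample(records, per_segment=40, seed=7):
    """Pick up to per_segment cases per segment: risky ones first, then a random fill."""
    rng = random.Random(seed)
    by_segment = defaultdict(list)
    for r in records:
        by_segment[r["segment"]].append(r)

    sample = {}
    for segment, items in by_segment.items():
        risky = [r for r in items if r["verdict"] != "success"]
        rest = [r for r in items if r["verdict"] == "success"]
        picked = risky[:per_segment]
        room = per_segment - len(picked)
        picked += rng.sample(rest, min(room, len(rest)))
        sample[segment] = picked
    return sample


records = (
    [{"segment": "support", "verdict": "success", "id": i} for i in range(100)]
    + [{"segment": "support", "verdict": "gray", "id": 100 + i} for i in range(5)]
    + [{"segment": "extraction", "verdict": "success", "id": 200 + i} for i in range(10)]
)
sample = review_sample(records, per_segment=40)
```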

When the team keeps a separate score for structure, meaning, security, and PII, the switching decision becomes calmer. It becomes clear not just that "the model seems better," but where it truly passes the test and where it is still too early to release it.

A simple real-world example

Imagine an e-commerce support bot. Most often, it answers a simple question: "Where is my order 483921?" The user expects one short answer with the status, not a long conversation. The team wants to try a new model because it is noticeably faster for messages like this.

Switching everyone at once is risky. The old model already reads the order number well, even from messy text where the customer uses no punctuation or adds extra words. If the new model gets the number wrong even once, the bot will show someone else’s status or say the order was not found. For support, that means extra complaints and manual work.

So the team enables shadow mode. The bot still shows the old model’s answer, but the system also sends the same request to the new model and stores both results. For each case, the team looks at four simple things: which order number each model extracted, whether the final status matched, how long the response took, and whether the model asked for any unnecessary clarification.

After a few days, the picture becomes clear. For short questions like "Order status 483921," the new model answers on average 0.7 seconds faster and almost never differs from the old one. But in longer threads, where the customer first argues about delivery, then remembers an old number, and at the end asks to check a new order, the new model loses context more often. It may take the first number from the conversation instead of the last one, or ask again for something that was already in the chat.

At that point, the team does not need one final verdict for the whole model. It splits the traffic into two types. Simple cases, where there is one clear order number and no more than two messages, can be moved to the new model fairly quickly. More complex conversations are better left on the old model for now, while the new one gets a refined prompt, a stricter response format, or a separate order-number check before sending.
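A sketch of the routing rule and the separate order-number check. It assumes order numbers are exactly six digits, like 483921 in the example; the model names are placeholders. The check compares the model's extracted number with the last number the customer mentioned, which catches the "took the first number from the conversation" failure.

```python
import re

ORDER_RE = re.compile(r"(?<!\d)\d{6}(?!\d)")  # assumption: order numbers are exactly 6 digits


def last_order_number(customer_messages):
    """The most recently mentioned order number, or None."""
    last = None
    for msg in customer_messages:
        found = ORDER_RE.findall(msg)
        if found:
            last = found[-1]
    return last


def order_number_check(customer_messages, model_number) -> bool:
    """True if the model picked the most recently mentioned order number."""
    expected = last_order_number(customer_messages)
    return expected is not None and model_number == expected


def route(customer_messages) -> str:
    # Simple case: at most two messages and exactly one distinct order number.
    numbers = {n for msg in customer_messages for n in ORDER_RE.findall(msg)}
    simple = len(customer_messages) <= 2 and len(numbers) == 1
    return "new-model" if simple else "old-model"


thread = ["The courier was late again", "My old order was 112233", "Please check my new order 483921"]
```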

This approach removes unnecessary risk. The team is not arguing about which model is better overall. It sees where the new model is already ready for production and where it is still too early to put it in front of live customers.

Common mistakes in shadow launches

The most common mistake looks harmless: teams compare two models under different conditions. One has temperature 0.2, the other 0.8. One has a 300-token limit, the other 1,200. Sometimes they even change the system prompt and then argue about which model is worse. That test proves nothing. If you are checking a new model in shadow mode, first align all generation parameters, response format, and post-processing rules.
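A small guard against this mistake, as a sketch: diff the two call configurations before the shadow run starts and refuse to proceed if anything other than the model differs. The config keys are hypothetical.

```python
def param_diff(live_cfg: dict, shadow_cfg: dict, ignore=("model",)) -> dict:
    """Return {key: (live_value, shadow_value)} for every setting that differs."""
    keys = (set(live_cfg) | set(shadow_cfg)) - set(ignore)
    return {
        k: (live_cfg.get(k), shadow_cfg.get(k))
        for k in sorted(keys)
        if live_cfg.get(k) != shadow_cfg.get(k)
    }


def require_same_conditions(live_cfg: dict, shadow_cfg: dict) -> None:
    diff = param_diff(live_cfg, shadow_cfg)
    if diff:
        raise ValueError(f"shadow run is not comparable, settings differ: {diff}")


live = {"model": "old-model", "temperature": 0.2, "max_tokens": 300, "system_prompt": "support-v3"}
shadow = {"model": "new-model", "temperature": 0.8, "max_tokens": 1200, "system_prompt": "support-v3"}
```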

Using the same SDK does not protect you from this confusion either. If the team simply points base_url at another LLM gateway, the code may stay the same while the model behavior changes. So compare not "the old integration" with "the new integration," but two models behind the same wrapper.

The second mistake is drawing conclusions from too small a sample. Ten or twenty requests are only good for a first look. They do not show rare but expensive failures: missing a required field, a response that is too long, the wrong tone, or drifting away from the instruction. Usually the problem appears on the hundredth or thousandth request, when long conversations, noisy input, and odd phrasing enter the stream.
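A quick way to see why small samples miss rare failures. Assuming failures are independent and occur at a constant rate p, the chance of seeing at least one in n requests is 1 - (1 - p)^n, so reaching confidence c takes n = ln(1 - c) / ln(1 - p) requests, rounded up.

```python
import math


def chance_to_see(failure_rate: float, n: int) -> float:
    """Probability of at least one failure in n independent requests."""
    return 1 - (1 - failure_rate) ** n


def requests_needed(failure_rate: float, confidence: float = 0.95) -> int:
    """Smallest n with at least `confidence` chance of seeing one failure."""
    return math.ceil(math.log(1 - confidence) / math.log(1 - failure_rate))


print(round(chance_to_see(0.005, 20), 3))  # 0.095: twenty requests catch a 0.5% failure about 1 time in 10
print(requests_needed(0.005))              # 598 requests for a 95% chance
print(requests_needed(0.01))               # 299 requests for a 95% chance
```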

Another trap is looking only at average latency. The average often looks fine even if the model has a long response-time tail. Users do not notice the average; they notice freezes, timeouts, and spikes. Look at least at p95, error share, answer length, and the number of repeated requests after a failure.

Teams often mix safe and risky scenarios in one report. Simple FAQs, field extraction from a form, and email drafts cannot be judged by the same rules as credit scoring, medical prompts, or government-service answers. These tasks have different costs of error. A model may write short summaries very well and still handle strict JSON poorly in forms or confuse required warnings.

The last mistake is the most expensive: the new model passes the shadow test, and the team switches everyone over immediately. It is better to move step by step. First internal users, then a small share of real traffic, then separate segments. If something goes wrong, you can roll back quickly without noise and without a long list of incidents.

A quick checklist before switching

You should move traffic to a new model only after a short but strict check. If quality improved on some requests but latency doubled, you will get new complaints instead of value. The same applies to cost: a small gain in accuracy does not always justify a bigger bill.

Before switching, the team should lock the thresholds. Usually this is a simple set: a minimum quality level, an upper latency limit, and a clear cap on the cost of one request or the whole chain of calls. If the new model fails even one threshold, it is too early to move it into the main traffic.
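A minimal sketch of such a gate. The threshold values and metric names are hypothetical; the function returns every failed threshold rather than stopping at the first, so the report shows the whole picture, and an empty list means the model may move on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    min_quality: float        # e.g. share of "success" verdicts
    max_p95_ms: float
    max_cost_per_flow: float


def gate(metrics: dict, t: Thresholds) -> list:
    """Names of failed thresholds; an empty list means the model may take traffic."""
    failures = []
    if metrics["quality"] < t.min_quality:
        failures.append("quality")
    if metrics["p95_ms"] > t.max_p95_ms:
        failures.append("latency")
    if metrics["cost_per_flow"] > t.max_cost_per_flow:
        failures.append("cost")
    return failures


limits = Thresholds(min_quality=0.95, max_p95_ms=1500, max_cost_per_flow=0.60)
result = gate({"quality": 0.97, "p95_ms": 2100, "cost_per_flow": 0.55}, limits)
```

Here quality and cost pass, but the p95 tail fails, so the model stays in shadow mode.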

You need:

- a dashboard, or at least a table, with quality, latency, and cost thresholds for each important request type

- a log of questionable and bad answers with the prompt, response, reference answer, and a short note on what went wrong

- a rollback plan: who makes the decision, how the old model is restored, and how many minutes it takes

- monitoring by request type, not just an overall average

A single aggregate metric hides failures in narrow scenarios far too often.

A log of questionable answers is useful even when average metrics look good. That is where the dangerous small problems show up: the new model confidently invents details, misses a step in the instruction more often, or handles JSON formatting worse. Five such examples often say more than a pretty overall chart.

The rollback plan is worth testing by hand. One responsible person should know what to do if, ten minutes after increasing traffic share, the error rate rises or conversion drops. If the model is switched through a compatible gateway like AI Router, make sure in advance that returning to the old model is only a routing or configuration change, not a code fix under pressure.

Average values rarely save you. Look separately at search, summarization, data extraction, support, code generation, and your other scenarios. When the team already knows which signal should increase traffic share and which should trigger an immediate rollback, the transition goes much more smoothly.

What to do after a successful test

Even after a good result, do not switch the whole stream at once. Any test misses rare cases. Real traffic almost always brings new wording, long conversations, and unexpected data combinations.

Start with a small share of live requests. Often 1–5% is enough for the first stage, then 10%, 25%, 50%, and only after that 100%. Between stages, let the system run for at least a few hours, and for important scenarios, for a full day.
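A sketch of a stable traffic split, assuming requests carry a user ID. Hashing the ID into 100 buckets keeps each user on the same model between requests, and raising the percentage only moves users from the old model to the new one, never back. The model names and the `new_model_enabled` flag are hypothetical; the flag acts as a one-line rollback.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user to a stable bucket in 0..99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def pick_model(user_id: str, rollout_percent: int, new_model_enabled: bool = True) -> str:
    # The flag lets the on-call engineer send everyone back to the old model at once.
    if new_model_enabled and bucket(user_id) < rollout_percent:
        return "new-model"
    return "old-model"


users = [f"user-{i}" for i in range(10_000)]
share_at_10 = sum(pick_model(u, 10) == "new-model" for u in users) / len(users)
```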

A simple sequence usually helps:

- turn on the new model for a small share of traffic

- watch errors, latency, cost, and user complaints

- increase the share only if the metrics stay stable

- keep the old model as a quick rollback

- review questionable cases separately after each stage
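The sequence above can be sketched as a simple stage controller. The stage list and the four-hour minimum are hypothetical defaults; `metrics_stable` would come from whatever threshold check the team has fixed. Any instability sends the share straight back to zero rather than one step down.

```python
STAGES = (0, 1, 5, 10, 25, 50, 100)  # hypothetical rollout percentages


def next_stage(current: int, metrics_stable: bool, hours_at_stage: float, min_hours: float = 4) -> int:
    """Decide the next traffic share for the new model."""
    if not metrics_stable:
        return 0  # roll back: all traffic to the old model
    if hours_at_stage < min_hours:
        return current  # not enough time at this stage yet
    i = STAGES.index(current)
    return STAGES[min(i + 1, len(STAGES) - 1)]
```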

It is better not to remove the old model right away. Keep it nearby for a few more days, and for sensitive tasks, for a week. This is especially useful when a rare scenario appears: for example, the new model handles chat well but performs worse on short classification requests.

A good practice is to keep a simple switch flag. Then the on-call engineer or service owner can restore the previous model in minutes, without rushed code changes.

After the first week, revisit the prompts. Often the problem is no longer the model itself, but old instructions written for a different response style. The new model may add explanations nobody asked for, change the JSON format, or handle long context differently. A small prompt edit sometimes has a bigger effect than another round of model selection.

If you often compare providers or test several models in parallel, it is more convenient to keep one OpenAI-compatible endpoint. In AI Router, the team changes the base_url to api.airouter.kz and continues using the same SDKs, code, and prompts. This makes gradual rollout and rollback much easier.

For teams in Kazakhstan, there is one more practical check before full launch: make sure data storage, audit logs, and PII masking are configured just as strictly as they were during the test. On paper, the migration may look successful, but production often breaks on exactly these details.

If, after a few days, the metrics are stable, manual review finds no unpleasant surprises, and support sees no new complaints, then the new model can be considered the main one.

Frequently asked questions

Why is shadow traffic needed when changing models?

Because a direct model swap changes not only the text, but also the service behavior. Shadow traffic lets you look at live requests and compare answers, latency, and cost without breaking the user experience.

When can you avoid a shadow launch?

You can skip it if the model only writes drafts for an internal team and a person checks everything by hand before sending. If the response triggers an action, affects money, deadlines, or a strict format, switching blindly is not a good idea.

Which traffic should be used for the first run?

For the first run, take one clear segment instead of the whole stream at once. Most often, one request type is enough, such as support or field extraction, so you can quickly spot real failures without getting lost in noise.

What should match between the main and shadow model?

Keep the prompt, system instructions, temperature, token limit, and tools the same. If you change both the model and the calling conditions, no one will be able to tell what actually caused the difference.

Which metrics should you look at first?

First look at instruction following, format validity, p50 and p95 latency, answer length, and total flow cost. A general impression is not enough: a model may sound nicer, but still break JSON more often or respond more slowly during peak load.

How do you know a difference has become dangerous?

Look at the cost of an error, not just how similar the text is. If the model changes a ticket status, reveals PII, breaks JSON, or departs from a rule, count it as a failure even if the wording looks almost the same.

How should JSON and other strict formats be checked?

Check not only whether the JSON is valid, but the full schema: required fields, value types, enums, and any extra text before the object. One paragraph before the JSON or an empty field in the wrong place can break the scenario just as fast as a broken bracket.

How many requests are needed to make a decision?

Ten or twenty requests are enough only for a quick look. To make a switching decision, you need a stream with long conversations, noisy input, and rare cases; it also helps to manually review at least 30–50 examples per risky segment.

How do you safely move users to a new model?

Do not turn on the new model for everyone at once after the test. Give it a small share of live traffic, watch errors, latency, cost, and user complaints, and keep the old model nearby as a quick rollback.

What if the new model is good in only some scenarios?

Do not look for one overall verdict for the whole model. Split traffic by scenario and move only the cases where the new model already holds quality, format, and speed; leave the rest on the old model or improve the prompt.