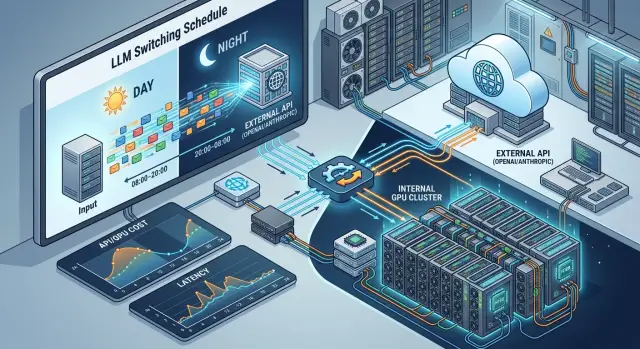

Scheduled switching between hosted and self-hosted models

Switching between hosted and self-hosted models can reduce cost and latency if you separate use cases by time of day, data sensitivity, and load spikes.

What the real problem looks like

During the day, the system lives by one set of rules; at night, by another. If the chat for operators, knowledge base search, or CRM suggestions freezes during working hours, the business loses time immediately. At night, the cost of a failure is lower: batch processing can wait 10-20 minutes if that does not break the morning cycle.

Load is different too. Online requests arrive in bursts, need low latency, and require a predictable response. Batch jobs such as nighttime dialogue labeling, document review, or large-scale summarization can tolerate a queue and longer processing. If both types of load go through one model and one environment, the team quickly gets extra costs, queues, and disputes over priorities.

Data is even stricter. In banking or telecom, some requests contain PII, internal notes, contract numbers, and operational fields. Such data often makes sense to keep within the country and within your own environment. Anonymized text, request classification, or nighttime reports can be sent to an external LLM API if the price is better there or the model choice is broader.

That is why switching between hosted and self-hosted models usually looks less like an architect’s whim and more like a normal operational task. The same model rarely remains the best choice all day. In the morning, stability and a fast response matter most. At night, the price for large volume matters more. In the middle of the day, customer request traffic may spike, and then batch work is better moved outside temporarily or paused until a quiet window.

Usually it comes down to three questions: which scenario must not fail, which data must not leave, and during which hours tokens cost the most. If you do not answer these in advance, the team either overpays for always-on internal GPU capacity or sends sensitive tasks to the external environment without a clear reason.

That is why the switching schedule is built around the business operating mode, not around the "favorite" model. It is most convenient when routing lives separately from the application: during the day, sensitive and urgent requests go to internal models, and at night part of the batch load goes to external providers without rewriting code.

When the internal environment makes sense

The internal environment is not needed "for prestige," but for very practical reasons. The first common case is when you process PII, customer requests, medical data, contracts, or internal documents that security does not want to send outside without a clear reason. Local execution lowers risk and makes control over storage, masking, and auditing easier.

The second case is when you need steady latency during working hours. For an employee chat assistant, form review, or operator suggestions, the difference between a response in 1.2 seconds and 4 seconds is very noticeable. An external LLM API may sometimes look good on average, but real traffic likes unpleasant spikes: provider queueing, an overloaded region, a sudden limit.

There is also a third practical option: your GPUs are idle in the night window. If the hardware is already there and unused from 22:00 to 08:00, it is convenient to run batch jobs on it - document parsing, summarization, classification, knowledge base reindexing. In this setup, nighttime processing often costs less than an external API.

Another case is a model tuned for one flow. If a team solves the same task every day, a general model with a long prompt quickly starts to lose out. A model inside the environment can be more accurate and cheaper if you shape it for a specific format: for example, extracting fields from contracts, routing requests, or labeling complaints.

For companies in Kazakhstan, this often also aligns with data placement requirements. If some scenarios need to stay inside the country, it makes sense to keep those requests on your own infrastructure or on locally deployed open-weight models. This is where a single gateway like AI Router can be useful: it lets you keep local models and external providers under one API, while preserving data inside the country and keeping audit trails for sensitive scenarios.

In general, the internal environment makes sense where repeated and sensitive scenarios stay inside. External models are better connected for rare requests, experiments, and tasks where a wide choice of models matters more than full control over the environment.

When the external API is more profitable

An external API is often cheaper and simpler when load moves by the hour instead of staying flat throughout the day. If you have request spikes in the morning and evening, but quiet periods during the day and at night, your own GPUs will sit idle for a noticeable part of the time. You still have to pay for them.

This option is especially convenient when complex requests appear rarely but require a strong model. For example, 90% of requests can be handled by a standard model, while the remaining 10% need a more accurate contract review, long context, or good performance on mixed Russian and Kazakh. Keeping separate internal infrastructure for such cases is usually not worth it.

Rare scenarios almost never pay for their own servers. If the team runs a batch document check once a week or occasionally turns on an expensive reasoning model for disputed cases, the external LLM API removes unnecessary costs. You pay for actual use, not for waiting for load.

Another advantage is choice. You can quickly connect different models for different tasks without buying new hardware or rebuilding inference. For teams testing hypotheses and not yet committed to one stack, this lowers the risk of choosing the wrong infrastructure.

Operations also benefit. Supporting inference 24/7 is not only about GPUs. It is also about monitoring, queues, rate limits, updates, model degradation, and nighttime incidents. If the business does not need a permanent internal environment for all scenarios, variable and rare load is easier to move outside.

An external API is usually chosen when demand jumps a lot by hour or day, strong models are not needed all the time, rare tasks do not reach a stable volume, and the team does not want to bloat the infrastructure layer for occasional peaks.

In practice, the setup often ends up mixed. The basic flow stays inside, while rare, expensive, or peak requests go outside.

How to split scenarios by schedule

One route for the whole day almost always leads either to extra cost or to slower performance. It is much more practical to split traffic by task type and by time of day. Then daytime dialogs get a fast response, and heavy batch jobs do not interfere with users.

Start by separating online scenarios from background jobs. An online chat dialog, operator suggestions, form validation, or short CRM generation require low latency. Nightly re-evaluation of forms, large-scale call summarization, archive labeling, or quality scoring can usually wait for a window with cheaper or more available capacity.

Then set several time windows instead of one general rule. For example, from 9:00 to 20:00 customer requests can go to the place that handles peak latency better. After 20:00, part of the load can already be moved to the internal environment, especially if it is batch processing, data with storage restrictions, or tasks where an extra second does not matter.

A schedule without thresholds breaks quickly. You need at least simple conditions:

- queue length

- p95 response time

- error rate over the last 5-10 minutes

- budget limit for the current window

A simple logic example: during the day, the online chat goes to the external API as long as p95 stays below 2 seconds and the queue is not growing. If the queue in the internal environment drops below the set threshold, some traffic can be moved back there. At night, batch jobs go inside by default, but if the queue exceeds the limit and the window starts to slip, the system temporarily sends part of the jobs outside.

Manual override is needed too. During a sale, salary day in a bank, or a GPU incident, automation may choose the wrong mode. The team should be able to lock the route for a few hours, stop background processing, or, on the contrary, offload batch jobs to the external environment.

Even if you have one gateway and one endpoint for the application, the schedule must be tested on real load, not on average monthly traffic. The average number almost always looks better than the peak hour.

What to measure before switching

Switching traffic by schedule blindly is expensive. If you do not have numbers for each scenario, the picture quickly becomes strange: at night the internal model sits idle, during the day the queue grows, and the expensive external API takes requests that a simpler model could have handled easily.

First, split traffic into flows instead of counting everything together. Support, knowledge base search, internal employee chat, document extraction, and long-form generation behave differently. Calculate the price per thousand tokens for each flow separately. Otherwise, the average number will hide where you are really losing money. It is better to separate input and output tokens right away, and if you use prompt caching, account for it separately.

Then look at latency by hour. Average latency is useful, but it almost always misleads. Users notice not the average, but the bad spikes. So track both average and p95 latency for each hour, separately for hosted and the internal environment. If p95 for self-hosted rises twofold from 10:00 to 12:00, the schedule should already take that into account.

Another layer is data. Track the share of requests containing sensitive information: personal data, financial fields, medical data, operational documents. Even if the external provider is cheaper and faster, some traffic simply cannot be sent outside. In Kazakhstan, this is often not a matter of convenience, but of storage and audit rules.

If you run your own models on GPUs, do not stop at the metric "the server is up." You need GPU utilization, queue length, timeout rate, and the number of repeat requests after failures. Sometimes the model itself responds quickly, but the queue in front of it adds another 8-12 seconds. For the user, it makes no difference: they are still waiting.

Another common mistake is sending everything to the strong model. Check where it is really needed. Take a sample of requests and compare the result of the expensive model with a simpler one by answer quality, number of corrections by the operator, and the share of successful scenario completions. Sometimes the complex model is needed in only 15% of cases, and the rest can be handled much cheaper inside.

A good minimum log for each request looks like this:

- scenario type

- hour and day of week

- hosted or self-hosted route

- tokens, price, and latency

- sensitive data flag and final quality result

If external providers and internal models are already brought together in one OpenAI-compatible gateway, these comparisons are easier to make. Then the decision is based not on guesses, but on a clear table: how much each flow costs, where p95 slows down, and which traffic must stay inside.

How to roll out the setup step by step

Start not with the most important function, but with the clearest one. For the first launch, it is better to take a scenario where mistakes are easy to count and results are easy to check. For example, call-center summarization or a draft reply for an operator. If the model gives a weak answer, the employee will notice it immediately, and the business risk stays low.

Next, the goal is not an argument about what is cheaper, but a week of proper hourly measurements. One average metric almost always lies. During the day, the external API may respond steadily, while at night your internal environment sits idle and costs noticeably less. Or the other way around: the internal model handles the night well, but in the morning peak it introduces latency that cannot be ignored.

- Choose one scenario with predictable traffic volume and a simple quality check.

- Collect at least 7 days of hourly metrics: number of requests, average and 95th percentile latency, cost per response, share of manual edits, failure rate.

- Set a simple switching rule. For example: from 01:00 to 07:00 requests go to the internal model, during peak hours - to the external API.

- Send a small share of traffic to this rule, for example 5-10%, and keep the rest on the current option.

- Compare the two branches on three things: cost, speed, and answer quality. If even one parameter goes outside your tolerance, return to the old setup.

It is better to write down limits in advance rather than invent them after the test. For example: latency no higher than 2.5 seconds, no more than 1% timeouts, at least 15% savings, and the rate of manual edits does not increase. Then switching stops being a "rough guess" idea and becomes a normal operating rule.

A rollback plan is needed on day one. If the internal model is overloaded, the external provider changes behavior, or quality drops on a new batch of requests, the team should be able to return all traffic in minutes. The fewer places where someone has to change things manually, the better.

A good pilot rarely looks impressive. It simply gives numbers after which it is already clear where to keep a scenario inside and where to pay the external API.

Example for a bank with two load windows

A bank often has two different work rhythms. During the day, when operators answer customers in chat, requests come almost without pauses. People expect a response within seconds, and any delay is immediately noticeable.

In this window, the bank usually keeps the main flow in the internal environment. That is where dialogs with full names, IIN, contract numbers, account balances, and transaction history go. This route helps avoid sending PII outside and keeps system behavior under tighter control.

At night, the picture changes. Operators almost do not write, but the system prepares shift summaries, processes requests in batches, tags complaints, and drafts replies for the morning. A response in one second is no longer needed, so the bank can shift part of the tasks to the external LLM API if the price is lower there or the quality is better on long summaries.

The setup looks simple:

- during the day, the internal environment handles customer chat with personal data

- at night, batch jobs move to a cheaper or stronger external model

- rare complex questions from operators that need deeper analysis are escalated directly to the external API

Imagine a typical case. A customer asks why a payment did not go through and why the fee changed. The dialog contains personal data, so the internal model handles it. But if the operator adds an open-ended question like "explain the possible causes across the whole transaction chain and suggest a reply to the customer," the system may send an anonymized summary to the external API. That way, the bank does not send the whole flow outside and uses the external resource only where it is truly needed.

In the morning, the team looks at three things: how much the overnight run cost, where errors increased, and how often operators escalated a question to the external route. If the escalation share is growing, the internal model can no longer handle part of the tasks. If nighttime cost spikes upward, the routing rule should be reviewed.

Where teams most often make mistakes

Problems rarely start with the model itself. Usually the team breaks the switching logic. Yesterday one scenario went to the external API, today it was sent to the internal environment by schedule, and along with it another ten neighboring tasks were moved. After that, it is no longer clear what exactly failed: limits, latency, answer quality, or data format.

The most common mistake is changing everything at once. If you move summarization, support chat, and internal search in one day, the cause of the incident gets lost. It is better to move one scenario at a time and keep a control group for at least a week.

The second mistake seems small, but it hurts badly: the team looks only at token price. On paper, internal execution may look cheaper, but downtime during a peak hour quickly eats up that difference. If employees wait 20-30 seconds for an answer instead of 3-5, the business pays not only for GPU or API, but also for lost human time.

Another miss is averaging load and forgetting the worst hour of the day. The internal environment often runs fine until the first sharp spike. For example, in the morning and after lunch, request traffic may grow by almost half. If capacity was not reserved in advance, the switching schedule will only make the queue worse.

Teams often build routing only by time and forget about data type. That is a bad compromise. The same 19:00 slot can be safe for anonymized requests and unsuitable for dialogs with personal data. Time is only one sign. The second sign is what exactly you are sending, where, and in what form.

There is also a quiet mistake that is noticed late: quality is checked on short tests. The model passes ten simple requests, and the team decides the setup works. But long dialogs behave differently. There, context is lost more often, latency rises, and strange answers appear on the sixth or eighth message.

One gateway and a base_url swap greatly simplify the launch, but they do not replace testing. The same route should be tested separately for short tasks, long chains, and peak hours. Otherwise you will get convenient switching but inconvenient incidents.

Quick check before launch

Before turning on the schedule, check not the idea, but the numbers for each time slot. Morning and daytime often look one way, nighttime a completely different way. If you do not have that breakdown, the schedule will quickly become a guess.

First, compare cost by hour. An external API is often more profitable in low or uneven load windows, when keeping your own GPUs just for rare requests is too expensive. Count not only tokens, but also the idle time of your own infrastructure.

Then check peak latency. The internal environment makes sense where queueing on the external provider or the network adds extra seconds. For chats, operator suggestions, and antifraud, this is noticeable immediately.

Separate traffic by data as well. If some requests contain PII, banking data, or internal documents, the routing rule should account for that separately from price. Such scenarios need a clear route, audit logs, and masking of sensitive fields.

Do not collapse cost, answer quality, and errors into one average number. If the model became cheaper but failures increased or quality dropped on real tasks, the savings disappear quickly.

And finally, check rollback. The team should be able to quickly return to the old route if the external provider starts failing or the internal model cannot handle the load. It is better when this is done with one switch at the gateway level, rather than edits across several services.

If at least two of these points are still handled manually, do not put the schedule into production right away. First collect a week of proper measurements, then enable the rule for one scenario, and only after that expand it to the rest of the traffic.

What to do next

Do not move the whole platform at once. Take one scenario where the schedule is already obvious without debate. For example, nighttime batch document processing or a daytime employee chat with a strict latency limit.

That kind of start is easier to defend to both the team and finance. You will understand faster whether the setup brings real savings or just adds unnecessary rules.

Put the decision into one table. Without it, confusion almost always starts: one team thinks about cost, another about data, a third about SLA. The table should contain specific conditions for each load window:

- scenario operating time

- data type and whether it can be sent to the external API

- acceptable latency and minimum SLA

- budget limit per day, week, or 1,000 requests

- default route and fallback route

After that, check the most practical thing: can the route be changed without editing client code? If every switch requires a new release, the setup will quickly become a burden. It is much more convenient when the app uses the same endpoint and the model selection rules live separately.

If you have both an external API and an internal environment, a single entry point makes life much easier. For teams in Kazakhstan, this is especially useful when you need to combine access to external models with local data placement. In that case, AI Router can cover both sides of the setup through one OpenAI-compatible endpoint: external providers, locally deployed open-weight models, audit logs, PII masking, and routing without replacing the SDK, code, or prompts.

Then run a short pilot on one scenario. Two weeks is usually enough to see the picture without guessing. Compare four things: cost, latency, error rate, and the number of times the fallback route had to be enabled.

If the numbers do not change, do not complicate the architecture. If nighttime traffic consistently moves to the internal environment at a lower cost, and daytime traffic meets the SLA through the external API, take the next scenario and repeat the same setup with a new rule table.

Frequently asked questions

When does it make sense to keep a model in the internal environment?

Keep a scenario inside when requests contain personal data, internal documents, or operational fields, and also when you need a steady response during working hours. Such a setup is easier to control for data storage, masking, and audit logs.

It also makes sense if you have a repeated task with a clear format, such as extracting fields from contracts or labeling complaints. For that kind of flow, an internal model is often cheaper and more stable.

When is an external API more cost-effective than self-hosted?

An external API usually wins where load swings heavily by the hour and an expensive model is needed only sometimes. You pay for actual usage, not for GPUs sitting idle.

This option is convenient for rare complex requests, experiments, and nighttime tasks without strict data storage requirements. It is also useful when the team wants a wide choice of models without supporting inference 24/7.

Should hosted and self-hosted be switched by time of day?

Yes, and in practice this is often the calmest option. Daytime online requests can go to the place with better latency, while nighttime batch jobs can go to the place with a lower cost per volume.

Time alone does not solve everything. Also look at the data type, queue length, p95 latency, and the budget limit for the current window.

What should be considered besides time when choosing a route?

One schedule is not enough. First separate online scenarios from background jobs, then add simple thresholds for queue length, p95 response time, error rate, and budget.

Without those conditions, the setup breaks quickly: users wait during the day, and batch jobs miss the morning deadline at night. It is better when the app uses one endpoint and the model selection rules live separately.

What metrics are needed before the first switch?

Count cost for each scenario separately, not for the whole system at once. Support, knowledge base search, document processing, and long-form answers behave differently.

Also watch p95 latency by hour, error rate, input and output token volume, the presence of sensitive data, and the queue in front of the model. The average value almost always hides the problem.

How can this be launched without unnecessary risk?

Start with one clear scenario where quality is easy to check and mistakes are easy to see. Good options are call-center summarization, a draft reply for an operator, or simple classification.

Collect at least a week of hourly measurements, turn on the new rule for only a small share of traffic, and set limits for latency, cost, and manual edits in advance. That way you will know whether the setup brings value or just adds noise.

How should requests with personal data be routed?

Requests with PII, banking fields, medical data, and internal notes should be marked and routed by a separate rule right away. For these cases, price should not outweigh storage, masking, and audit requirements.

In many cases it is wiser to keep such scenarios inside the country and within your own environment. Anonymized text and batch jobs without sensitive fields can then be considered for the external API.

Do you need a rollback plan if the schedule is simple?

Yes. Without it, the setup quickly becomes a source of nighttime incidents. If the internal model hits a queue or the external provider starts failing, the team should be able to restore the old route within minutes.

It is better when rollback is handled by the gateway rather than by several services manually. That way you do not touch the client code and do not stretch the incident across the whole stack.

How do you know the internal model is no longer handling the load?

The first signal is not the average, but a rising p95 latency during peak hours. After that, timeouts, repeat requests, and a long queue in front of the model usually follow, even if the model itself responds quickly.

Also watch business signs: operators edit answers more often, escalations increase, and batch jobs no longer fit the window. That means the current route needs to be reconsidered.

Why do you need a single gateway for hosted and self-hosted models?

A single gateway removes extra work during switching. The app keeps using the same OpenAI-compatible endpoint, while the team changes routing rules separately from the code.

This is convenient for a mixed setup where part of the traffic goes to external models and part stays on local open-weight models. For teams in Kazakhstan, this approach also helps combine local data storage, auditing, and access to different providers through one API.