SQL Agent Without Risk to the Production Database: Read-Only and Limits

SQL agent without risk to the production database: how to set up read-only access, a SQL query allowlist, timeouts, and quick checks before launch.

Why an SQL agent is dangerous without boundaries

The phrase "SQL agent without risk to the production database" only sounds believable when the agent has strict boundaries. Without them, it tries to answer the question at any cost. For a model, that is normal. For a live database, it is not.

The problem is that an agent rarely stops at one table. A person asks: "Why did revenue drop yesterday?" The agent may look not only at sales, but also at returns, discounts, users, app events, and system logs. It builds the full picture instead of protecting the database. One question quickly turns into a series of heavy queries.

Even one mistake is expensive. If the agent forgets the date filter or chooses the wrong table, it reads extra data. Sometimes that means millions of rows. Sometimes it means personal data that was never supposed to be touched at all. Even read-only access does not remove the risk: load goes up, connections get tied up, and the application's regular queries start running slower.

The difference between normal and dangerous behavior is usually simple:

- Normal: a narrow SELECT on known tables, with a date filter, LIMIT, and a clear goal.

- Bad: SELECT * without conditions, a JOIN "just in case," reading system tables, scanning the full history.

- Very bad: UPDATE, DELETE, INSERT, ALTER, DROP, and any commands that change data or schema.

Production suffers right away. First, speed drops: the database spends CPU and memory on the agent's heavy query, and the user application responds more slowly. Then come timeouts, connection queue buildup, and complaints from a team that did not even know the analytics agent had launched something.

There is also a less visible risk. The agent may answer confidently, but on extra or unsuitable data. For example, it may join orders and sessions with a bad JOIN condition and show fake conversion growth. The mistake is not obvious right away, because the query technically ran.

In banking, retail, or telecom, this is especially painful. Sensitive fields often sit next to the metrics there, and extra load quickly hurts live services. That is why read-only access alone is not enough. If there is no SQL query allowlist, no time limits, and no restrictions on read volume, the agent has been given too much freedom.

A good SQL agent should not be bold. It should be boring, predictable, and suspicious of every query it writes.

What restrictions you need from the start

The first rule is simple: the agent changes nothing in the production database. It gets read access only, and you immediately remove any commands that carry risk: INSERT, UPDATE, DELETE, TRUNCATE, ALTER, DROP, and other DDL operations. If the agent makes a wording mistake, it should hit a block, not your data.

But that is not enough. You should not open the whole database to the agent even in read-only mode. For analytics, one or two schemas and a few tables or views are usually enough. If you leave access to everything, the agent will start visiting system tables, archives, and data that is not needed for the answer at all.

A good practice is to create a separate user with narrow permissions. Such a user, for example, sees only reporting.sales, reporting.orders, and a couple of reference tables. If the database has personal data, it is better to hide extra fields right away or give the agent separate views with already cleaned-up structure.

You also need a hard limit on volume. The agent should live with limits on rows and response size. Otherwise, even a normal SELECT * on a large table will pull hundreds of thousands of rows, clog the channel, and give the model too much noise instead of a result.

Usually four restrictions are enough: no more than 100-1000 rows in one response, no SELECT * for wide tables, a limit on the number of columns, and trimming overly long text fields.

Another required barrier is a timeout for each query. If a query runs longer than a few seconds, the system should stop it and return a clear error. This protects the database from heavy scans and accidental queries without filters.

A normal starting scenario looks like this: an analyst asks for revenue by region for last month, the agent goes only to allowed tables, selects the needed fields, gets up to 500 rows, and finishes in 3-5 seconds. If it tries to do a JOIN with a huge archive or pull the entire reference table, the system cuts it off by time or by volume.

Teams often make the same mistake: they spend a long time discussing prompts and barely touch SQL-side restrictions. That is the wrong order. First permissions, limits, and allowlist. Then query wording quality.

How to set up read-only access

Read-only access is best configured as if the agent will definitely make a mistake one day. Then even a bad query will not be able to delete rows, update an order status, or accidentally launch a heavy system function.

Start with a separate role for read-only access. Do not give the agent an analyst login, a backend account, or the team's shared password. The agent should have its own role, its own secret, and its own set of permissions. That makes it easier to see who accessed the database and easier to shut access down quickly if something goes wrong.

It is better to give the agent views instead of raw tables. In a view, you can remove extra fields in advance, hide personal data, and keep only the columns needed for answers. If the sales team asks about revenue by region, the agent does not need direct access to the table with customer profiles and internal comments.

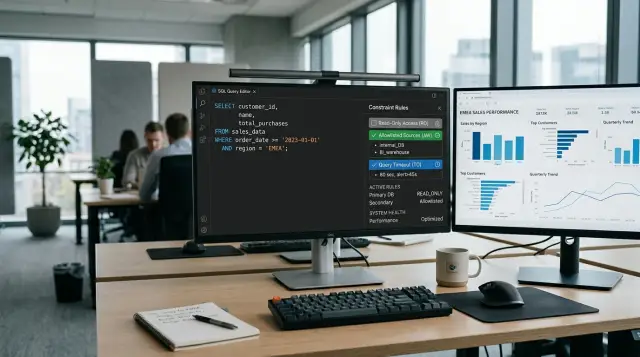

A basic permission scheme usually looks like this:

- the agent role gets USAGE only on the needed schemas;

- SELECT is granted only on prepared views;

- access to source tables is closed;

- EXECUTE on functions is checked separately and is almost always disabled;

- creating tables, temporary objects, and writing to the database is forbidden.

Function permissions should be checked separately. This is a common hole. Formally, the role may be read-only, but an old function with owner rights can provide a bypass to data or even to record changes. If the agent does not need a function, it should not have access to it.

Another weak point is old credentials. Teams often launch the agent on a new read-only user, but the config still contains the old password for a service account with broad rights. The agent does not bypass anything itself, but the application can easily do it for it if it keeps several connection strings and picks the wrong one.

Before launch, it helps to check four things: the agent connects only under the new role, there are no old accounts in secrets and environment variables, this login cannot read raw tables, and a query on behalf of the agent cannot call a dangerous function.

How SQL query allowlisting works

An SQL agent allowlist is a set of clear rules: which queries the agent may build and send to the database, and which ones the system rejects immediately. This approach is more reliable than filtering dangerous SQL by words like DELETE or DROP. You define normal behavior in advance, and everything else is considered forbidden.

For analytics, SELECT and a few simple operations on top of it are usually enough: COUNT, SUM, AVG, MIN, MAX, GROUP BY, ORDER BY, LIMIT. If the team does not need subqueries, UNION, or window functions, do not open them "just in case." The narrower the boundaries, the fewer surprises.

A good allowlist works on three levels. First, you define the permitted tables. Then the fields the agent can read. After that, you describe the allowed JOINs: which tables can be joined and under what conditions.

For example, you can give the agent access only to orders, customers, and regions. In customers, allow customer_id and segment, but not phone and email. Open JOIN only on orders.customer_id = customers.customer_id. Then a revenue-by-segment query will pass, while an attempt to pull extra personal data will hit a rule before the database is even touched.

Another layer of protection is removing constructions that analytics often does not need but that often break boundaries. Usually comments, UNION, multiple expressions in one query, DDL, DML, dangerous function calls, and access to system schemas are blocked. Comments are worth stripping too: people hide filter bypasses and junk in them that break simple control.

The most common mistake is checking SQL with a simple string search. That is a weak spot. The agent can bypass such a filter with spaces, case changes, comments, or nested structures. It is better to parse the query, get the AST, and then check the operation type, table and column list, JOIN type, join condition, allowed functions, and number of expressions by structure.

If a query fails even one rule, the system should not try to run a "nearly suitable" version. It is better to return a clear error immediately and ask the agent to build the SQL again within allowed boundaries. That keeps analytics useful and stops the production database from becoming a test ground.

Controlling execution time and response size

Read-only access does not help if the agent can run heavy queries without limits. One bad JOIN on a large table or a query without filters can easily consume database resources and slow down live services. That is why execution time and response size are better limited from the start, not after the first failure.

A short timeout is needed for each run. Not for the whole session, but for each separate SQL query. For analytics questions, a few seconds is often enough. If the agent does not make it in time, the system should stop the query, return a clear error, and not keep pushing the database with retries.

LIMIT is also needed almost always, even when the question seems simple. A user writes: "Show the latest orders with payment errors," and the model can easily build a query that pulls thousands of rows instead of 50. For the first answer, it is better to return a small slice and then refine the query. That way the analyst sees the picture faster, and the database is not working for nothing.

Usually these rules are enough:

- set a 3-10 second timeout for one query;

- add a default

LIMIT, for example 100 or 500 rows; - stop long queries during peak hours;

- log the question, SQL text, and the reason for stopping it.

Logs are often underestimated. They are not only for incident analysis. They show which questions the agent misunderstands, which tables it touches too often, and where the prompt itself pushes the model toward heavy queries.

A simple example: an analyst asks to compare sales by region for a year. The agent builds a query with extra fields and sorting over a large result set. The timeout cuts it off after 5 seconds, LIMIT prevents extra rows from being returned, and the log saves the original question and SQL. After that, the team removes the unnecessary sorting and adds a narrower query template.

It is better to treat such restrictions as a normal part of the architecture. This is not a backup brake, but a basic layer of protection.

How to launch it step by step

Start narrow. Do not give the agent access to all tables and all questions at once. Pick one report that the team already builds manually, and one data source for it.

A good first scenario looks boring, and that is a plus. For example, a daily sales report from one replica of the database, where you need only a few tables, clear filters, and a daily total. The narrower the task, the easier it is to see where the agent makes mistakes.

Then build the allowlist not for "all analytics," but only for that scenario. Allow reading from a specific schema, keep the needed fields, and decide in advance which query types are allowed. Usually simple SELECTs with a date filter, grouping, and a row limit are enough.

Next, run test questions on a copy of the data or on a safe replica. Use not only normal queries, but also awkward ones: vague wording, overly wide dates, attempts to request extra fields. Watch three things: what SQL the agent generates, how long the query takes, and how many rows it returns.

A practical order usually looks like this:

- One report, one source, one group of tables.

- A narrow allowlist for that report.

- Tests on a copy of the data with real team questions.

- Manual approval for each query at the first stage.

- Access for a small group of users, not the whole company.

Manual approval at the start almost always pays off. An analyst or engineer looks at the SQL before it runs and quickly catches strange constructions: an extra date range, an unexpected JOIN, or a query without LIMIT. After a week of such checks, it usually becomes clear what needs to be restricted more tightly and what can already be opened.

When the scenario behaves calmly, give access to a small group. 3-5 people is a good fit: people who will actually use it every day and leave useful feedback. A full rollout only gets in the way here. First you need a clean query log, clear errors, and a few allowlist iterations.

If the first scenario runs without surprises for two or three weeks, add the next one. Not earlier.

Example for an analytics team

A sales manager writes in chat: "Show sales by region for the last 7 days." For a person, that is a normal question. For an SQL agent, it should be a narrow and predictable query, not a reason to wander through the whole production database.

That is why the agent is not given access to raw tables of orders, customers, and payments. It reads only one view that already contains the needed fields: date, region, sales amount, and number of orders. That is enough to answer quickly and without extra risk.

The query is simple: select data for the last 7 days, group by region, and return the total. The agent does not see personal data, does not touch a year of history, and does not go into neighboring tables "just in case."

In practice, such a scenario usually relies on four rules: access to one analytics view only, a required date filter, a row limit, and a short timeout.

If the agent accidentally tries to pull too many rows, the protection kicks in immediately. For example, it builds a query without a date filter or asks for detail by every store, product, and day. The system either adds a hard limit or cuts the query off before a response. The user gets a short message, not a frozen database.

Sometimes the question is too broad from the start. A manager writes: "Compare all sales and find where there are deviations." A normal agent does not guess. It asks for the period, metric, and dimension: regions, stores, or categories.

That is exactly how a safe agent should behave: read only what is needed, not pull extra data, and ask a follow-up question if the request is vague.

Common mistakes

On paper, everything looks simple: give read-only access, add a few rules, and let the agent go to work. In practice, teams usually make mistakes in the basics.

The first trap is giving the agent the same role that an analyst already has. A person usually knows which tables are better left alone and often notices a strange query. An agent does not think that way. If a role has access to dozens of schemas, system tables, and old data marts, it will start going everywhere the door is open.

The second mistake is relying on read-only as full protection. It does not damage data, but it can easily pull extra information. If the team needs one order report, there is no reason to open the whole sales schema, CRM, and support at the same time. It is better to give access to one data mart or a few views with fields already hidden.

The third mistake is letting SQL through as is, without proper parsing and without an allowlist. Then the agent may build a heavy JOIN, go into system tables, or send a query nobody planned. Even a strong model sometimes takes a strange path if the user's question is vague.

The fourth mistake costs money and nerves: teams forget about LIMIT, timeout, and response-size caps. One bad query against a large table can take minutes, fill up the connection pool, and return so many rows that nobody will ever read them.

There is also a quiet problem that people notice late. They look only at the agent's nice answer and almost never open the logs. But you need to look not just at the final text, but at the SQL itself, execution time, number of rows, rejected queries, and attempts to go beyond the allowed tables.

A good sign of a mature setup is easy to check: the agent works only under a separate read-only role, sees not the whole schema but the needed views, the service cuts queries by allowlist, LIMIT, and timeout, and the team regularly checks logs instead of only answers.

A small example. Analysts need a daily sales report. The team opens the full working role to the agent because it is faster. A day later, it answers ten questions correctly, and on the eleventh it builds a query against a huge raw table without LIMIT. The database does not crash, but the report freezes, the dashboard slows down, and the reason is visible only in the logs. Such failures are easier to prevent than to hunt down later.

Short pre-launch checklist

Before the first launch, check access and limits, not the prompt. If they are missing, the agent quickly turns into a regular source of problems: extra data, heavy queries, and long post-incident investigations.

You only need to go through five points:

- The agent has a separate role for read-only access. It sees not the whole database, but only the needed tables or, better yet, prepared analytics views.

- PII data is closed by default. Names, phone numbers, IIN, addresses, and other sensitive fields are opened only for a specific scenario.

- Every query gets a hard LIMIT and a timeout.

- Logs save the original question, generated SQL, execution time, and the error text.

- The team has already assigned a person or on-call role that can disable access if something fails.

It is useful to check this with a simple test. Let the agent answer three normal questions, one question that tries to request extra fields, and one heavy query without a filter. If it stays within limits everywhere, trims the response size, and writes clear logs, the launch already looks reasonable.

If even one item is still not covered, do not put the agent into production. First narrow access, turn on limits, and agree on the response to a failure. That is the boring part of the work, but it is what saves hours and nerves later.

What to do next

Do not open the whole database and all question types to the agent at once. The working path is simpler: take one scenario, get it to run calmly, then add the next one. That makes it easier to see where the logic breaks, where queries go out of bounds, and where people need more data.

The usual order is this: first give access to 1-2 safe data marts or views, then test 20-30 real questions from analysts, then add one more scenario, and only after that expand the allowlist and the set of tables.

This launch looks less impressive than a big rollout, but it is almost always more reliable. If the agent confidently answers questions about daily sales or order statuses, that is already a working result. There is no need to let it into finance, personal data, and raw tables on day one.

Also check where PII masking is needed and who reads the audit logs. IIN, phone, email, address, contract number - all of this is better to close in advance, not after the first questionable report. It is useful to agree right away on what trace remains after each query: who ran it, which table it accessed, how many rows it got, and how long it took.

If limits and logs live in different places, the team quickly loses control. It is more convenient when model access, audit, and key-level restrictions are collected in one layer, while database rules remain in the DBMS itself. For such a setup, AI Router on airouter.kz can be useful as a single LLM API gateway: it makes it easier to centralize audit, rate limits, and model usage, but read-only, allowlist, and SQL limits still need to be configured close to the database.

The idea of a safe SQL agent does not rest on one read-only user. It rests on discipline: small scope at the start, a clear activity log, strict limits, and a slow expansion of access. This approach rarely looks impressive in a demo, but it does not create extra problems in production.