Patient consent for LLMs in a clinic: what to record

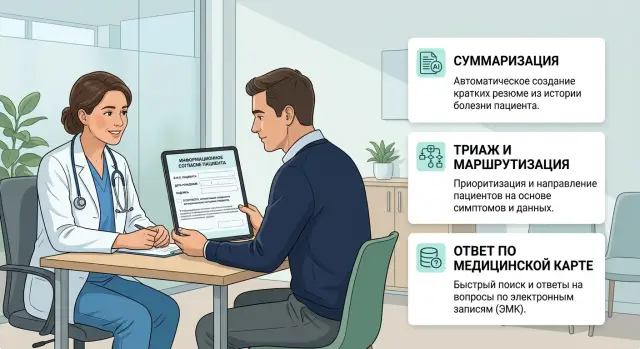

We explain how to document patient consent for LLM use in a clinic: what to record before summarization, triage, and chart-based answers.

Why one general signature is not enough

A standard signature for treatment does not cover everything a clinic does with patient data through an LLM. Treatment and data processing are different things. A patient may agree to an appointment, tests, and charting, but that does not mean they understood and approved chart summarization, automated triage, or system-generated answers based on their history.

The problem is not the paperwork. These actions have different goals and different risks. If the clinic combines them into one broad item, the patient does not see the difference between a situation where the doctor uses the visit note and a situation where the model reads the chart and prepares a draft reply. In medicine, that is too crude.

Summarization usually compresses already known data so the doctor can quickly see complaints, test results, and past prescriptions. The risk here is a missed detail or wording that changes the meaning.

Triage works differently. The system sorts requests by urgency, suggests the patient path, and may advise whether an in-person visit is needed. A mistake affects not only the text, but also how quickly the clinic responds.

Answers based on the medical record need separate consent for the same reason. When a patient asks, "What prescriptions did I have last month?" or "What did the doctor write after my last visit?", the system does more than just read the chart. It selects fragments, rewrites them, and with weak rules may say more than it should.

One broad item almost always hides four questions: what data the system reads, what task it uses that data for, who checks the result, and when the doctor must step in manually.

The patient needs simple text without bureaucratic language. They should understand that the system prepares a draft, a suggestion, or a preliminary assessment, not a diagnosis on its own. If the answer or triage affects scheduling, urgency, or a message to the patient, that needs to be stated clearly.

The doctor also needs more than one general signature. They need to see the boundary of responsibility. If the model prepared a summary or answer, the interface should show that the text was generated, what it was based on, and where manual review is needed. Otherwise, the doctor gets a neat paragraph without a clear source.

Even if the clinic stores data inside the country, keeps audit logs, and masks PII, a broad signature alone does not solve the problem. Technology reduces some risks, but consent still needs to be tied to a specific scenario.

How the three scenarios differ

Summarization, triage, and chart answers have different goals, different error risks, and different data volumes. That is why consent for an LLM in a clinic should not be collected with one phrase for every case.

Medical chart summarization compresses an existing record: doctor notes, discharge summaries, and sometimes a transcript of a conversation. Usually the model does not make decisions for the doctor; it helps build a clear text quickly. The main risk here is not urgency, but accuracy. The system may miss a detail, mix up a dosage, or confidently add something that was never in the record. For this scenario, the clinic usually needs access only to materials from the current episode and a required human review of the result.

Triage in a clinic works differently. It sorts requests by urgency and suggests who needs a quick response, an in-person exam, or an ambulance call. Here, the mistake is more dangerous because of time: if the system underestimates urgency, the patient may get help too late. At the same time, triage often does not need the full chart. A complaint, age, a few symptoms, and sometimes data about chronic conditions are enough. The consent should state separately that this is a preliminary assessment, not a diagnosis, and that alarming signs will route the case to a doctor.

Answers based on the medical record are the third and usually the most sensitive scenario. The system searches past visits, test results, prescriptions, and discharge notes to answer a question like "When was HbA1c last elevated?" or "Which antibiotic was already prescribed?" This requires the broadest access to data, and therefore the strictest control: who asks the question, which parts of the chart can be read, whether the sources are shown, and whether the request is logged.

The difference is easy to keep in mind. Summarization changes the form of the record, but not the treatment goal. Triage affects urgency and the patient path. Chart answers open up a large amount of past data.

That also means different controls. For summarization, doctor review matters most. For triage, escalation thresholds and a ban on independent medical conclusions matter most. For chart answers, access boundaries, masking of unnecessary personal data, and auditing matter most.
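One way to keep these differences from blurring is to write the required controls down as data and check them before each launch. The sketch below is a minimal Python illustration, not a standard: the scenario keys, the control names, and the `missing_controls` helper are hypothetical names chosen to mirror the paragraph above.

```python
# Hypothetical control names per scenario, mirroring the text above.
REQUIRED_CONTROLS: dict[str, set[str]] = {
    "summarization": {"doctor_review", "current_episode_only"},
    "triage": {"escalation_thresholds", "no_independent_conclusions"},
    "chart_answers": {"section_access_limits", "pii_masking", "request_audit"},
}


def missing_controls(scenario: str, implemented: set[str]) -> set[str]:
    """Return the required controls for a scenario that are not implemented yet."""
    if scenario not in REQUIRED_CONTROLS:
        raise ValueError(f"unknown scenario: {scenario}")
    return REQUIRED_CONTROLS[scenario] - implemented


print(missing_controls("triage", {"escalation_thresholds"}))
# prints {'no_independent_conclusions'}
```

A launch review could simply refuse to enable a scenario while this set is not empty.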

What to record before summarization

Before sending a chart to the model, the clinic should record the exact data set and the task boundaries, not a "general consent for AI." This is especially important for summarization: the system can easily receive more than it needs if the team has not described the document set in advance.

It is better to tie consent to specific sources. The clinic should state which data will be processed: discharge summaries, lab results, exam notes, prescriptions, visit history. It should not silently include everything. If passport data, contact details, insurance fields, or bills are not needed for a draft summary, they should not be sent.

It is also useful to define the level of detail. Sometimes the clinic needs a summary of just the latest hospitalization, not the entire history. Sometimes structured fields are enough, while free-text doctor notes are better excluded. This should be visible both in the consent form and in the internal policy.

In practice, the clinic usually defines separately which documents and fields may be sent to the model, which time period is used, who sees the draft, and who approves the final version.
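A minimal sketch of how the document, field, and period decisions might be enforced before anything reaches the model. It assumes a hypothetical `ChartDocument` shape and hypothetical document and field names. The design choice worth copying is the allowlist: a field the clinic did not explicitly approve is dropped, so an insurance or billing field added to the chart later does not leak by default.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical allowlist: document type -> fields that may reach the model.
# Anything not listed (passport data, contacts, insurance, bills) is dropped.
ALLOWED_FIELDS: dict[str, set[str]] = {
    "discharge_summary": {"text"},
    "lab_result": {"test", "value", "unit"},
    "exam_note": {"findings"},
    "prescription": {"drug", "dose"},
}


@dataclass
class ChartDocument:
    doc_type: str
    created: date
    fields: dict[str, str]


def build_summary_payload(
    docs: list[ChartDocument], start: date, end: date
) -> list[dict[str, str]]:
    """Keep approved document types dated within [start, end], and only approved fields."""
    payload = []
    for doc in docs:
        allowed = ALLOWED_FIELDS.get(doc.doc_type)
        if allowed is None or not (start <= doc.created <= end):
            continue  # unapproved document type or outside the agreed period
        kept = {k: v for k, v in doc.fields.items() if k in allowed}
        payload.append({"type": doc.doc_type, "date": doc.created.isoformat(), **kept})
    return payload
```

For example, a lab result carrying an `insurance_policy` field keeps only its test, value, and unit, while a billing document or a prescription from outside the period is not sent at all.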

The draft summary should never circulate on its own. Both patients and staff should understand that it is a working draft, not a final medical conclusion. Usually, the attending doctor sees it first, and sometimes a senior doctor or a quality control staff member does too. One person should be responsible for editing and confirming the result.

Free-text doctor notes and attachments require a separate decision. Notes often contain assumptions, personal shorthand, family details, or phrases that the model may take too literally. If the clinic wants to include such notes, that should be stated clearly. The same applies to attachments: the clinic should decide in advance whether PDFs, scans, images, and audio transcripts may be sent, and what to do with the OCR version of the document.

Storage location matters too. Keep the draft and the final summary separate. The final summary is usually saved in the medical record as a separate note marked with who reviewed it. The draft should be kept for a limited time and deleted according to the internal retention period. If the clinic uses a separate LLM gateway, it should also define where the audit logs live, whether PII is masked, and whether traffic stays inside Kazakhstan. Otherwise, these questions will turn into disputes only after launch.
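The retention rule for drafts can be expressed as a small purge job. In this sketch the 30-day period, the `StoredText` record, and the `ids_to_purge` helper are assumptions for illustration only. Final notes are never selected, because they belong to the medical record rather than to the draft store.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Assumed retention period for drafts; the real value comes from internal policy.
DRAFT_RETENTION = timedelta(days=30)


@dataclass
class StoredText:
    text_id: str
    kind: str  # "draft" (model output) or "final" (doctor-approved note)
    created_at: datetime  # keep all timestamps consistently naive or timezone-aware


def ids_to_purge(items: list[StoredText], now: datetime) -> list[str]:
    """Select drafts older than the retention period; final notes are never selected."""
    return [
        item.text_id
        for item in items
        if item.kind == "draft" and now - item.created_at >= DRAFT_RETENTION
    ]
```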

What to record before triage

Before triage, a clinic needs more than a broad phrase like "I agree to data processing." It should clearly record what the patient enters into the form or chat, why the system reads it, and where the line is between routing and a medical decision.

The first question is the data set. The patient should see which details they enter themselves: complaints, symptom duration, temperature, pain, blood pressure, age, pregnancy, chronic conditions, medications, allergies. If there is a free-text field, that should also be stated. Otherwise, the person may write something extra: policy number, address, someone else’s data, or details the clinic does not need for triage.

It is also worth deciding whether the clinic accepts only structured input or also free-form text. That directly changes the risk of error. A short form with clear fields is usually safer than a long dialogue where the patient writes everything in one go.

The boundary of triage

The consent and the text shown before the scenario should explain the triage purpose in simple words. If the system is only for routing, say so: it helps decide where to send the patient, how quickly the clinic should respond, and whether a live staff member is needed right now. It does not make a diagnosis and does not prescribe treatment.

This is not a small detail. If the clinic uses triage to sort requests, the patient should not take the model’s answer as a medical conclusion. The phrase "preliminary urgency assessment" sounds more honest and clearer than a vague promise that "a smart assistant will tell you what’s wrong."

When the model does not answer

The clinic should define in advance the cases where the system stops the conversation and instead calls a staff member or suggests emergency help. These rules should not be buried in policy text; build them into the scenario itself and into the consent text.

For example, automatic mode should be turned off if the patient reports chest pain, shortness of breath, loss of consciousness, signs of stroke, heavy bleeding, suicidal thoughts, seizures, a sudden decline in a child, or simply cannot describe their condition clearly.
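A minimal sketch of such a stop rule placed in front of the model. The phrase list is illustrative only and is not a clinically validated red-flag set: plain substring matching misses paraphrases, typos, and other languages, so a real deployment would need clinician-approved rules and should still err toward handing the case to a person. `TriageInput` and `must_escalate` are hypothetical names.

```python
from dataclasses import dataclass

# Illustrative phrases only, NOT a clinically validated red-flag list.
RED_FLAGS = (
    "chest pain",
    "shortness of breath",
    "lost consciousness",
    "fainted",
    "stroke",
    "heavy bleeding",
    "seizure",
    "suicid",  # matches "suicide" and "suicidal"
)


@dataclass
class TriageInput:
    complaint: str
    child_declining: bool = False  # e.g. a checkbox on the triage form
    can_describe_condition: bool = True


def must_escalate(inp: TriageInput) -> bool:
    """True if automatic mode must stop and a staff member or emergency help is needed."""
    text = inp.complaint.lower()
    if any(flag in text for flag in RED_FLAGS):
        return True
    return inp.child_declining or not inp.can_describe_condition
```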

That way, the patient understands that automated triage has limits. That matters more than any polished wording.

Another issue is the conversation history. It can be stored for error review, staff training, and AI audits, but that purpose also needs to be described separately. State clearly the retention period, who can access the history, whether the clinic removes PII, and whether parts of the conversation are used in manual review.
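If the clinic does remove PII before storing a transcript, a regex pass is a simple baseline. This sketch assumes three patterns (email addresses, +7/8 phone numbers, and 12-digit national ID numbers such as Kazakhstan's IIN). It will not catch names, addresses, or details written in free form, so it complements manual review rather than replacing it.

```python
import re

# Assumed patterns; IDs are masked before phones so 12-digit numbers are handled first.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
NATIONAL_ID = re.compile(r"\b\d{12}\b")  # 12-digit IDs such as Kazakhstan's IIN
PHONE = re.compile(r"(?:\+7|\b8)[\s(-]*\d{3}[\s)-]*\d{3}[\s-]*\d{2}[\s-]*\d{2}\b")


def mask_pii(text: str) -> str:
    """Replace obvious identifiers with placeholders before a transcript is stored."""
    text = EMAIL.sub("[EMAIL]", text)
    text = NATIONAL_ID.sub("[NATIONAL_ID]", text)
    return PHONE.sub("[PHONE]", text)
```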

If the clinic wants to keep the chat only for service quality, it should not automatically expand the purpose of processing. For the patient, these are different things: getting a route based on symptoms now versus allowing the conversation to be stored for months for internal review.

A good formulation here is short: what symptoms the person enters themselves, that the system only routes, when a staff member joins, and whether the conversation is saved for error review.

What to record before chart answers

When the system answers questions based on the medical chart, the clinic should define the boundaries in advance. Otherwise, the patient may ask about one thing, and the model may go too deep, give away too much, or say something the doctor should say.

For this mode, consent is better collected separately, not hidden in a general data processing form. In the consent record, the clinic should capture not only the fact of consent, but also the set of permissions.

It is usually helpful to split them into four blocks: which questions the system may answer on its own, which parts of the chart it may read, when it must stay silent and call a human, and how the patient will be shown that the answer was prepared by the system.

Without a doctor, the system should usually answer only reference questions about already recorded information. For example, it may provide the date of the last visit, a list of ordered tests, the text of a discharge summary, or previously issued recommendations without changes. If the question asks for advice, interpretation of a new symptom, a dosage change, or a risk assessment, the clinic should immediately move it out of automatic mode.

It also needs to be clear which parts of the chart the model can access. A common mistake is opening the entire record. It is better to give access only to the sections needed for the answer: visit history, lab results, prescriptions, discharge notes, allergies. Especially sensitive parts, such as psychiatric notes, reproductive history, or free-text doctor notes, should be included only under a separate rule and with explicit consent.
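The section rule above can be reduced to two sets. The section names below are hypothetical and would need to match the clinic's own chart structure. The design choice is that a sensitive section opens only when the patient's explicit consent for that section is on record, and a section not listed anywhere is never opened.

```python
# Hypothetical section names; align them with the clinic's own chart structure.
DEFAULT_SECTIONS = {"visit_history", "lab_results", "prescriptions", "discharge_notes", "allergies"}
SENSITIVE_SECTIONS = {"psychiatric_notes", "reproductive_history", "free_text_notes"}


def readable_sections(requested: set[str], explicit_consents: set[str]) -> set[str]:
    """Return the chart sections the model may read for this answer.

    Default sections are open; a sensitive section is open only with explicit
    consent for that section; anything else is never opened.
    """
    allowed = requested & DEFAULT_SECTIONS
    allowed |= requested & SENSITIVE_SECTIONS & explicit_consents
    return allowed
```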

Blocking rules should be simple. If the patient reports a new symptom, says they are getting worse, asks "Is this dangerous?", asks for a diagnosis, or if the chart lacks enough data, the system should not answer the substance of the question. It should pass the conversation to a doctor or operator. The same rule is useful for pediatric patients and questions about strong medications.

The patient should see that chart-based answers were produced by the system. This notice is best shown in every channel: in the app, in chat, and in the personal account. A short note is usually enough: the answer was prepared automatically from your chart, and a doctor did not review it. A clear way to request a manual review should be right next to it.
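The blocking rules and the disclosure note can be sketched together. The trigger phrases are illustrative English substrings, not a validated classifier, and `route_chart_question`, `Routing`, and the disclosure wording are hypothetical. Pediatric patients and charts without enough data are handed off before the question text is inspected at all.

```python
from dataclasses import dataclass

# Illustrative trigger substrings; NOT a validated classifier. Broad matches such
# as "dose" also catch some harmless reference questions, which errs toward a human.
ESCALATION_TRIGGERS = (
    "new symptom",
    "worse",      # "getting worse", "feel worse"
    "dangerous",  # "is this dangerous?"
    "diagnos",    # "diagnosis", "diagnose"
    "dose",
    "dosage",
)
DISCLOSURE = (
    "This answer was prepared automatically from your chart and was not "
    "reviewed by a doctor. You can ask for a manual review."
)


@dataclass
class Routing:
    handoff: bool
    reason: str


def route_chart_question(question: str, is_pediatric: bool, chart_has_data: bool) -> Routing:
    """Decide whether the system may answer a chart question or must hand it to a person."""
    if is_pediatric:
        return Routing(True, "pediatric patient")
    if not chart_has_data:
        return Routing(True, "not enough data in chart")
    q = question.lower()
    for trigger in ESCALATION_TRIGGERS:
        if trigger in q:
            return Routing(True, f"trigger: {trigger}")
    return Routing(False, "reference question")


def with_disclosure(answer: str) -> str:
    """Append the automatic-answer notice to every answer shown to the patient."""
    return f"{answer}\n\n{DISCLOSURE}"
```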

For AI audits, three more fields are useful: the consent text version, the confirmation date, and a log of which parts of the chart the system opened for each answer. If these boundaries cannot be described in two or three simple rules, it is better not to launch the mode yet.

How to collect and update consent

A single checkbox covering every case almost always breaks the process. If the clinic wants to use LLMs carefully, it should collect consent per scenario: chart summarization, triage, and answers based on the medical chart. A patient may agree to one scenario and refuse another. That is a normal choice, and the form should support it.

Before any data is entered, the clinic should show a short and clear text, not a long legal wall. The patient should immediately understand three things: what data the system will receive, what task it will be used for, and who will see the result.

The basic process is simple:

- Split the scenarios into separate items with separate checkboxes.

- Before each item, add two or three short sentences about the goal and the risk of error.

- Save the date, the form version, and the channel where the patient gave consent: desk, app, call center, or website.

- Give the patient a way to withdraw consent or keep only one scenario.

- If the clinic changes the scenario itself, the model provider, or the data transfer path, collect consent again.
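These steps map naturally onto one record per patient and scenario. A minimal in-memory sketch, assuming hypothetical scenario and channel names; a real system would persist the records and tie them to the clinic's own patient identifiers. Withdrawal sets a timestamp instead of deleting the record, so the history of what the patient allowed stays auditable.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical identifiers; use the clinic's own names.
SCENARIOS = {"summarization", "triage", "chart_answers"}
CHANNELS = {"desk", "app", "call_center", "website"}


@dataclass
class ConsentRecord:
    patient_id: str
    scenario: str
    form_version: str  # version of the exact text the patient saw
    channel: str
    given_at: datetime
    withdrawn_at: Optional[datetime] = None

    def __post_init__(self) -> None:
        if self.scenario not in SCENARIOS:
            raise ValueError(f"unknown scenario: {self.scenario}")
        if self.channel not in CHANNELS:
            raise ValueError(f"unknown channel: {self.channel}")


class ConsentRegistry:
    """In-memory sketch: one record per consent event, per patient and scenario."""

    def __init__(self) -> None:
        self._records: list[ConsentRecord] = []

    def give(self, patient_id: str, scenario: str, form_version: str, channel: str) -> ConsentRecord:
        record = ConsentRecord(patient_id, scenario, form_version, channel,
                               given_at=datetime.now(timezone.utc))
        self._records.append(record)
        return record

    def withdraw(self, patient_id: str, scenario: str) -> None:
        # Mark active records as withdrawn instead of deleting them, for audit.
        now = datetime.now(timezone.utc)
        for record in self._records:
            if (record.patient_id, record.scenario) == (patient_id, scenario) and record.withdrawn_at is None:
                record.withdrawn_at = now

    def is_allowed(self, patient_id: str, scenario: str) -> bool:
        return any(
            (r.patient_id, r.scenario) == (patient_id, scenario) and r.withdrawn_at is None
            for r in self._records
        )
```

Because each scenario has its own records, a patient can hold consent for summarization while triage stays refused, which is exactly the choice the form is supposed to support.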

This minimum is often enough to avoid later disputes about what exactly the person agreed to. It is also convenient for the clinic: during a review, you can quickly open the record and see what the patient allowed, when, and in what form.

It is helpful to store not only the fact of consent, but also the text of the form version the person saw. Otherwise, in six months you may find the date but not be able to show what exactly was written that day. If the clinic uses an LLM platform or a gateway with audit logs, the consent record should be linked to the request log. Then you can see which scenario was launched and whether the process stayed within the allowed scope.
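A sketch of that linkage, assuming hypothetical `FormVersion` and `RequestLogEntry` records. Each published form text is stored once under its version, a content hash goes into every request log entry, and editing a published version in place is rejected. The `chart_sections` field also covers logging which part of the chart the system opened.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class FormVersion:
    version: str
    text: str

    @property
    def sha256(self) -> str:
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class RequestLogEntry:
    request_id: str
    consent_id: str
    scenario: str
    form_version: str
    form_sha256: str  # proves which exact text the consent refers to
    chart_sections: tuple[str, ...]
    logged_at: datetime


FORMS: dict[str, FormVersion] = {}


def register_form(version: str, text: str) -> None:
    """Store the exact text shown to patients; a published version is immutable."""
    if version in FORMS and FORMS[version].text != text:
        raise ValueError("a published form version must never be edited in place")
    FORMS[version] = FormVersion(version, text)


def log_request(request_id: str, consent_id: str, scenario: str,
                form_version: str, chart_sections: list[str]) -> RequestLogEntry:
    form = FORMS[form_version]  # KeyError if the text shown was never stored
    return RequestLogEntry(request_id, consent_id, scenario, form.version,
                           form.sha256, tuple(chart_sections), datetime.now(timezone.utc))
```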

A simple example: a patient allowed chart summarization for a doctor visit, but did not agree to triage in chat. A month later, the clinic added chart answers. The old consent is no longer enough. The clinic needs to show the text again, explain the purpose, and give the person a choice.
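One way to notice that old consent no longer fits is to fingerprint the settings the consent depends on. This sketch assumes those settings are the scenario, the model provider, the data transfer path, and the chart sections in scope; the function names are hypothetical. A new scenario such as chart answers has no stored fingerprint at all, so it always triggers a fresh request.

```python
import hashlib
import json
from typing import Optional


def scenario_fingerprint(scenario: str, provider: str, data_path: str,
                         chart_access: list[str]) -> str:
    """Stable hash of the settings a patient's consent depends on."""
    payload = json.dumps(
        {
            "scenario": scenario,
            "provider": provider,
            "data_path": data_path,
            "chart_access": sorted(chart_access),  # order must not matter
        },
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def needs_reconsent(consented: Optional[str], current: str) -> bool:
    """True if the patient never consented (None) or the settings changed since."""
    return consented != current
```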

A good consent form should not be long. It should be precise, readable, and usable in real work.

Example from clinic operations

A patient writes to the clinic chat: "I’ve had a temperature of 38.5 and a sore throat for three days." The chat should not silently send this message to the model. First, the clinic shows separate consent for triage: the complaint text will go to AI for a preliminary urgency assessment, and this is not a diagnosis but a routing recommendation.

The patient clicks consent, and the system immediately records it in the log: time, channel, consent text version, and session ID. The model then suggests a same-day doctor appointment because the fever and sore throat have lasted several days. If the patient declines triage consent, the clinic should offer another path, such as a standard booking through an administrator.

At the visit, the doctor collects complaints, examination findings, and prescriptions. When the visit ends, the model prepares a draft discharge note for the doctor. This is no longer triage, but medical chart summarization, so the clinic asks for consent again. The text should clearly say that AI creates a draft, and the doctor reviews it before it is saved in the chart and sent to the patient.

At this stage, the log usually stores who gave consent and when, what the model was used for, which data was processed, and who reviewed the final text.

A few days later, the patient returns to chat with the question: "When should I take the repeat test?" On the surface, it is a short question, but in substance it is the third scenario: answers based on the medical chart. The system again shows separate consent: it will take data from the chart, prepare a reference answer, and, if that is how the process is designed, send it to a doctor for review.

This example illustrates a simple point: consent should not be hidden behind one general button. Triage, summarization, and chart answers need different wording because the processing purpose, error risk, and review path are different at each step. When the clinic records them separately, it is easier to pass audits and handle disputed cases without guessing.

Where clinics most often make mistakes

Most failures start not with the model, but with the wording of the consent. The clinic writes one broad item into the service agreement and assumes that is enough for any AI scenario. In practice, it does not work that way.

A common mistake is to collect one consent up front for all future scenarios. Today the clinic uses the LLM only for a short visit summary, and three months later it adds chart answers or preliminary triage. The patient did not agree to that. If the wording is too broad, the doctor and administrator later have no clear answer about what is actually allowed.

Another weak point is consent withdrawal. It is often accepted verbally or in chat, but not recorded in the log or reflected in the interface. As a result, the doctor already sees the refusal, but the integration still sends the record to the model. In medicine, this is one of the most unpleasant mistakes: it quickly becomes an audit issue, not just a service issue.
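A minimal guard against that failure, assuming the integration can call a consent lookup right before each transfer. `send_if_allowed`, `ConsentWithdrawn`, and the callback parameters are hypothetical. The point is that consent is checked at the moment of sending rather than cached when the session starts.

```python
from typing import Callable


class ConsentWithdrawn(Exception):
    """Raised when data would be sent for a scenario the patient no longer allows."""


def send_if_allowed(
    patient_id: str,
    scenario: str,
    payload: str,
    is_allowed: Callable[[str, str], bool],
    send: Callable[[str], str],
) -> str:
    # Check consent at the moment of transfer, not once at session start,
    # so a withdrawal recorded a minute ago already blocks this call.
    if not is_allowed(patient_id, scenario):
        raise ConsentWithdrawn(f"{scenario} is not allowed for patient {patient_id}")
    return send(payload)
```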

The same problems tend to repeat:

- Consent is hidden inside general terms, with no separate choice for AI scenarios.

- One permission is reused for new tasks even though the risk is higher.

- Consent withdrawal does not block data transfer.

- The model answers from the chart without a rule for when the conversation must move to a doctor.

- The entire chart is sent, even though the task needs only a small fragment. This is perhaps the most common mistake.

Clinics often underestimate the last point. If the patient asked about a recent test result, there is no need to send the entire five-year history to the model. The more data you send, the greater the chance of unnecessary leakage, confusion in the answer, and a dispute about what the patient actually agreed to.

It is also dangerous to have no clear threshold for handing the case to a doctor. For example, the system keeps answering from the chart even though it contains oncology, pregnancy, psychiatric status, or questionable prescriptions. In such cases, it should stop and pass the question to a person, not try to answer at any cost.

Even a good technical setup will not save the process if the consent is vague and the patient status is not recorded anywhere.

A short check before launch

If the clinic cannot pass this check in 10 minutes, it is better not to turn the scenario on. For triage, summarization, and chart answers, this is normal protection for both the patient and the clinic.

The patient should clearly see where the system does triage, where it prepares a draft from the chart, and where a staff member replies after review. If these roles are mixed together, complaints are almost inevitable.

Before launch, it is worth checking five things:

- The interface does not hide the scenario. The patient sees when an automated mode is working with their data and when a human wrote the message.

- The consent form explains in simple words the purpose, the data set, and the retention period for input, answers, and logs.

- The log stores not only the consent mark, but also the text version, confirmation time, validity period, and the channel used by the patient.

- The team has already tested PII masking on real examples and assigned manual review for disputed, incomplete, and risky answers.

- A doctor or responsible staff member can quickly turn off the scenario for a specific patient without filing an IT request or waiting.

The most common failure looks simple: the form is written neatly, but the process does not match what it promises. For example, the patient agreed to visit summarization, and later the clinic uses the same data for triage or for answering a question from an old chart. For the patient, that is already a different scenario, even if the model and provider did not change.

You also need to check edge cases separately. What does the team do if the patient writes something ambiguous, if the chart contains a psychiatric episode, an HIV status, or someone else’s data from an attached file? If the answer sounds like "we’ll sort it out as we go," the launch is still too rough.

A practical sign of readiness is straightforward: the on-call doctor can understand in one minute how to stop the scenario, compliance can see the consent version in the log, and the patient can easily tell the automatic step from the human reply.

What to do next

Do not try to cover every case at once. It is better to choose one scenario with a clear risk and an easy-to-follow flow. For most clinics, that is either medical chart summarization or chart answers used by the doctor during the visit. One scenario at the start is almost always better than one general consent form for everything.

Describe that scenario on one page in plain language: what exactly the LLM does, what data it receives, who sees the result, where a human checks the answer, how long the logs are kept, and how the patient can withdraw consent. This kind of draft quickly reveals weak spots. They usually hide behind phrases like "we use it to improve service" or "we process anonymized data" that the team cannot explain in terms of real work.

After that, the text should be reviewed by three people: a doctor who understands the clinical risk and the real visit flow, a lawyer who checks the consent wording, withdrawal, and data retention, and a product owner who is responsible for the patient journey and prevents the form from becoming too long and confusing.

If they cannot agree on one paragraph, it is too early to launch the scenario. At this stage, finding the weak spots is worth more than a polished form, because they show where the process is still incomplete.

Then you need a pilot on a limited flow, not on the whole clinic right away. Choose one department, one task group, or one type of request for 2-4 weeks. Collect edge cases separately: the patient did not understand what they agreed to; the doctor does not trust the answer; the system used too much data; the audit log does not help reconstruct what happened.

Look at more than speed. Watch where people stumble. If the administrator spends an extra 40 seconds explaining the form, that is already a signal. If the doctor starts copying the LLM answer into the chart without checking it, the problem is no longer the consent text, but the process itself.

When the scenario passes the pilot without constant manual workarounds, you can move on to infrastructure. If the clinic needs a single OpenAI-compatible gateway with data stored inside Kazakhstan, PII masking, and audit logs, you can separately evaluate AI Router at airouter.kz. That layer does not replace the consent form, but it helps build the technical setup so you do not have to rebuild it later from scratch.

Frequently asked questions

Why can't one general consent cover all LLM scenarios?

Because these actions have different goals and different risks. Summarization creates a draft from already recorded data, triage affects urgency, and chart answers open past visits, lab results, and prescriptions. If you put everything into one item, the patient will not understand what they actually agreed to.

Do summarization, triage, and chart answers need separate consent?

Yes, it is better to split consent into three separate scenarios. That way, a patient can allow, for example, a draft discharge note, but refuse automated chart answers or chat triage. It is also easier for the clinic: later you can see exactly what was allowed and when.

What data can be sent to the model for chart summarization?

Take only what is needed for the specific task. If the doctor only needs a summary of the current visit, do not send the full history, contact details, insurance fields, or billing data. The narrower the data set, the lower the risk of unnecessary access and confusion in the answer.

What should the patient be told before triage?

Before the conversation starts, the person should see what they enter themselves and why the system reads it. Usually, complaints, symptom duration, temperature, pain, age, pregnancy, chronic conditions, medications, and allergies are enough. If there is a free-text field, it is better to warn people directly not to enter extra personal details there.

When should the model not answer on its own?

The system should stop if the case involves chest pain, shortness of breath, loss of consciousness, signs of stroke, heavy bleeding, seizures, suicidal thoughts, or a sudden decline in a child. The same rule applies to new dangerous symptoms and situations where the person cannot describe their condition clearly. In those cases, a staff member or emergency help is needed, not another conversation with the model.

Can a patient agree to one scenario and refuse the others?

Yes. That is a normal choice, and the form should support it without workarounds. Otherwise, the consent is formal rather than truly informed.

What should be stored in the consent and audit log?

Store not just the consent mark, but also the text version the patient saw. It is useful to record the date, time, confirmation channel, validity period, the scenario that was launched, and who later checked the result. If possible, link it to the request log itself.

Do we need to ask for consent again when the model or process changes?

Yes, if the scenario itself changes, the model provider changes, the data transfer path changes, or the level of chart access changes. Old consent should not be stretched to cover a new task just because the interface looks similar. The person should see a clear explanation again and choose whether they agree.

How should draft and final chart responses be stored?

It is better to keep draft text separate from the final record. The draft should be stored for a limited time, while the verified version goes into the medical record with a note about who approved it. That makes it easier for the doctor to stand behind the result and for the clinic to review disputes.

Which LLM scenario should a clinic start with?

Start with one clear scenario, not everything at once. In most clinics, it is easier to begin with visit summarization or chart answers used by the doctor during the visit, where a person can quickly check the result. A pilot in one department over two to four weeks will show where the form, interface, or workflow still breaks.