Open-Weight Model or Closed One: Where Each Works Best

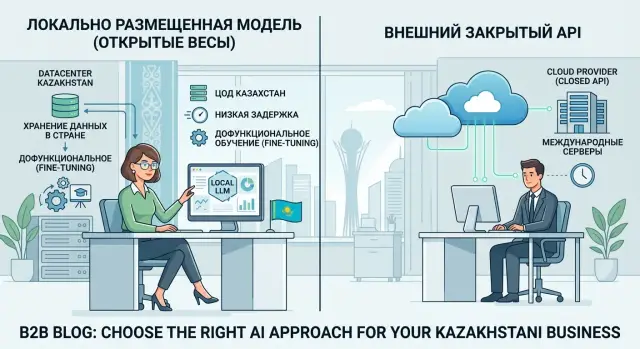

An open-weight model often wins where local data storage, low latency, and fine-tuning for your own processes matter most.

Why the choice of model often comes down to more than answer quality

In a demo, the model that writes a little cleaner and sounds more confident usually wins. In a real service, that is not enough. Teams almost always choose not the “smartest” answer, but the best balance of risk, price, and response time.

If an operator, customer, or employee waits 4 seconds instead of 700 milliseconds, the difference is obvious right away. If the cost per request suddenly doubles at scale, that becomes a real problem too. Even a strong benchmark result will not save a product if answers arrive too late or the budget gets too high.

It is better to compare models not by a polished screenshot or one lucky prompt, but by an ordinary working scenario: your real text volume, your fields, your constraints, and your traffic peaks. In that kind of test, it often becomes clear that a closed API gives a good answer but starts to slow down on long requests, while a simpler model keeps pace and costs noticeably less.

There is also another layer that is often forgotten in demos: where the data and logs live. For teams in Kazakhstan and Central Asia, that is not a formality. If requests, attachments, or audit logs leave the country, a launch can stop during security and compliance review even if everyone is happy with the answer quality.

That is why an open-weight model sometimes beats a strong closed one, not because it writes better, but because it fits real work better. It can answer faster, keep data inside the country, and give you more control over costs. In production, that is often more important than a few extra benchmark points.

Where a closed API starts to get in the way

A closed API is convenient while the team is testing an idea on clean, anonymized data. Problems start in production, when requests begin to include personal data, contract numbers, medical records, or internal correspondence.

The first blocker is that data and logs leave the country. For a bank, clinic, or government team, this is often not a convenience issue but an approval issue. Even if the model answers well, legal and security teams may simply not allow that setup to go live.

The second problem is latency. A request goes out to an external system, passes through the provider’s network, and comes back. For an occasional text query, that is manageable, but in a chat with an operator, a voice bot, or CRM suggestions, a few extra hundred milliseconds are already noticeable. The conversation feels broken, the employee waits, and the customer gets annoyed.

There is also a quieter risk: the team ends up living by someone else’s rules. The provider changes limits, updates the model, adjusts prices, or removes some features. The code may stay the same, but the model behavior has already changed.

Usually it shows up like this: security approval drags on for weeks, users notice pauses in responses, style or accuracy changes after an update, and employees have to rewrite prompts again to match internal terms.

There is one more limitation. A closed API usually understands general language pretty well, but it often gets confused by your abbreviations, status codes, product names, and document templates. If the provider does not offer convenient fine-tuning, or if that data cannot be sent outside, quality quickly hits a ceiling.

At that point, an open-weight model no longer looks like something exotic or difficult. It gives you more control where local storage, low latency, and precise knowledge of your domain matter.

When open weights bring more value

Open weights do not win every time. But in day-to-day work, they are often more practical than a closed API if the team needs control, not just the best answer in a generic test.

The first kind of task is when the model needs to stay close to the data. If documents, correspondence, and requests already live inside the internal environment, local deployment removes unnecessary data transfer outside. That lowers risk, makes security approval easier, and gives more freedom in setup.

The second case is when fast response matters. In chat, even a one-second difference is noticeable. In voice, it is even more frustrating: the person pauses, interrupts the system, or thinks it has frozen. When the team runs the model closer to the user, latency is usually lower and the reply feels smoother.

The third case is when the model needs to adapt to the company’s way of working. A closed API may write good general text, but it often understands internal codes, forms, abbreviations, and standard company documents less well. A fine-tuned open-weight model is more likely to answer accurately in a narrow process. For example, a support assistant can distinguish ticket statuses faster and stop mixing up internal plan names.

There is also a simple business reason. With steady load, the cost of an open-weight model is usually easier to predict. The team knows how much infrastructure costs, how many requests pass through per hour, and where the load limit is. With a closed API, the bill can jump around more because of token volume, model choice, and external limits.

If you have a constant request flow, strict data rules, and a narrow subject area, open weights often bring more value than an external API.

What changes when the team keeps data in the country

When prompts, files, and logs stay inside the country, it is not just the storage location that changes. The project’s risk changes too. It becomes easier for the team to explain where the data goes, who can access it, and how deletion works.

For a bank, telecom team, or government organization, this often removes the most painful question: can the pilot even be launched on real working data. If the data does not leave the country, security and legal review usually goes more smoothly. There is no need to untangle a long chain of external contractors and exceptions.

Local hosting also gives a clearer operating setup. Inside the country, you can keep not only the model’s answers, but the entire service trail: source prompts, attachments, request logs, access tags, and change history.

In practice, that makes four things easier: the data route is simpler to describe for internal review, PII masking can be added before the request is sent to the model, audit logs can be kept by key, team, or service, and there are fewer arguments about whether working documents can be used in a pilot.

The difference becomes especially clear once the project moves beyond demo mode. While the team is testing the model on anonymized examples, almost any closed API looks convenient. But as soon as customer requests, contracts, medical records, or internal emails enter the process, the question of where the data lives becomes more important than a few percentage points in answer quality.

A good example is an internal assistant for a bank’s contact center. If the service runs through an external API outside the country, every new type of data triggers extra checks. If the same setup keeps prompts and logs local while PII is masked before processing, the pilot is much easier to discuss.

For these scenarios, some teams use local hosting or a gateway like AI Router. It supports open-weight models hosted in Kazakhstan, PII masking, and audit logs, which helps where a project simply will not pass without a local setup.

Where low latency really matters

Latency shows up not in tests, but in live work. If an operator waits 4 seconds for every answer, they quickly stop using model suggestions and go back to manual work.

In an in-app chat, pace matters even more. The user asks a question, sees a pause, then another pause, and the conversation loses rhythm. Even a good answer does not feel useful if it arrives too late. A fast, short reply often reduces abandoned sessions more than a small gain in model quality.

Often, the time is not spent only on generation. It is eaten by the request’s journey: an external API in another region, an extra network round trip, queues at the provider. When the team keeps the model closer to the app, that layer disappears. That is why an open-weight model sometimes wins over a closed API: the answer may be a little simpler, but it arrives on time.

Low latency is especially important in an operator assistant during a call, in a mobile app chat, in internal knowledge search when the model is called many times in one session, and in voice scenarios where a long pause sounds like a failure.

If the team needs that kind of setup, local hosting gives more control. On your own infrastructure or through AI Router, you can keep the model close to your data and services instead of sending every request to an external system. For tasks where conversation pace matters, that is often more important than the difference in benchmark quality.

Why fine-tune a model for your process

Fine-tuning makes sense where the model solves the same work task every day. A general model may write well, but it often mixes up internal names, statuses, and response rules. For a business, that is not a small issue: one inaccurate phrase in a request, chat, or customer record quickly turns into manual corrections.

A good example is an internal assistant for employees. If the company has products with similar names, its own review stages, and short service statuses, a model without fine-tuning starts guessing. It may confuse the request type, name an old form, or answer in overly broad language. After tuning, it already knows your vocabulary and makes fewer mistakes on simple but frequent requests.

Another benefit is that you can significantly shorten the system prompt. Without fine-tuning, teams often put everything into it at once: tone of voice, product list, escalation rules, text format, and bans on extra phrasing. Such a prompt grows too long, answers get slower, and model behavior still drifts. A fine-tuned model already holds the basic rules inside, so the prompt only needs the task and fresh context.

Fine-tuning is usually needed when the model must distinguish your products, plans, and internal statuses, answer in one tone across all channels, follow an exact format for a form, CRM, or JSON, and adapt quickly after rule or template changes.

This is where open weights are often more convenient than an external API. Such a model is easier to adapt to your process and update when your team needs it, not the vendor. If the company works with local infrastructure or through AI Router, where open-weight models and fine-tuning options are available, this path also removes part of the data storage and response-time issues.

When legal teams change the consent text or operations introduce a new status, the team can update the dataset and re-release the model. That is usually more reliable than rewriting a long prompt every time and hoping the model remembers the new rule.

How to make the decision without a long pilot

A benchmark table is almost always misleading for production. To choose between a closed API and an option where an open-weight model runs on your side or with a local provider, a short check on real tasks is often enough.

Use not abstract prompts, but 3–5 live scenarios. Better yet, choose the ones that already create business load: a customer reply, document review, knowledge base search, or a short call summary. One successful demo request proves almost nothing.

Then run the real inputs end to end: with the system prompt, conversation history, and normal text volume. Measure latency from the user’s action to the final answer, not just generation speed. The difference between 0.8 and 3 seconds changes the experience a lot in chat and in an operator console.

Right away, mark the fields that cannot leave the country: IIN, account number, phone number, medical data, internal documents. After that, some options will drop out on their own. Then calculate the cost per thousand real requests with long context, repeated calls, and retries. And separately decide whether you need control over the model version. If your process depends on a stable response format, an unexpected update from a closed API can break the flow in a single day.

This kind of test can be done in a few days, not months. If you need a single OpenAI-compatible gateway, AI Router lets you run the same request set through different models by changing only the base_url, then compare quality, latency, cost, and data constraints without rewriting the code.

After that kind of check, the decision becomes much easier. If one model is a little smarter but loses on latency, cost, or data storage rules, the choice is no longer theoretical. It becomes practical.

Example: a bank assistant

In a bank, this kind of assistant is usually needed by employees, not customers. An operator, lawyer, or back-office specialist looks for answers in policies, tariffs, email templates, and internal instructions every day. If searching takes 7–10 minutes per question, the queue grows very quickly.

The problem is that requests are rarely “clean.” They almost always include a full name, IIN, contract number, account balance, or the text of a customer complaint. Sending that data to an external API is already inconvenient for many banks at the security-policy level. Even if the external model gives a stronger answer, the risk and approval process eat up the benefit.

In that situation, an open-weight model often brings more value. It can be run locally, keep data inside the country, and add PII masking before the request reaches the model. The employee writes the question in a normal form, and the system hides sensitive fields while keeping only the context it needs. The risk is lower, and the process still works.

Fine-tuning for the bank’s internal language brings another benefit. Without it, the assistant answers too broadly or mixes up wording from different regulations. After tuning, it is more likely to use the right templates, the correct product names, and the familiar response style. The employee spends less time making manual edits before sending the answer to customers or colleagues.

Confusing answers are also easier to review when the system keeps audit logs. A manager can see which question the employee asked, which document went into context, what the model answered, and where it went wrong. That helps both with control and with improving prompts, masking rules, and the document set.

Common mistakes when choosing

Teams often pick a model based on a polished demo or a high spot in a general ranking. That almost always leads them in the wrong direction. If the real flow consists of short requests, internal terms, tables, and Russian and Kazakh conversations, you should look not at other people’s tests, but at your own 50–100 typical cases.

The second common mistake is counting only token price. Cheap tokens do not help if the model responds slowly, needs long context, often fails at field extraction, or requires repeated calls. In production, money goes not only to generation, but also to retries, manual checks, support, and team downtime.

Problems often start in four places. Teams use a public benchmark instead of their own chats, emails, and documents. They prepare a fine-tuning dataset without cleaning it and leave in personal data, duplicates, and noise. They try to force every scenario onto one model, even though classification, knowledge search, and customer chat need different behavior. And they launch access without key limits or a proper activity log, then later cannot tell who burned the budget or where the flow broke.

A dirty fine-tuning dataset is especially expensive. The team expects the model to understand the internal language better, but gets old mistakes in a new package. If the examples contain too many duplicates, contradictions, and extra personal data, the model learns the noise along with the useful rules.

A quick check before launch

A model-choice mistake usually shows up not in the demo, but after the first real workload. If the team answers a few simple questions in advance, it saves weeks of integration rework, approvals, and unnecessary costs.

- Does the scenario break, or just become annoying, if the user waits more than 1–2 seconds for an answer?

- Do you need to keep data in the country, including prompts, logs, and personal fields?

- Do you already have at least a few hundred good examples for fine-tuning one process?

- Has the load grown to the point where you are already paying for tokens every day in a noticeable amount?

- Can you switch models in a day, not a month?

If the answer is “yes” to at least two questions, do not look only at answer quality in a vacuum. Compare the whole setup: where the data lives, what response time you can sustain under load, whether the model can be fine-tuned, and how quickly the team can replace the provider.

In those cases, open weights often turn out to be more useful than they first seem. And if the team uses an OpenAI-compatible gateway, it can switch providers or move to its own hosting without rewriting the SDK, code, or prompts.

What to do next

Do not try to solve everything at once. Take one scenario where a mistake is expensive: a customer reply, request review, an operator suggestion, or search through internal documents. In a case like that, the difference between a closed API and your own model becomes visible quickly, without a six-month pilot.

Then move ahead with a simple plan. Build a set of 50–100 real requests and include not only normal cases, but also difficult ones: long documents, ambiguous wording, sensitive data. Run that set through the external model and through the open-weight model. Look not only at answer quality, but also at latency, cost per request, stability, and data flow.

Another practical step is not to hardwire model choice directly into the application. Keep a single API layer between the product and the models so you can later change the route, provider, and rules without rewriting code. That removes unnecessary rigidity and gives you more freedom when requirements change after launch.

Sometimes the final setup is mixed. The team keeps general text on the external API and uses an open-weight model on local hosting for sensitive or frequent tasks. In practice, that is usually more sensible than trying to find one perfect model for everything at once.

Frequently asked questions

What matters more when choosing a model: answer quality or speed?

Start with the use case. If a person waits for an answer in chat, voice, or an operator window, latency and price often matter more than slightly nicer wording. A model with the best demo answer can still lose in real work if it responds slowly or becomes too expensive at scale.

When does a closed API start getting in the way?

Problems usually start where requests include personal data, internal documents, or strict log requirements. The service may work well on the surface, but legal and security teams often stop the launch if the data leaves the country. The second common reason is extra delay on every request.

Why keep prompts and logs inside the country?

Because it makes it easier for the team to explain the data flow, access, and deletion. For a bank, clinic, or government team, that often removes the main concern before the pilot even starts. When prompts, files, and audit logs stay local, approvals usually move more smoothly and the project is less likely to get blocked by reviews.

In which tasks do open weights usually win?

They work well for tasks with steady load, a narrow vocabulary, and strict data requirements. If the model has to stay close to the documents, respond quickly, and understand internal codes, a local option often brings more value than an external API. This is especially noticeable in support, contact centers, and internal assistants.

When is it time to fine-tune a model for your process?

Fine-tuning makes sense when the model solves the same process every day and keeps mixing up your names, statuses, or response format. After tuning, it handles the company vocabulary better and needs a shorter system prompt. That is useful for CRM, requests, regulations, and standard replies to staff.

Are open weights always cheaper than a closed API?

Not always. For small volumes, an external API is often simpler and cheaper to start with. But under steady load, your own infrastructure is easier to plan: the team sees infrastructure cost, request limits, and does not depend on someone else’s quotas or token bill spikes.

How can you compare two models quickly without a months-long pilot?

Take 50–100 real requests, not abstract examples. Run them through both options with the same system prompt, conversation history, and normal context length. Then compare four things: quality, latency, cost per request, and data flow. This often takes just a few days.

Can you use a closed API and a local model together?

Yes, and that is often the most sensible approach. General text can stay on an external API, while sensitive or high-volume tasks move to a local model. That way, the team does not need one option for everything and can balance quality, speed, and data rules.

What mistakes do teams make most often when choosing a model?

Most often, teams look at a pretty demo, compare only token price, and choose one model for every task. Another common mistake is a dirty fine-tuning dataset with duplicates, noise, and personal data. After that, the model learns someone else’s mistakes instead of your rules.

Why put a single API layer between the product and the models?

It gives you flexibility for the future. If you keep one OpenAI-compatible layer between the app and the models, the team can switch providers, routes, or model types without rewriting the SDK or prompts. For this kind of setup, AI Router works well too: you can change the base_url and compare different models in one setup.