Migration to Multiple AI Providers Without Service Downtime

Migrating to multiple AI providers without downtime: stages, SDK compatibility checks, shadow launch, and response comparison before switching.

Why one provider starts to get in the way

One provider is convenient at the beginning. Setup is quick, there are few settings, and billing is easy to understand. But in production, that simplicity turns into dependence on someone else’s limits, queues, and rules.

The problem usually shows up at peak time, not on a calm day. Traffic grows, marketing launches a promotion, the contact center starts sending a lot of calls for summarization, and the external API suddenly responds more slowly or starts rejecting requests with rate-limit errors. For the user, it looks simple: the service takes longer to "think," returns an error, or gives back an empty response. For the team, it is already an SLA hit.

Even a short outage quickly creates more problems. If the LLM is part of onboarding, search, support, or document review, a 10-20 second delay backs up the queues. Users repeat requests, load grows even more, and so do costs.

Manual switching rarely helps. When the team rushes to change keys, base_url, the model, or the provider directly in code, small issues almost always appear: a different error format, different timeouts, tool calling differences, an unexpected context limit. On paper, the service has already been "switched," but in reality some scenarios are broken.

A second provider is usually needed once at least one of these signs appears:

- rate limit errors have become a normal part of work, not a rare exception;

- response latency changes noticeably at different times of day;

- one vendor does not meet data, audit, or hosting requirements;

- you cannot safely test a cheaper or stronger model without risking production.

For companies in Kazakhstan and Central Asia, there is another factor. If data must be stored inside the country or you need audit logs and PII masking, one foreign API becomes not just a technical bottleneck, but an organizational one as well. In that situation, moving to multiple AI providers is not a "later improvement" but standard service protection.

A mature team does not wait for an incident. It prepares a backup route in advance, compares model responses, and sets up switching so the user does not notice it.

What to check before the first step

Migrations usually break not on the models themselves, but on the details the team stopped noticing a long time ago. Before the first test, it helps to build a clear picture: which requests go to production, which models handle them, and where an error is already costing money or time.

Start with a list of tasks. Not "chat" or "analytics" in general, but specific scenarios: customer support, call summarization, knowledge base search, document review. Next to each one, note the model, average request size, expected response length, and acceptable latency. Often, even at this stage, it becomes clear that one expensive model handles everything, while half the tasks would work fine with a cheaper option.

Then check what your code is actually sending to the API. Many teams think they only use model and temperature, but later discover top_p, response_format, seed, tools, max_tokens, and system instructions that accumulated in the project piece by piece. If you do not record these parameters in advance, the provider comparison will not be fair.

Also look at network behavior separately. For moving to multiple providers, it is not enough to answer the question "does it work or not." You need to understand how the service behaves under load. Usually, four checks are enough:

- what timeout is set for a request and whether it is enough for long responses;

- how many retries the client makes and whether they duplicate retries on the gateway side;

- what limits apply by keys, users, and queues;

- what happens on 429, 500, and stream interruption.

After that, establish a baseline. Look not at averages, but at p95 latency, cost per 1000 requests, error rate, the share of empty or cut-off responses, and quality on a control sample. Otherwise, the team will see a nice cost saving and then get more complaints because of weaker answers.
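As a rough illustration of the baseline described above, the sketch below computes p95 latency, cost per 1000 requests, error rate, and the share of empty or cut-off responses from a list of logged requests. The `RequestRecord` fields and the nearest-rank percentile method are assumptions made for the example; your logging schema and monitoring stack may define them differently.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestRecord:
    # Hypothetical log schema, one entry per LLM call.
    latency_ms: float
    cost_usd: float
    ok: bool          # False for timeouts, 429, 5xx, stream breaks
    truncated: bool   # True for empty or cut-off responses


def percentile(values, pct):
    """Nearest-rank percentile; pct is an integer in 1..100."""
    if not values:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def baseline(records):
    """Summarize one route so that two routes can be compared on equal terms."""
    n = len(records)
    if n == 0:
        raise ValueError("no records")
    return {
        "p95_latency_ms": percentile([r.latency_ms for r in records], 95),
        "cost_per_1000": sum(r.cost_usd for r in records) / n * 1000,
        "error_rate": sum(not r.ok for r in records) / n,
        "truncated_share": sum(r.truncated for r in records) / n,
    }
```

Running the same function over the logs of the old and the new route gives two dictionaries that can be compared field by field.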

Another layer of checking is related to data. If requests include personal data, medical records, or banking information, decide in advance what can be sent out, what must be masked, and where logs should be stored. For companies in Kazakhstan, this often affects the whole migration plan from the start, rather than being solved at the last moment.

A good readiness signal is simple: you have a table of scenarios, a list of real request parameters, and a clear baseline for cost, latency, and errors. Without that, any migration quickly turns into a debate of opinions.

How to keep SDK compatibility

The safest move during migration is not to touch client code unless necessary. If the application already works through an OpenAI-compatible API, most of the time you can keep the current SDK and change only the connection point and request settings.

To do that, move base_url, the model name, and keys out of code into config or environment variables. Then the same service can call the old provider, the new gateway, or a fallback model without a separate branch and without emergency fixes before release. In practice, this reduces risk more than many one-off optimizations.
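A minimal sketch of that idea, assuming the settings live in environment variables. The variable names (`LLM_BASE_URL`, `LLM_MODEL`, `LLM_API_KEY`, `LLM_TIMEOUT_S`) and the `LLMConfig` class are hypothetical and only illustrate the pattern.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class LLMConfig:
    base_url: str
    model: str
    api_key: str
    timeout_s: float


def load_config(env=os.environ, prefix="LLM_"):
    """Read connection settings from the environment (variable names are hypothetical)."""
    return LLMConfig(
        base_url=env[prefix + "BASE_URL"].rstrip("/"),
        model=env[prefix + "MODEL"],
        api_key=env[prefix + "API_KEY"],
        # Default timeout is an arbitrary example value, not a recommendation.
        timeout_s=float(env.get(prefix + "TIMEOUT_S", "30")),
    )
```

With the OpenAI Python SDK, these values would typically be passed to the client constructor, for example `OpenAI(base_url=cfg.base_url, api_key=cfg.api_key, timeout=cfg.timeout_s)`. A second call with another prefix, such as `load_config(prefix="FALLBACK_")`, could describe the backup route without a separate code branch.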

If the team already uses the OpenAI SDK, switching to an OpenAI-compatible gateway usually looks simple: base_url changes, and the rest of the code stays the same. With AI Router, that is the basic scenario: requests can be sent to api.airouter.kz and the same SDK, code, and prompts can keep working.

What to check before switching

One successful request is not enough. Errors hide in details that are not visible in a demo.

Check streaming: how chunks arrive, when the completion signal comes, and what your parser does with an empty fragment. Then check tool calling: whether the client builds arguments, tool names, and JSON schemas in the same way. After that, standardize errors into one format: timeout, rate limit, 4xx, 5xx, empty response. And immediately add a single request_id that reaches logs, metrics, and headers.

Next, build a thin compatibility layer inside your own code. Let the app call one internal method, and let that method decide which base_url to use, how to name the model, how long to wait for a response, and how to parse an error. Then provider differences stay in one place instead of spreading across controllers, workers, and retries.
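One way such a layer could standardize outcomes is sketched below. It assumes the transport code already knows the HTTP status, the response text, and whether the call timed out; the category names and the `NormalizedResult` type are illustrative, not part of any SDK.

```python
import uuid
from dataclasses import dataclass
from typing import Optional


@dataclass
class NormalizedResult:
    request_id: str
    provider: str
    kind: str  # "ok", "timeout", "rate_limit", "client_error", "server_error", "empty"
    text: str = ""
    status: Optional[int] = None


def new_request_id():
    """One id per user request, reused for logs, metrics, and headers."""
    return uuid.uuid4().hex


def normalize(provider, request_id, status=None, text="", timed_out=False):
    """Map provider-specific outcomes onto one internal format."""
    if timed_out:
        kind = "timeout"
    elif status == 429:
        kind = "rate_limit"
    elif status is not None and 400 <= status < 500:
        kind = "client_error"
    elif status is not None and status >= 500:
        kind = "server_error"
    elif not text.strip():
        kind = "empty"  # the call succeeded but returned nothing usable
    else:
        kind = "ok"
    return NormalizedResult(request_id, provider, kind, text, status)
```

Controllers, workers, and retry logic then branch on `kind` only, so a provider with a different error format requires changes in one function.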

request_id is not just for neat logs. When you run the old and new routes in parallel, it helps you quickly understand why one request got slower, where streaming broke, and which model returned which response.

There is a simple rule: if the SDK passed a normal text request, that proves nothing yet. The first checks should be on streaming, tool calls, and real errors. That is where compatibility breaks first.

Step-by-step migration plan

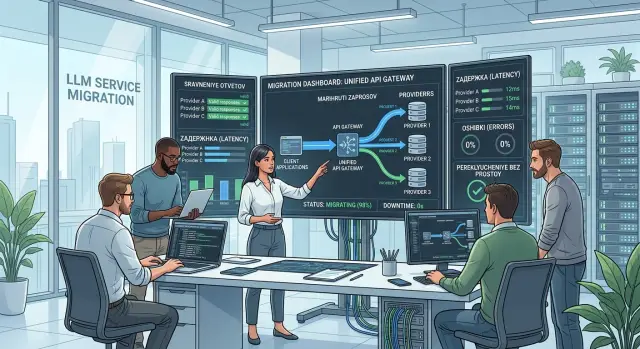

Moving to multiple AI providers goes more smoothly when the team has one entry point for all traffic. Then the request route changes in one place, not separately in every service, worker, and cron job.

If you already have an OpenAI-compatible gateway, the move becomes much simpler. In the case of AI Router, the team can change base_url to api.airouter.kz and keep working through the same SDK and the same call format. That removes unnecessary client-side changes and lets you focus on routing.

A practical order usually looks like this.

- First, put a single routing layer in front of all LLM calls. This can be an internal gateway or an external OpenAI-compatible gateway. The goal is simple: all traffic passes through one point where you can see the provider, model, errors, latency, and cost.

- Then connect the second provider so the product logic does not change. Do not touch business rules, the interface, or the response-processing chain. At this step, only the route under the hood changes.

- After that, enable shadow launch. The user still gets the answer from the old route, while a copy of the same request goes to the new provider. This gives you real data on latency, failures, and quality without risking production.

- If the shadow results look good, send a small share of live traffic to the new route. Usually 1-5% is enough at first, but only for clear scenarios without strict SLAs. The share should grow on schedule, not by feeling.

- Keep a fast rollback ready the whole time. The most convenient option is one flag or routing rule that returns 100% of requests to the old setup within a minute.

Do not overcomplicate this stage. Do not change the provider, prompts, and response format all at once. If you touch everything together, the team will not know what actually broke. It is much calmer to take one visible step at a time: first the route, then the test, then the traffic increase.

How to compare responses before switching

Before switching traffic, build a control set of requests and run it through both routes: the current provider and the new one. Otherwise, you will be comparing not models, but different conditions. The set should include normal requests, hard cases, and errors that happen rarely but are expensive.

Do not look only at the response text. Save four things for each request: the response itself, latency, token usage, and error codes. Even at this stage, it often becomes clear that the new setup is no worse in text quality, but answers 700 ms slower or fails more often on long inputs.

If you only change base_url and keep the same SDK, the comparison becomes cleaner. The code and prompts stay the same, and the difference is visible in the route and model, not in the client side.

What to compare in each test

It is better to compare text by meaning, not by letters. Two models can answer in different words and still both be right. But for structured scenarios, the rules are stricter. If the service must return JSON, check four things:

- whether the schema and field set match;

- whether required values are missing;

- whether the response parses without manual fixes;

- whether data types changed.

This is a common trap. The text looks fine, but the integration fails because a number came back as a string or the model added an explanation outside the JSON.
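The four JSON checks can be automated with a small validator. The sketch below uses only the standard library; the `required` mapping of field names to Python types is a simplified stand-in for a real schema, and the field names in the usage note are made up.

```python
import json


def _type_ok(value, expected):
    types = expected if isinstance(expected, tuple) else (expected,)
    if isinstance(value, bool):
        # bool is a subclass of int in Python, so check it explicitly.
        return bool in types
    return isinstance(value, types)


def check_json(raw, required):
    """Return a list of problems; an empty list means the response passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not parseable: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    problems = []
    for field, expected in required.items():
        if field not in data or data[field] is None:
            problems.append(f"missing required field: {field}")
        elif not _type_ok(data[field], expected):
            problems.append(f"wrong type for {field}: {type(data[field]).__name__}")
    extra = sorted(set(data) - set(required))
    if extra:
        problems.append(f"unexpected fields: {', '.join(extra)}")
    return problems
```

For example, with `required = {"order_id": str, "amount": (int, float)}`, a response where `amount` comes back as `"10.5"` is reported as a type problem, and a response with an explanation written around the JSON is reported as not parseable.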

Test long conversations separately. On a short request there may be no difference, but by the twelfth message one model loses context, starts repeating itself, or forgets the response format. It is also useful to keep a separate set for rare cases: mixed language, typos, empty fields, long documents, abrupt topic changes.

Set an acceptable difference threshold in advance. For a support chat, you might accept 90% semantic match and no more than 2% JSON errors. For application scoring, the bar is usually higher: even a rare mistake is expensive there.

A normal working approach is this: automatic checks flag questionable answers first, and then the team reviews only the deviations above the threshold. That way, comparison does not stretch into weeks and does not turn into reading thousands of nearly identical responses.
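A threshold gate of this kind might look like the sketch below. It assumes each comparison result already carries a boolean `semantic_match` (produced by whatever scorer or reviewer the team trusts) and a boolean `json_ok`; both field names are hypothetical, and the default thresholds simply repeat the support-chat example of 90% and 2%.

```python
def triage(results, min_match_rate=0.90, max_json_error_rate=0.02):
    """Summarize a comparison run and list only the cases that need human review.

    results: list of dicts with keys "id", "semantic_match", "json_ok".
    """
    n = len(results)
    if n == 0:
        raise ValueError("empty comparison run")
    match_rate = sum(bool(r["semantic_match"]) for r in results) / n
    json_error_rate = sum(not r["json_ok"] for r in results) / n
    to_review = [
        r["id"] for r in results
        if not (r["semantic_match"] and r["json_ok"])
    ]
    return {
        "match_rate": match_rate,
        "json_error_rate": json_error_rate,
        "passed": match_rate >= min_match_rate
        and json_error_rate <= max_json_error_rate,
        "to_review": to_review,
    }
```

The `to_review` list is what goes to people; everything else has already passed the automatic checks.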

A simple real-world scenario

Imagine a team with a bot for contact center agents. It suggests answers to common questions: order status, returns, plan changes, card limits. Right now, all requests go to one provider, and any speed drop immediately affects the agents’ queue.

The team does not move all traffic at once. First, it splits requests into two groups. Complex conversations with long context, ambiguous wording, and a high risk of error stay with the current provider. Simple and repetitive questions go to the second route in shadow mode. The agent still sees the answer from the main route, while the new route runs alongside it and does not affect what the agent sees.

This gentle mode is almost always safer than a sudden cutover. Shadow launch shows the difference on live traffic, not on tests, where everything usually looks better than it does in real work.

For the first check, 10-20 common scenarios from the logs of the last week are often enough. It is better to take not the "clean" examples, but the ones where the bot already made mistakes: short replies, typos, mixed languages, sudden topic changes. That is where the new route either passes the test or fails quickly.

The team usually looks at a few simple things:

- does the answer stay within company rules and avoid making things up;

- does it keep the right tone for an agent;

- did response time increase;

- did the price go up for the same type of request.

If the team has one OpenAI-compatible entry point for both main and shadow traffic, comparison is easier to manage. For example, in AI Router both routes can live in one layer, and audit logs help match identical requests by request_id. But even without a separate gateway, the logic is the same: compare first, decide later.

Switching starts only after a stable run. For example, the new route is no worse in quality for three days in a row, fits the required latency, and does not produce a spike in disputed answers on common scenarios. After that, you can move 5% of real traffic, then 20%, and only then the main share.

This path may feel slow. In practice, it often saves a week of post-release analysis and does not force agents to manually fix bot mistakes.

Where teams most often go wrong

The most common mistake is simple: the team tries to change everything at once. Then migration quickly becomes stressful and hard to control. It is much safer to move one request flow, for example only internal search or only summarization of inquiries, and watch latency, cost, and error rate under live load.

The second mistake undermines quality checks before launch. Teams compare responses produced with different prompts, different system instructions, or even different temperature settings. After that, they argue about which model is "better," even though the test was unfair from the start. A fair comparison needs the same input set, the same settings, and clear metrics: task accuracy, response length, latency, cost, and number of failures.

Problems often start at the traffic layer too:

- one provider handles high load, while another starts throttling requests much earlier;

- limits are counted differently: per token, per minute, or per key;

- the team does not plan retries and a fallback route;

- logs do not include the error code and reason for switching.

Because of this, the new route looks stable in tests and falls apart on the first working day. Changing only base_url and keeping SDK compatibility does not mean that provider behavior matches. You need your own rate limits at the key level, queue rules, and a clear policy for when the system retries and when it goes straight to the backup route.
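One possible shape for a retry-or-fallback policy is sketched below. The error categories, the retry count, and the exponential backoff values are assumptions chosen for illustration; the right numbers depend on your SLA and on the limits of each provider.

```python
def next_action(kind, attempt, max_retries=2, base_delay_s=0.5):
    """Decide what to do after a failed call on the current route.

    kind: "timeout", "rate_limit", "server_error", "client_error", or "empty"
    attempt: number of attempts already made on this route (1 = first call failed)
    Returns (action, delay_seconds) where action is "retry", "fallback", or "fail".
    """
    if kind == "client_error":
        # Assumed here to be a problem with the request itself, so it is not retried.
        return ("fail", 0.0)
    if kind == "rate_limit":
        # Do not add load to a provider that is already throttling.
        return ("fallback", 0.0)
    if kind in ("timeout", "server_error", "empty") and attempt <= max_retries:
        # Exponential backoff: 0.5 s, 1 s, 2 s, ...
        return ("retry", base_delay_s * 2 ** (attempt - 1))
    return ("fallback", 0.0)
```

Keeping this decision in one function also makes it easy to check that client retries do not multiply with retries on the gateway side.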

Another common miss is weak auditing. The team stores only the final response, but does not keep the model, provider, time, token count, error reason, and the fact of switching itself. Later, it becomes impossible to understand why the cost went up by 18% or why some requests timed out. Without such logs, you cannot compare providers fairly or find the source of failures.
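A sketch of an audit record that covers the fields listed above. The field names and the JSON-lines style output are assumptions; an existing logging pipeline may already define its own schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class AuditRecord:
    request_id: str
    provider: str
    model: str
    started_at: float             # Unix timestamp of the call
    latency_ms: float
    prompt_tokens: int
    completion_tokens: int
    error_kind: Optional[str] = None      # e.g. "timeout", "rate_limit"
    switched_from: Optional[str] = None   # provider the request left, if any
    switch_reason: Optional[str] = None   # why the route changed

    def to_json(self):
        """Serialize to one line of JSON, suitable for append-only logs."""
        return json.dumps(asdict(self), sort_keys=True)
```

The record deliberately stores no prompt or response text, so it can be kept even where request content falls under PII rules.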

Data storage is also an important issue. For banks, telecom, healthcare, and the public sector, this is not a formality. If requests contain personal data, you cannot send them anywhere just to get a lower price. In Kazakhstan, teams usually check data residency, PII masking, and audit logs right away. If one entry point to multiple models is needed for that, it is convenient to handle those requirements at the gateway level. For example, AI Router offers in-country data storage, audit logs, PII masking, and key-level limits, but routing rules and the data set itself still need to be configured by the team.

A good sign of a mature migration is simple: first you see where each request goes and why, and only then do you expand traffic.

Quick checks before switching

A day before the route change, the team should be able to move traffic with one setting, not by editing code in every service. If you still need to open the repository, build a container, and wait for release just to change providers, zero-downtime switching is in doubt.

A reliable setup is simple: the app takes base_url, the model, and the routing rule from config, an environment variable, or a feature flag. Then the entry point and model selection logic change, while the SDK, prompts, and client code stay in place.

Before the final step, check a short list:

- monitoring shows not only average latency, but also p95, p99, error rate, timeouts, and stream breaks for each route;

- the team has already rehearsed a manual rollback and knows which flag to flip back, who does it, and how many minutes the return takes;

- the test set covers frequent requests, rare scenarios, long conversations, large documents, empty fields, and traffic spikes;

- finance has approved the new setup: limits, tracking spend by provider, the monthly invoice, and internal cost centers;

- security has confirmed the rules for PII, logs, data storage, and content labels if they are required by internal or local regulations.

Also check shadow launch separately. It often looks "almost ready," even though the comparison ran only on convenient examples. You need live requests from production, even if only on a small share of traffic. Otherwise, the new route will pass the demo, but start failing on long prompts, unstable network responses, or unexpected JSON formats.

A small example. Bank support sends short questions, while internal search processes long documents with tables. If you only tested the first type of request, monitoring will show normal average latency. But after switching, the second flow will increase timeouts and parsing errors. Things like this only appear when the test set looks like real load.

If the team uses an OpenAI-compatible gateway, it is worth checking the SDK once on the live client before launch, not in an isolated script. Sometimes the small difference is not in the API, but in retries, timeouts, or the parsing of streaming responses.

If even one item is not covered, do not move all traffic at once. Start with 5-10%, watch the metrics for 30-60 minutes, and only then increase the share.

What to do after launch

Right after switching, do not leave the system on autopilot. During the first few days, the team should check every day where requests went, where a fallback or rollback was triggered, and why it was needed at all: timeout, price increase, empty response, broken format, or quality drop.

Problems are usually visible not in the overall summary, but by route. One provider may be fast on short requests but slower on long ones. Another may maintain quality but be too expensive on simple tasks. If you only look at averages, it is easy to miss those imbalances.

Set rules, not manual firefighting

After launch, it helps to lock in clear routing rules based on cost, speed, and quality. Do not make them too complicated on the first day. To start, basic logic is enough: a cheaper route for simple tasks, a stronger model for harder ones, and a fallback path on error or delay.

A working setup usually includes four things:

- a cost limit for each request type;

- a latency threshold after which the request moves to a fallback route;

- a separate route for tasks where quality matters more than cost;

- logging the reason for each switch.
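The four rules above can be written down as data plus one small decision function. Everything in this sketch is hypothetical: the task names, model names, cost limits, and latency thresholds are placeholders, and blocking a request that exceeds its cost limit is only one possible reaction.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RouteRule:
    primary: str
    fallback: str
    max_cost_usd: float      # cost limit for one request of this type
    max_latency_ms: float    # beyond this, the request moves to the fallback


# Placeholder values for illustration only.
RULES = {
    "faq": RouteRule("cheap-model", "strong-model", 0.002, 3000),
    # Quality matters more than cost here, so the stronger model is primary.
    "document_review": RouteRule("strong-model", "cheap-model", 0.05, 20000),
}


def decide(task, est_cost_usd, latency_ms=None, error_kind: Optional[str] = None):
    """Return (route, reason); the reason is always recorded, even for the default."""
    rule = RULES[task]
    if est_cost_usd > rule.max_cost_usd:
        return (None, "cost_limit")           # block or queue for review
    if error_kind is not None:
        return (rule.fallback, f"error:{error_kind}")
    if latency_ms is not None and latency_ms > rule.max_latency_ms:
        return (rule.fallback, "latency_threshold")
    return (rule.primary, "default")
```

Since every decision returns a reason, the log answers the question of why traffic goes a certain way without relying on anyone's memory.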

If these rules are not written down anywhere, the team quickly falls back to manual exceptions. A month later, nobody remembers why the traffic goes that way.

Lock in the test set you used to compare responses before release. Do not change it without a reason. That set will be useful for every future change: a new model, a new price, a new provider, or new system prompts. Otherwise, every new comparison becomes another debate based on impressions.

Another practical step is to describe the switching procedure for the on-call team. Who makes the decision, at what error rate rollback is triggered, where to look at logs, how to check quality degradation, and who informs the business. A short one-page runbook is better than a long document nobody will open at night.

If the team needs one OpenAI-compatible endpoint without rewriting the SDK, it is better to think through that layer in advance. For teams in Kazakhstan, this is often also tied to in-country data storage, audit logs, PII masking, and local legal requirements. In such a setup, you can use AI Router: a single OpenRouter-compatible and OpenAI-compatible API gateway that makes it easy to route requests between different models and providers without changing the existing client code.

Frequently asked questions

When is it time to add a second AI provider?

A second provider is needed before an outage happens. If 429 errors become frequent, latency varies by time of day, or one vendor cannot meet your logging, PII, or data storage requirements, it is time to prepare a fallback path.

Teams usually wait too long and start migrating under SLA pressure. It is much calmer to connect a second route in advance and test it on live traffic in shadow mode.

Can we migrate without rewriting the SDK?

Yes, if you already work through an OpenAI-compatible API. In most cases, it is enough to move base_url, the model, and secrets into config, while keeping the SDK, call code, and prompts as they are.

This approach lowers the risk: you change the entry point instead of rewriting the app right before release.

What usually breaks first when changing providers?

The first things to break are usually streaming, tool calling, and error handling. A normal text request may work fine, while a real scenario fails on an empty chunk, a different error format, or an unexpected timeout.

Check long responses, stream breaks, 429, 5xx, and JSON parsing right away. That is where differences show up fastest.

Where should we start so we do not get lost?

Start with a map of real scenarios. Record which tasks run in production, which models handle them, how many tokens are used, and what latency is acceptable for each case.

Then establish a baseline: p95, cost for the same request volume, error rate, and quality on a control sample. Without that, the discussion quickly turns into opinions.

How do we compare the old and new routes fairly?

Run the same set of requests through both the old and new paths with identical settings. Save not only the text response, but also latency, tokens, error codes, and cases where the response was cut off or could not be parsed.

For free-form text, compare meaning, not exact wording. For JSON, check the schema, required fields, and data types.

Do we need shadow launch before switching?

Yes, it is the calmest approach. The user keeps getting the response from the current route, while the new route receives a copy of the request and shows how it behaves under live load.

Shadow mode quickly reveals things you cannot see in a demo: long conversations, rare errors, latency spikes, and odd response formats.

How do we prepare a fast rollback?

Use a flag or routing rule for rollback, not an emergency release. If you need to open the code to return to the old setup, you have already lost time.

Before launch, rehearse the rollback once. The team should know who switches traffic, where to check metrics, and how many minutes the return takes.

What should we do about PII, logs, and data storage?

Decide this before the first migration, not after testing. You need to define what can go out externally, what must be masked, where logs are stored, and who can access them.

For teams in Kazakhstan, this often affects the whole setup from the start. If you need one entry point with in-country storage, audit logs, and PII masking, it is easier to keep those rules at the gateway level.

How do we safely increase traffic after launch?

Do not move the whole flow at once. First give the new route a small share, for example simple and repetitive scenarios, and watch the metrics for at least 30-60 minutes.

If latency, cost, and quality stay stable, increase the share on schedule. Keep the hard cases with long context on the old or stronger route until the check is complete.

Why do we need a single gateway like AI Router?

A single entry point removes chaos. The team can see where each request went, which model answered, where a retry happened, why the system switched, and how much it cost.

If you need an OpenAI-compatible gateway without changing client code, AI Router covers that case. It provides one endpoint for different models and providers, and for teams in Kazakhstan it adds in-country data storage, audit logs, PII masking, and billing in tenge.