LLM Context Trimming Without Losing Meaning: Windows and Summaries

LLM context trimming helps keep a conversation within the token limit. We’ll cover context windows, priorities, conversation summaries, and quick checks.

Why a conversation loses focus

The problem usually starts not with the model, but with the length of the exchange. Every new reply uses tokens, and the context window fills up faster than it seems. Five minutes of live back-and-forth with clarifications, quotes, and log inserts can easily turn into a long tail of text where less than half is actually useful.

The model leans most heavily on the most recent part of the conversation. If you set strict rules, the answer format, and task boundaries at the start, and then add twenty messages with new details, the early conditions get pushed far back. Formally, they are still in the history. In practice, later messages start to crowd them out.

Repetition eats up space especially fast. Polite phrases, duplicate questions, restating what has already been said, and long confirmations like "yes, that’s right, keep going" add very little meaning. For a person, that is ordinary conversational noise. For the model, it is text just like any important instruction.

That is why a bot’s memory often seems worse than it really is. It did not "forget" the task in a human sense. It simply received too much weak signal and too little dense context. As a result, the answer shifts toward the latest question instead of the overall goal of the conversation.

Most often, four kinds of junk fill the context window:

- repeated instructions in the same words

- empty polite phrases

- long quotes from the conversation without selection

- side branches that are no longer needed

Imagine an internal assistant for a bank or retail company. In the first message, it was given rules: do not invent numbers, answer briefly, and keep the table format. Ten turns later, the user started arguing over wording, copying old answers, and adding new exceptions. If you neither trim the history nor write a summary, the model will soon start answering based on whatever is nearest. It will pick the freshest piece of the conversation and miss the older constraints.

So loss of focus is almost always caused not by model "magic," but by poor token management. If the history grows without rules, the conversation drifts even with strong models.

What should stay in the history

If you send the entire log to the model, it spends tokens on noise and is more likely to wander off. The history should keep only what moves the current task forward: what the user wants now, what has already been agreed, and what is still unresolved.

First, keep the goal of the current request. It is better in a short form, without a long conversation around it. If the person rephrased the same request five times, keep the latest and most accurate version.

In most cases, you should keep these details close at hand:

- numbers: budgets, limits, percentages, volumes

- deadlines: launch dates, reporting periods, due dates

- roles: who approves, who writes, who reviews

- hard constraints: do not mention the brand, answer in Russian, keep the reply under 500 words

- latest decisions and open questions

These are the things the model loses first when the context gets full. Then it starts changing numbers, forgetting deadlines, or returning to a dispute that was already settled.

It is useful to keep not the entire chain of discussion, but only the decisions that were made. For example, if the team already decided that the report is for the CFO, the tone should be neutral, and tables are not needed, that is enough. A long argument about why tables did not work can usually be removed.

The rule for unfinished questions is simple. If the next step cannot be taken without the answer, the question stays in the history. If it was incidental and does not affect the outcome, it is better to cut it.

Another common source of noise is greetings, confirmations, and empty clarifications. Phrases like "thanks," "noted," "go ahead," and "try again" rarely carry meaning. Repeats are also unnecessary, especially if the model has already received a more recent formulation.
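The cleanup described above can be sketched in a few lines. This is a minimal sketch, assuming messages are dicts with `role` and `content` keys (the common chat-API shape) and a hand-picked filler list; `FILLER`, `normalize`, and `drop_noise` are hypothetical names, not part of any library.

```python
import re

# Hypothetical filler list; tune it for your own product and languages.
FILLER = {"thanks", "thank you", "noted", "ok", "okay", "go ahead", "try again"}


def normalize(text: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace for comparison."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def drop_noise(messages: list[dict]) -> list[dict]:
    """Drop pure filler messages and keep only the latest copy of a repeated message."""
    seen = set()
    kept = []
    # Walk from newest to oldest so the most recent copy of a repeat survives.
    for msg in reversed(messages):
        key = normalize(msg["content"])
        if not key or key in FILLER:
            continue
        if (msg["role"], key) in seen:
            continue
        seen.add((msg["role"], key))
        kept.append(msg)
    kept.reverse()  # restore chronological order
    return kept


history = [
    {"role": "user", "content": "Summarize the Q3 report for the CFO."},
    {"role": "assistant", "content": "Here is a first draft of the summary."},
    {"role": "user", "content": "Thanks!"},
    {"role": "user", "content": "Summarize the Q3 report for the CFO."},
]
cleaned = drop_noise(history)
```

Exact matching only catches verbatim repeats; rephrased duplicates need a fuzzier comparison or a model-based check.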

A good rule of thumb is this: every piece of history should answer the question, "What breaks if I remove this?" If nothing does, delete it. If a constraint, a number, or an unresolved choice disappears, keep it. Then the context window serves the task instead of storing the entire conversation for its own sake.

How the context window works

The context window is the part of the conversation the model can see in the current request. Anything outside it is effectively gone for the model. So the answer depends not on the full dialogue history, but only on the set of messages you passed in.

That is why teams often confuse storing history with sending history. You may have a full log for a month, but only the relevant slice needs to go into the model. That is what LLM context trimming is: keep everything the answer depends on, and remove the rest.

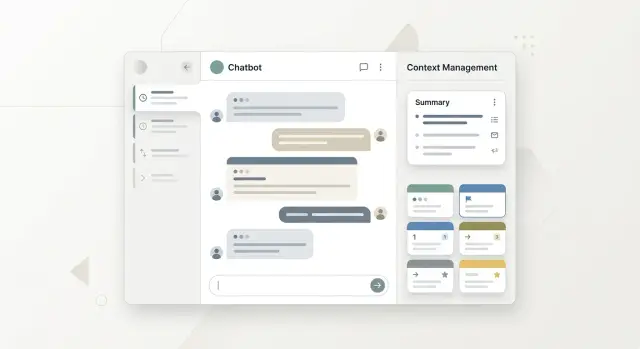

It helps to split context into four layers:

- System rules are best kept separate from the conversation: answer format, prohibitions, tone, and safety requirements.

- The latest replies are usually worth sending in full because they contain the current goal and fresh constraints.

- Facts that must not be lost should be pinned outside the sliding window: contract number, reply language, chosen plan, user role.

- Older branches of the conversation are better compressed into a short summary; otherwise the window fills quickly with details that have already done their job.

A sliding window works simply: with every new message, older parts begin to drop out. If you rely only on this mechanism, the model will forget what mattered ten minutes ago. That is why permanent rules and pinned facts should not be mixed with ordinary chat.
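To see why a bare sliding window is not enough, here is a minimal sketch of one. It assumes the same `role`/`content` message dicts and estimates tokens at roughly four characters each; that heuristic and the function names are assumptions, so use your model's real tokenizer in practice.

```python
def estimate_tokens(text: str) -> int:
    # Rough heuristic: about 4 characters per token. Replace with a real tokenizer.
    return max(1, len(text) // 4)


def sliding_window(messages: list[dict], max_tokens: int) -> list[dict]:
    """Keep the newest messages that fit into max_tokens; everything older drops out."""
    window, used = [], 0
    for msg in reversed(messages):
        cost = estimate_tokens(msg["content"])
        if used + cost > max_tokens:
            break
        window.append(msg)
        used += cost
    window.reverse()
    return window


rule = {"role": "user", "content": "Rules: do not invent numbers, answer briefly, keep the table format."}
chatter = [{"role": "user", "content": "x" * 40} for _ in range(5)]  # ~10 tokens each
window = sliding_window([rule] + chatter, max_tokens=30)
```

Here `window` holds only the last three chatter messages; the opening rule is gone, which is exactly why rules and pinned facts should live outside the window.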

A good example is an internal support chat. The user spent five messages discussing a return, then asked about delivery, and then came back to the return. If you keep only the latest replies, the model can easily drift in the wrong direction. But if there is a short summary nearby like "the customer has already confirmed the order number and is requesting a refund for one item," the answer stays accurate even after the topic changes.

In practice, the setup often looks like this: permanent instructions, then pinned facts, then a short summary of the past, and only after that the latest messages in full. This order helps the model keep the meaning intact and avoid wasting tokens on noise.
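That order can be expressed as a small assembly function. This is a sketch, assuming pinned facts are a plain dict and that the API accepts several `system` messages; some providers accept only one, in which case join the first three parts into a single system message. `build_context` is a hypothetical name.

```python
def build_context(rules: str, pinned: dict[str, str], summary: str,
                  recent: list[dict]) -> list[dict]:
    """Assemble a request: permanent rules, pinned facts, summary, then recent turns in full."""
    messages = [{"role": "system", "content": rules}]
    if pinned:
        facts = "\n".join(f"- {key}: {value}" for key, value in pinned.items())
        messages.append({"role": "system", "content": "Pinned facts:\n" + facts})
    if summary:
        messages.append({"role": "system", "content": "Summary of earlier conversation:\n" + summary})
    messages.extend(recent)  # the latest messages go last, verbatim
    return messages


context = build_context(
    rules="Answer briefly. Do not invent numbers.",
    pinned={"order": "#48173", "reply language": "English"},
    summary="The customer confirmed the order number and requests a refund for one item.",
    recent=[{"role": "user", "content": "And what about the delivery?"}],
)
```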

How to trim history step by step

If you send the full log to the model, it spends tokens on old repeats and extra details. It is better to clean the history before each new request using one short workflow.

- First, define the goal of the current turn in one sentence. Not "continue the conversation," but a concrete action: "prepare a reply to the customer about a return" or "compare two contract options."

- Then mark the facts the answer cannot do without. These are usually numbers, dates, entity names, the chosen format, decisions already made, and any text fragments the model should rely on.

- Compress the older part of the conversation into a short 5-7 line summary. Keep only the meaning: what the user wanted, what you agreed on, what was already checked, and which options were ruled out.

- Check that the window still contains constraints and open tasks. Constraints are often the first to disappear, even though they are what keep the answer within bounds.

- Only then send the request. If there are still too many tokens, cut examples, repeats, and old explanations. Do not cut rules, facts, or unanswered questions.
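The last step, cutting only what is safe to cut, might look like this. A sketch under assumptions: each history item carries a hypothetical `kind` label assigned by your own pipeline, tokens are estimated at about four characters each, and removable items are dropped oldest first.

```python
# Kinds that are never cut: rules, facts, and unanswered questions.
PROTECTED = {"rule", "fact", "open_question"}


def estimate_tokens(text: str) -> int:
    # Rough heuristic: about 4 characters per token. Replace with a real tokenizer.
    return max(1, len(text) // 4)


def trim_to_budget(items: list[dict], budget: int) -> list[dict]:
    """Drop non-protected items (examples, repeats, old explanations), oldest first, until within budget."""
    used = sum(estimate_tokens(item["content"]) for item in items)
    dropped = set()
    for index, item in enumerate(items):
        if used <= budget:
            break
        if item["kind"] in PROTECTED:
            continue
        dropped.add(index)
        used -= estimate_tokens(item["content"])
    # If still over budget, only protected items remain; the caller must decide what to do.
    return [item for index, item in enumerate(items) if index not in dropped]


history = [
    {"kind": "example", "content": "e" * 400},
    {"kind": "rule", "content": "Do not invent numbers."},
    {"kind": "explanation", "content": "x" * 200},
    {"kind": "fact", "content": "Launch date: June 15."},
    {"kind": "open_question", "content": "Which region goes first?"},
]
```

With a budget of 70 estimated tokens only the long example is dropped; with 20 the old explanation goes too, while the rule, fact, and open question always stay.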

Good LLM context trimming feels like an editor’s work. You are not rewriting the entire conversation; you are keeping only what the next answer needs.

A small example. The user spent ten messages discussing an integration and then asked for a short launch plan. You do not need the whole argument about models and pricing for the new request. You need the goal, the chosen stack, the launch date, data constraints, and the list of unresolved points. Everything else can be condensed into a few lines.

If your team uses a single gateway to access models, this order is especially convenient. For example, in AI Router you can change the route to a model, but the rule stays the same: first trim the history down to the working core, then send the request. This saves tokens and noticeably reduces the number of replies where the model drifts off topic.

When a summary is better than the full log

A full log is not always necessary. After a long discussion branch, the model starts spending context window space on repeats, older versions of decisions, and minor digressions. At that point, a conversation summary works better than dozens of past messages in a row.

Usually, a summary is worth making in two cases. The first is when one topic is already closed and the team has moved on. The second is when the conversation has clearly changed direction. First you discussed ticket classification, then you moved to the reply text, limits, and safety rules. For LLM context trimming, this is one of the most useful steps.

It is time to refresh the summary if one of these happened: you chose one option and rejected the others, the topic or subtask changed, new constraints appeared, or the model is asking again about something that was already decided.
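These triggers translate into a simple check. A minimal sketch: how each flag gets set (UI events, rules, or a classifier call) is left open, and `TurnSignals` and `should_refresh_summary` are hypothetical names.

```python
from dataclasses import dataclass, fields


@dataclass
class TurnSignals:
    """Per-turn flags matching the refresh triggers; your pipeline decides how to detect them."""
    option_chosen: bool = False          # one option picked, the others rejected
    topic_changed: bool = False          # the topic or subtask changed
    new_constraint: bool = False         # a new constraint appeared
    reasked_settled_point: bool = False  # the model asked again about something already decided


def should_refresh_summary(signals: TurnSignals) -> bool:
    """Refresh the summary if any trigger fired; otherwise leave it untouched."""
    return any(getattr(signals, f.name) for f in fields(signals))
```

A turn with `topic_changed=True` triggers a refresh, while a turn with no flags set leaves the summary as it is.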

But the raw log should not always be thrown away. If exact wording matters, keep the original conversation and, when needed, add the relevant fragment back into the context window. This applies to legal wording, system prompts, SQL queries, API parameters, numbers, deadlines, and promises to customers. A summary does not replace the source. It only helps the current dialogue stay focused.
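Keeping the raw log pays off when you need the original wording back. A minimal sketch, assuming a plain case-insensitive substring match over stored messages; a real system might use full-text or vector search instead. `exact_fragments` is a hypothetical helper.

```python
def exact_fragments(log: list[dict], terms: list[str], limit: int = 3) -> list[dict]:
    """Return up to `limit` most recent original messages that mention any term, unchanged."""
    lowered = [term.lower() for term in terms]
    hits = [msg for msg in log if any(term in msg["content"].lower() for term in lowered)]
    return hits[-limit:]


log = [
    {"role": "user", "content": "Query: SELECT id FROM orders WHERE status = 'paid'"},
    {"role": "assistant", "content": "Noted, I will use that query."},
    {"role": "user", "content": "We promised the customer a reply within 24 hours."},
]
```

The returned fragments go back into the context window verbatim, next to the summary, rather than being paraphrased.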

A common mistake is making the summary too broad. A phrase like "we decided to improve the answer" is almost useless. The model needs practical meaning: "the answer should be no longer than 500 characters, must not diagnose, and should advise seeing a doctor if there are warning signs." Such a note takes little space but preserves the agreement.

It is better to update the summary after each important decision, not only at the end of a long session. That keeps the bot’s memory steady, without jumps or losses. If there were no new decisions, there is nothing to rewrite.

A good rule is simple: keep the full log for accuracy, and the summary for work. After 30-40 messages in an active window, 6-8 lines with the goal, constraints, decisions made, and open questions are often enough.

A real-world example

Imagine an online store support chat. The customer first says they want to return a product because the size does not fit. In the first messages, the model needs specific details: order number, purchase date, return period, and the rules for that product category. It is also useful to keep the promised response time nearby so the bot does not invent it again.

A few turns later, the conversation changes. The agent asks for the order contents, and the customer decides to keep one item and return only the other. They also want to change the delivery address for the part of the order that has not been shipped yet.

If you put the entire log into the context window, the model may latch onto the old goal. It will keep discussing the return, even though the conversation has already moved elsewhere. This happens often: early messages take up a lot of space and pull the answer backward.

After ten turns, the history usually accumulates noise. There are greetings, repeated order numbers, apologies, long customer explanations, and agent phrases like "I’ll check now." For the next answer, most of that is not needed. LLM context trimming works especially well here: it removes the repeats and keeps the facts that determine the next action.

What a working summary looks like

Instead of the full log, the system only needs to send a short note:

- order #48173

- return request for one item is being processed

- delivery address for the remaining part of the order has been changed

- agent promised to confirm the changes by 18:00

That summary is enough for a new agent or the next model call to understand what is already settled and what still needs an answer. It does not need to read the whole conversation and figure out where the customer changed their mind.
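Kept as structured data, such a summary is easy to update and render. A sketch, assuming a split into settled and pending items suits your tickets; `TicketSummary` is a hypothetical class, and the values come from the example above.

```python
from dataclasses import dataclass, field


@dataclass
class TicketSummary:
    order_id: str
    settled: list[str] = field(default_factory=list)  # already done or agreed
    pending: list[str] = field(default_factory=list)  # still needs an answer or action

    def render(self) -> str:
        """Render a short note for the next agent or model call."""
        lines = [f"Order: {self.order_id}"]
        lines += [f"Settled: {item}" for item in self.settled]
        lines += [f"Pending: {item}" for item in self.pending]
        return "\n".join(lines)


summary = TicketSummary(
    order_id="#48173",
    settled=["delivery address for the unshipped part has been changed"],
    pending=["return request for one item is being processed",
             "agent promised to confirm the changes by 18:00"],
)
print(summary.render())
```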

This approach helps both people and bots. A person spends less time getting up to speed. The model is less likely to confuse an old request with the current one and does not waste tokens on unnecessary details. When a team handles many similar requests, this kind of bot memory usually gives steadier answers than keeping the full chat forever.

Where teams go wrong

Teams usually break the conversation not in the model itself but in the memory rules. They set a simple token limit, cut off old messages, and think that is enough. In practice, this removes not the noise but the semantic anchors: why the user came, what was already forbidden, and which conditions must not be broken.

Trimming by length alone almost always skews what survives, because it treats every token as equally important. Two short messages can matter more than ten long ones. The phrase "do not send the client a draft without approval" takes little space, but if it disappears from the context window, the mistake can be costly.

People often cut out negatives, exceptions, and prohibitions because they seem minor. That is one of the most painful mistakes. The model handles the general direction well, but these details completely change the answer: "do not call after 6 p.m.," "do not mention the diagnosis," "except for corporate plans." If the team keeps only the topic of the conversation and loses the constraints, the bot starts answering confidently and incorrectly.
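One way to keep such details from being trimmed is to detect them and pin them. A rough sketch, assuming an English keyword pattern: `CONSTRAINT_PATTERN` is hypothetical and will miss paraphrases, so a classifier or an explicit "pin" action in the UI may work better.

```python
import re

# Hypothetical markers of prohibitions and exceptions; extend for your domain and languages.
CONSTRAINT_PATTERN = re.compile(
    r"\b(do not|don't|never|must not|except|without approval)\b", re.IGNORECASE
)


def extract_constraints(messages: list[dict]) -> list[str]:
    """Return message texts that look like prohibitions or exceptions, so they can be pinned."""
    return [msg["content"] for msg in messages if CONSTRAINT_PATTERN.search(msg["content"])]


chat = [
    {"role": "user", "content": "Do not call the client after 6 p.m."},
    {"role": "user", "content": "The client has a network of 12 branches."},
    {"role": "user", "content": "The discount applies to all plans except for corporate plans."},
]
```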

Another problem is mixing user facts with model guesses. The user said they have a network of 12 branches. That is a fact. The model guessed they need a centralized SLA. That is a hypothesis. If both end up in the summary as equals, the next version of the answer will be built on a guess as if it were truth.
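Recording who asserted each item makes the split explicit. A minimal sketch, assuming every note is tagged with its source when it is written; `Note` and `render_summary` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Literal


@dataclass
class Note:
    text: str
    source: Literal["user", "system", "model"]  # who asserted it


def render_summary(notes: list[Note]) -> str:
    """List user- and system-confirmed facts first; model guesses go under open questions."""
    facts = [n.text for n in notes if n.source in ("user", "system")]
    guesses = [n.text for n in notes if n.source == "model"]
    lines = ["Confirmed facts:"] + [f"- {text}" for text in facts]
    if guesses:
        lines += ["Open questions (not confirmed):"] + [f"- {text}" for text in guesses]
    return "\n".join(lines)


notes = [
    Note("The user has a network of 12 branches.", "user"),
    Note("The user may need a centralized SLA.", "model"),
]
print(render_summary(notes))
```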

The summary itself is often handled badly too. Some teams rewrite it from scratch every turn. That causes them to lose stable rules, change wording, and slowly introduce drift. It is better to update the summary in parts: permanent facts separately, working agreements separately, temporary tasks separately.
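Updating in parts can be as simple as replacing one section and copying the rest unchanged. A sketch, assuming a summary stored as a dict with three hypothetical section names.

```python
SECTIONS = ("permanent_facts", "agreements", "current_tasks")


def update_section(summary: dict[str, list[str]], section: str,
                   items: list[str]) -> dict[str, list[str]]:
    """Return a new summary where only `section` changes; other sections are copied as-is."""
    if section not in SECTIONS:
        raise ValueError(f"unknown section: {section}")
    updated = {name: list(summary.get(name, [])) for name in SECTIONS}
    updated[section] = list(items)
    return updated


summary = {
    "permanent_facts": ["Reply in English."],
    "agreements": ["The report is for the CFO; neutral tone; no tables."],
    "current_tasks": ["Draft the introduction."],
}
new_summary = update_section(summary, "current_tasks", ["Draft the conclusion."])
```

Because the stable sections are copied rather than regenerated, their wording cannot drift from one turn to the next.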

There is also a quieter mistake: old agreements that no longer apply are carried into a new request. The user changed the goal, chose a different answer format, or removed a previous restriction, but the system keeps living by the old rules. As a result, the memory looks full, but it is already outdated.
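A simple guard is to key every agreement by topic, so that a newer decision replaces the old one and a lifted restriction disappears. A sketch, assuming decisions arrive oldest first as `(topic, decision)` pairs, with `None` meaning "no longer applies"; the function name is hypothetical.

```python
from typing import Optional


def current_agreements(decisions: list[tuple[str, Optional[str]]]) -> dict[str, str]:
    """Replay decisions oldest first: the latest one per topic wins, None removes the topic."""
    latest: dict[str, str] = {}
    for topic, decision in decisions:
        if decision is None:
            latest.pop(topic, None)  # the user lifted this agreement
        else:
            latest[topic] = decision
    return latest


decisions = [
    ("format", "table"),
    ("tone", "neutral"),
    ("format", "bullet list"),     # the user changed the format
    ("length_limit", "500 words"),
    ("length_limit", None),        # the user removed the restriction
]
```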

The check here is simple. Before each history trim, ask: is this a fact, a constraint, or just part of the conversation? If it is a fact or a restriction, keep it. If it is an intermediate version, a guess, or an old decision, it can often be removed without losing meaning.

Quick check before sending the request

Before sending a request, it helps to spend half a minute on a short check. This habit often saves a conversation from strange answers, lost decisions, and wasted tokens.

If the trimming mechanism is already set up, the problem is usually not the mechanism itself, but small mistakes. The model gets a short but messy history and confidently continues down the wrong path.

- The first line should immediately explain what needs to be done now, without a long introduction.

- Facts that cannot be distorted should stay in the window: numbers, dates, limits, statuses, amounts, deadlines.

- The last accepted decision should be visible to the model. If the team already chose option B, there is no need to compare A and B again.

- The summary should contain only confirmed facts, without guesses or convenient conclusions.

- Leave enough token room for the answer. If the window is almost full, the model will start skipping details or cut the answer off halfway.
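Most of this checklist can be automated before each call. A sketch under assumptions: facts and the last decision are checked by exact substring match, tokens are estimated at about four characters each, and the function name and parameters are hypothetical. Checks that need judgment, such as whether the summary contains guesses, still need a human or a separate review step.

```python
def estimate_tokens(text: str) -> int:
    # Rough heuristic: about 4 characters per token. Replace with a real tokenizer.
    return max(1, len(text) // 4)


def presend_issues(messages: list[dict], required_facts: list[str], last_decision: str,
                   context_limit: int, answer_reserve: int) -> list[str]:
    """Return a list of problems; an empty list means the request looks safe to send."""
    text = "\n".join(msg["content"] for msg in messages)
    issues = [f"missing fact: {fact}" for fact in required_facts if fact not in text]
    if last_decision and last_decision not in text:
        issues.append(f"missing last decision: {last_decision}")
    used = sum(estimate_tokens(msg["content"]) for msg in messages)
    if used + answer_reserve > context_limit:
        issues.append(f"not enough room for the answer: {used} + {answer_reserve} > {context_limit}")
    return issues


messages = [
    {"role": "system", "content": "Pinned facts: budget 2 million tenge; deadline June 15."},
    {"role": "user", "content": "Draft the launch plan."},
]
```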

A small example shows this well. Five messages ago, the user approved a budget of 2 million tenge and a deadline of June 15. The short memory kept only the general project goal, and the budget decision dropped out. In response, the model suggests a new plan for 3 million and a different deadline. The logic is formally sound. It just did not see the thing that was already settled for you.

Check summaries separately after long conversation branches. If a line like "the user probably wants a faster launch" appears, that is already a guess. It is better to write it more narrowly: "the launch date was discussed, but no final decision was made."

This kind of control is especially useful in production, where every request has a cost. If your team works through airouter.kz, it is helpful to check both the request route and the limits separately so the context window does not overflow and the request does not fail.

What to do next

If the overall idea is clear, do not move it into production right away. First, turn LLM context trimming into a short set of checks where both cost and answer quality are visible.

Start with measurements. Take several real conversations and count token usage before and after the summary. Look not only at the average, but also at the long tails. That is where budget leaks fastest.
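Counting before and after can start as simply as this. A sketch, assuming you already have per-conversation token counts (from your tokenizer or the usage data your API returns); it reports the mean, the 90th percentile, and the maximum so the long tail stays visible. `statistics.quantiles` needs at least two data points on most Python versions.

```python
import statistics


def savings_report(before: list[int], after: list[int]) -> dict[str, float]:
    """Summarize token counts per conversation before and after summarization."""
    report = {}
    for label, counts in (("before", before), ("after", after)):
        report[f"mean_{label}"] = statistics.mean(counts)
        # 9 cut points for n=10; the last one is the 90th percentile.
        # The inclusive method keeps the estimate within the observed range.
        report[f"p90_{label}"] = statistics.quantiles(counts, n=10, method="inclusive")[-1]
        report[f"max_{label}"] = max(counts)
    return report


report = savings_report(before=[1000, 1200, 5000], after=[400, 500, 900])
```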

Then check whether quality dropped. Run the same set of conversations through two or three models. Evaluate simple things: does the model remember the task, does it mix up facts from earlier turns, does it lose constraints, does it start answering too generally.

For this kind of test, a short plan is enough:

- collect 10-15 typical conversations from the product

- run them with the full log and with a summary

- compare token count, latency, and answer quality

- separately test long chains of 20-30 turns

- record when the system updates the summary
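One way to wire up such a comparison is to run the same history through two context builders and score each answer on facts it must mention. A sketch under assumptions: `ask_model` stands in for your real client call, the `echo_model` stub here only repeats its input, the fact check is a plain substring match, and all names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

Messages = list[dict]


def estimate_tokens(text: str) -> int:
    # Rough heuristic: about 4 characters per token. Replace with a real tokenizer.
    return max(1, len(text) // 4)


@dataclass
class RunResult:
    tokens: int  # estimated prompt size
    passed: int  # how many required facts the answer mentioned
    total: int


def run_variant(history: Messages, build: Callable[[Messages], Messages],
                ask_model: Callable[[Messages], str], must_mention: list[str]) -> RunResult:
    """Build the context one way, ask the model, and score the answer on required facts."""
    context = build(history)
    answer = ask_model(context).lower()
    tokens = sum(estimate_tokens(msg["content"]) for msg in context)
    passed = sum(1 for fact in must_mention if fact.lower() in answer)
    return RunResult(tokens=tokens, passed=passed, total=len(must_mention))


def echo_model(context: Messages) -> str:
    # Placeholder for a real API call: it just repeats the context it was given.
    return " ".join(msg["content"] for msg in context)


history = [
    {"role": "user", "content": "The budget is 2 million tenge."},
    {"role": "assistant", "content": "x" * 400},
    {"role": "user", "content": "Draft the launch plan."},
]
full = run_variant(history, lambda h: h, echo_model, ["2 million tenge"])
last_only = run_variant(history, lambda h: h[-1:], echo_model, ["2 million tenge"])
```

With the echo stub, the last-message-only variant is cheaper but loses the budget; swapping in a real client and a summary-based `build` function turns this into the actual full-log versus summary comparison.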

Long conversations should be tested separately. On short examples, almost everything looks fine, and the problems show up later: the model forgets a decision, asks again about something already closed, or clings to an old version of the task.

After testing, set one rule for the whole team. For example, update the summary every N turns, after a topic change, or after any step where the user changes the goal. If every service has its own rule, chaos and hard-to-reproduce bugs will appear quickly.

If you run these tests through an OpenAI API-compatible gateway, comparisons are usually simpler. In AI Router, you can change the model and provider without rewriting code, SDKs, or prompts, so it is easier for the team to see where the conversation summary works well and where the model starts to lose the meaning.

A good rule of thumb is simple: after implementation, the system should use fewer tokens, keep the thread of thought over long stretches, and give almost the same answers as the full log. If one of those points does not hold, the history trimming rule is still too rough.

Frequently asked questions

What is LLM context trimming in simple terms?

It’s a quick cleanup of the conversation before a new request. You keep the goal, constraints, facts, and latest decisions, and remove repeats, polite chatter, and old side branches.

When is it better to keep the full log instead of a summary?

A full log is useful when exact wording matters: contract terms, SQL, API parameters, amounts, deadlines, or promises to a customer. For normal working chat, a short summary and the latest messages are usually enough.

What must be preserved in the conversation history?

Keep anything that would break the next answer if removed: the current goal, numbers, dates, roles, restrictions, the chosen format, and open questions. If a fragment changes nothing in the answer, you can remove it.

What can be removed first with little risk?

Start by cutting greetings, confirmations, repeated questions, long quotes with no new information, and closed side threads. That text takes up space but helps the model very little.

How can you tell the model has lost focus?

Usually it starts ignoring older restrictions, changing numbers, forgetting a deadline, or going back to a decision that was already closed. If the answer latches onto the latest message and loses the main goal, the context is built poorly.

How often should the history summary be updated?

Update it after an important decision, a topic change, or when the user changes the goal. If the conversation is steady and there are no new agreements, you do not need to rewrite the summary at every turn.

Is it enough to pass only the latest messages?

No, that is not enough for long conversations. The latest messages help, but without pinned facts and a short summary, the model quickly loses older constraints.

How is a summary different from a full log?

The log keeps the original wording and the full flow of the conversation, while the summary keeps only the working meaning for the next step. The log is for accuracy, and the summary is for saving tokens and keeping the answer on track.

How do you avoid mixing user facts with model guesses?

Write down only what the user confirmed or the system checked on its own. If it is just a model guess, mark it as an open question or leave it out entirely.

What should you check quickly before a new request?

Before sending the request, check four things: is the current task clear, are numbers and deadlines visible, is the last decision still there, and is there enough token space left for the answer. This takes less than a minute and often prevents odd replies.