KV-cache Reuse in Long Conversations

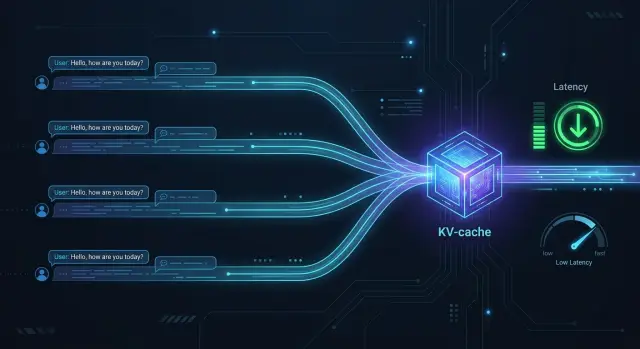

KV-cache reuse speeds up long conversations when requests share the same opening history. We’ll cover the setup, risks, metrics, and checks.

Why long conversations start to slow down

The problem starts before the answer is generated. When a user sends a new message, the model usually processes the entire conversation history again to rebuild context. If the chat already has dozens of turns, the model keeps spending time on the same prefix over and over.

As a result, latency grows even before the first token. The user sees a pause and thinks the model is “thinking for a long time,” although in many cases it is simply reprocessing old messages, system instructions, and previous answers. The longer the history, the longer this stage takes.

A short new question does not help much. Even if the person wrote two words, the model still needs the previous context. Otherwise it would lose the meaning of the conversation, answer rules, tone constraints, and details that have already been discussed.

In real operations, this is especially annoying in support. The first messages often look the same: a greeting, a policy explanation, a request to confirm details, or a template for an agent or bot. Then the customer asks a short follow-up question, but the system still recalculates the entire long opening. If there are many such conversations, the extra work adds up quickly.

The consequences are straightforward: the answer starts later, the GPU or external provider spends more compute on repetition, the queue grows during peak hours, and inference costs rise even without any new useful information.

This problem is especially noticeable in production, where many similar scenarios repeat. In banking, retail, or SaaS, thousands of requests can start almost the same way. If you recalculate the shared history prefix every time, the infrastructure pays for the same thing many times.

That is where KV-cache reuse stops looking like a small optimization. It becomes a simple way to save time and money. The model does not become “smarter,” but it stops doing unnecessary work where no new information has appeared yet.

What KV-cache stores

KV-cache does not store “memory” in the human sense, and it does not store a ready-made answer. It saves intermediate numerical states that the model has already computed for the tokens it has read. In simpler terms, the model parses the beginning of the conversation once and keeps the working data so it does not have to perform the same calculation again.

The letters KV stand for key and value, two of the tensors used by the attention mechanism in a transformer. For each token it has already read, the model computes keys and values at every layer. Later tokens attend to them, so earlier context is taken into account without recalculating the entire history.

That is why KV-cache reuse only helps where requests share a common prefix. If the first 800 tokens of two requests match exactly, the model can take the ready-made base for those 800 tokens and continue from the point where the difference begins. If the difference appears in the first lines, there is almost no benefit.
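To make the prefix rule concrete, here is a minimal sketch that counts how many leading tokens two requests share. The token ids are made up for illustration; in a real setup they would come from the model's own tokenizer.

```python
from itertools import takewhile


def shared_prefix_len(a: list[int], b: list[int]) -> int:
    """Count the leading token ids that two requests have in common."""
    matching = takewhile(lambda pair: pair[0] == pair[1], zip(a, b))
    return sum(1 for _ in matching)


# Hypothetical token ids: an 800-token shared opening, then different tails.
opening = list(range(800))
request_a = opening + [9001, 9002]
request_b = opening + [7001]

print(shared_prefix_len(request_a, request_b))  # 800
```

Only those leading tokens can be served from the cache. Everything after the first mismatch has to be computed again, even if later tokens happen to match.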

A good everyday example is a support chat. Many conversations start the same way: a system instruction, response rules, product description, a greeting template, a policy block, and output format. If that part does not change, you can calculate it once and then reuse it.

This cache has strict limits. It does not move between different models. Even similar models keep separate states because their internal layers and weights are different. Usually, the cache also cannot be shared across different versions of the same model. If a team sends the same prefix to one model and then to another, each one will calculate its own KV-cache separately.

This matters a lot for routing. The same conversation text does not automatically mean the same cache. The tokens, the model, and its version must all match.

It is better to think of KV-cache as a saved “draft of computations” for the exact beginning of a conversation. It does not replace context and does not shorten history by itself. It simply removes repeated calculation where the history is truly identical.

When reuse makes a noticeable difference

KV-cache reuse works best when many requests begin the same way. Usually that means a long system prompt, a set of answer rules, output format, and service instructions that hardly change from one conversation to another. The model has already processed this part once and does not spend the same time on it in later requests.

The most common case is a chat where the user only adds to the end of the history. The foundation stays the same: the assistant role, safety policy, answer template, and the first few messages. As the conversation grows and only the last turns change, the benefit becomes obvious very quickly. The longer the shared prefix, the more you save on each new turn.

In practice, the effect is usually clear when:

- the system prompt and opening instruction block match;

- the shared part of the history is much longer than the new messages;

- the response cannot afford an extra 300–800 ms spent reprocessing the start;

- requests arrive often enough for the cache to stay warm.

Request frequency matters a lot here. If similar requests arrive every few seconds or minutes, the cache lives long enough to help. If one request comes in the morning and the next one with the same prefix only comes in the evening, it is hard to count on the same gain.

A good example is support, where every conversation starts the same way: tone rules, a ban on making extra promises, answer format, and a short product summary. The customer sends a new message, and the assistant sees almost the same context plus a fresh tail. In long threads, this can remove a noticeable part of the delay, especially on models where the prefill stage is expensive by itself.

If the shared prefix is short or keeps changing, the cache will do almost nothing. If the prefix is stable, the sessions are long, and the request flow is steady, KV-cache reuse pays off quickly.

How to build a shared-prefix setup

The setup only works when you clearly split the history into two parts: a fixed prefix and a new tail. The prefix usually includes the system instruction, answer rules, service roles, greeting, and the messages that repeat across many conversations.

The variable part starts where the user adds something new: a fresh question, new data, attachments, search results, or the agent’s replies. If you mix that with the fixed block, the cache quickly breaks into small variants and brings almost no value.

It helps to keep the template as a separate versioned object. Even an extra space, a different role order, or a field name change can produce a different token sequence. That is why it is better to explicitly fix the template version, role format, and serialization rules.

A basic setup looks like this:

- Build the prefix using the same template every time.

- Tokenize it with the same tokenizer the model uses.

- Compute the hash after tokenization, not from the raw text.

- Store the KV-cache bound to the model, template version, and context length.

- In the new request, add only the tail.

The hash after tokenization matters for a simple reason: two similar texts can split into tokens differently. For the cache, the tokens are what count. If you use several models, do not share the same cache between them, even if the prefix text looks identical.

In practice, cache entries are usually tied to fields such as model_id, tokenizer_version, template_version, prefix_hash, and context_limit. Sometimes a region is added if inference runs in different environments. For teams that need to keep data inside the country, that is a common measure.
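One way to sketch such a cache key is shown below. The field names follow the list above. The `PrefixCacheKey` class and the `hash_token_ids` helper are illustrative names, not part of any particular library, and the token ids are assumed to come from the model's own tokenizer.

```python
import hashlib
from dataclasses import dataclass


def hash_token_ids(token_ids: list[int]) -> str:
    """Hash the token sequence itself, not the raw text it came from."""
    digest = hashlib.sha256()
    for token_id in token_ids:
        # Assumes non-negative ids that fit in 4 bytes.
        digest.update(token_id.to_bytes(4, "little"))
    return digest.hexdigest()


@dataclass(frozen=True)
class PrefixCacheKey:
    model_id: str
    tokenizer_version: str
    template_version: str
    prefix_hash: str
    context_limit: int


prefix_ids = [101, 2023, 2003, 1037, 102]  # hypothetical token ids
key_a = PrefixCacheKey("model-a", "tok-1", "tpl-7", hash_token_ids(prefix_ids), 8192)
key_b = PrefixCacheKey("model-b", "tok-1", "tpl-7", hash_token_ids(prefix_ids), 8192)

print(key_a == key_b)  # False: same tokens, different model
```

Because the dataclass is frozen, the key is hashable and can be used directly as a dictionary key or serialized into a string for an external store.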

You also need a TTL. Templates change, system instructions get updated, and a stale cache entry can quietly serve state that was computed for an outdated prompt, or break reproducibility. So set a lifetime and do an explicit reset whenever the template, roles, or prompt-building logic changes.
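A minimal sketch of TTL plus explicit reset might look like this. It is a toy in-memory store: the `state` value stands in for the real KV tensors, which normally live inside the inference engine, and the key layout `(model_id, template_version, prefix_hash)` is an assumption made for the example.

```python
import time


class PrefixCache:
    """Toy in-memory store with a lifetime per entry."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, state)

    def put(self, key, state):
        self._entries[key] = (self._clock() + self._ttl, state)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, state = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # expired: drop it and report a miss
            return None
        return state

    def reset(self, template_version=None):
        """Drop everything, or only the entries of one template version."""
        if template_version is None:
            self._entries.clear()
            return
        self._entries = {
            key: value
            for key, value in self._entries.items()
            if key[1] != template_version
        }
```

The injectable `clock` is there so that expiry can be tested without waiting for real time to pass.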

Example: a support chat with the same opening

A support bot often has the same opening for every new session. It starts with a system instruction: how to address the customer, what topics cannot be promised, when to involve a human, and how to write briefly and calmly. This is often followed by a greeting template, safety rules, and a few service tags. Together, that can amount to several hundred tokens that the model sees again and again.

Imagine a bank or e-commerce chat. One customer asks about a refund, another about delivery, and a third about changing a phone number. The questions are different, but the opening is almost the same for everyone. If you recalculate that prefix from scratch for every conversation, the model spends time on a part of the history it already knows.

That is where KV-cache reuse helps. The team processes the shared prefix once, saves it, and then reuses it for new requests. When a fresh customer message arrives, the system does not recalculate the first hundreds of tokens; it processes only the new message and builds the answer on top of the ready state.

The user notices this right away. The first answer token appears faster, and when the queue is long, the difference no longer feels small. If the bot handles thousands of similar requests per day, the savings on the repeated opening add up quickly.

The rule of thumb is simple: the longer the shared prefix and the more often it repeats, the bigger the gain. If you have a standard 500-token opening and a short 30-token customer question, recalculating the entire input from scratch is simply inefficient.
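The arithmetic behind that example is short enough to check directly. The sketch below computes the share of input tokens that a cache hit removes from prefill. It counts tokens only, so treat it as a rough estimate rather than a latency prediction.

```python
def prefill_share_saved(prefix_tokens: int, tail_tokens: int) -> float:
    """Fraction of input tokens that do not need to be prefilled again."""
    return prefix_tokens / (prefix_tokens + tail_tokens)


share = prefill_share_saved(prefix_tokens=500, tail_tokens=30)
print(f"{share:.1%}")  # 94.3%
```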

How to avoid mixing in someone else’s data

The most common mistake is simple: the team puts not only system instructions into the shared prefix, but also pieces of live conversation. After that, KV-cache reuse no longer speeds things up safely; instead, it creates a risk of leaking context between people or companies.

Only put into the shared prefix what is the same for all requests within one narrow group. That can include answer rules, product description, the general conversation structure, and standard disclaimers. A customer’s name, contract number, order history, diagnosis, delivery address, or any other personal fragment should not go there.

A good rule is: if you would hesitate to show the text to another user, it should not live in the shared prefix.

Even a safe prefix is better split into separate segments. The same template in Russian and Kazakh should be stored separately. The same applies to different customer environments and different prompt versions. If a bank and a retailer use similar opening text, the cache should still live separately.

Access roles should not be mixed either. The prefix for a regular agent, an internal analyst, and an administrator must be stored in different areas. Otherwise, the model may receive extra instructions or data that belong to broader permissions.
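One simple way to enforce this separation is to make the scope part of the cache key itself. The sketch below is an illustration under assumed names: `ScopedPrefixKey` and its fields are hypothetical, and the string value stands in for real cached state.

```python
from typing import NamedTuple


class ScopedPrefixKey(NamedTuple):
    tenant: str
    language: str
    role: str
    prompt_version: str
    prefix_hash: str


cache: dict[ScopedPrefixKey, str] = {}

# Hypothetical case: a bank and a retailer use the same opening text,
# so the prefix hash is identical.
shared_hash = "abc123"
bank = ScopedPrefixKey("bank", "ru", "agent", "v3", shared_hash)
retail = ScopedPrefixKey("retail", "ru", "agent", "v3", shared_hash)

cache[bank] = "kv-state-for-bank"
print(retail in cache)  # False: same text, separate cache entry
```

With this layout a lookup can only hit an entry created for the same tenant, language, role, and prompt version, regardless of how similar the text is.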

A short TTL is often more useful than complex cleanup rules. For sensitive scenarios, it is better to keep the cache for minutes rather than hours. Yes, there will be fewer hits. But the risk that old context accidentally survives long enough to reach someone else’s request will also be lower.

You also need separate event tracking. Log cache hits and resets for different reasons: the TTL expired, the template version changed, the customer environment changed, or the request went to a different language flow. Then the team will see not only latency savings, but also the risky parts of the setup.
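A small counter is enough to start with. The sketch below uses the reasons named above; the `CacheEvent` and `CacheEventLog` names are made up for the example, and a production setup would more likely send these events to an existing metrics system.

```python
from collections import Counter
from enum import Enum


class CacheEvent(Enum):
    HIT = "hit"
    MISS_TTL_EXPIRED = "miss_ttl_expired"
    MISS_TEMPLATE_CHANGED = "miss_template_changed"
    MISS_ENVIRONMENT_CHANGED = "miss_environment_changed"
    MISS_LANGUAGE_FLOW = "miss_language_flow"


class CacheEventLog:
    def __init__(self):
        self.counts = Counter()

    def record(self, event: CacheEvent) -> None:
        self.counts[event] += 1

    def hit_rate(self) -> float:
        total = sum(self.counts.values())
        return self.counts[CacheEvent.HIT] / total if total else 0.0

    def miss_reasons(self) -> dict:
        """Miss counts keyed by reason, without the hits."""
        return {
            event.value: count
            for event, count in self.counts.items()
            if event is not CacheEvent.HIT
        }
```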

A small example: in a telecom support chat, you can reuse the common opening with tone rules, a list of allowed actions, and the answer format. But once the user writes a phone number or national ID, that piece should live outside the shared prefix.

If you build this kind of setup through AI Router, it makes sense to enable PII masking and audit logs on the gateway side. That does not replace cache separation, but it helps you catch prefix-building mistakes faster.

Where the cache breaks

KV-cache most often breaks not because of the model, but because of small differences at the start of the request. On paper the prefix looks the same, but in real traffic it is already different. That is enough for the cache to miss and for the model to recalculate everything from scratch.

The first common mistake is putting data into the shared prefix too early when that data changes on every request. It could be the current time, a session ID, a random marker, a trace id, or even a date in the system prompt. If such a token appears in the first lines, the requests no longer share the same beginning.

The second problem happens all the time: the team changes the system prompt and forgets to update the cache version. The cache entry keeps its old name, but it was computed for a different text. In the end, you either lose the speedup or get hard-to-notice answer errors.

Sometimes it is not the meaning that breaks the cache, but the format. One service adds an extra space at the end of the line. Another changes the role order. A third serializes the same block with different punctuation. To a person it looks like the same text; to the tokenizer, it is not.

Another risk is tied to model updates. If the provider changes the model version, the old KV-cache should be considered invalid right away. Even with the same prompt, the internal representations may no longer match.

Another common failure comes from history trimming. As long as every conversation has the same opening, everything works well. But then one service cuts the first messages by length, and the shared-prefix boundary shifts. After that, some requests hit the cache while others do not.
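One way to keep the boundary stable is to trim only the turns that come after the fixed prefix. The sketch below drops the oldest variable turns first and never touches the prefix. The whitespace token counter is a stand-in for the real tokenizer and is only there to keep the example self-contained.

```python
def count_tokens(text: str) -> int:
    # Stand-in for the model's tokenizer: counts whitespace-separated words.
    return len(text.split())


def trim_history(prefix: list[str], turns: list[str], max_tokens: int) -> list[str]:
    """Keep the fixed prefix intact and drop the oldest turns to fit the budget."""
    budget = max_tokens - sum(count_tokens(m) for m in prefix)
    if budget < 0:
        raise ValueError("the fixed prefix alone exceeds the token budget")
    kept, used = [], 0
    for turn in reversed(turns):  # walk from the newest turn backwards
        cost = count_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost
    kept.reverse()
    return prefix + kept


history = trim_history(["sys rules here"], ["a b", "c d e", "f"], max_tokens=7)
print(history)  # ['sys rules here', 'c d e', 'f']
```

After trimming, only the fixed prefix is guaranteed to match earlier requests, which is exactly the part the cache is supposed to cover.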

For verification, five checks are usually enough:

- keep time, session id, and random fields outside the shared prefix;

- version the system prompt and include the version in the cache key;

- normalize spaces, line breaks, and role order;

- reset the cache when the model changes;

- check the history-trimming logic separately.
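The normalization check can be sketched as a single canonical serializer that every service calls before building the prefix. The `<role>` tag format and the rule that system messages go first are assumptions made for this example; the real format depends on the model's chat template.

```python
def canonical_prefix(messages: list[dict]) -> str:
    """Serialize prefix messages the same way no matter which service sent them."""
    normalized = []
    for message in messages:
        role = message["role"].strip().lower()
        content = message["content"].replace("\r\n", "\n").replace("\r", "\n")
        # Drop trailing spaces on each line and blank space around the block.
        content = "\n".join(line.rstrip() for line in content.split("\n")).strip()
        normalized.append((role, content))
    # Stable sort: system messages first, the rest keep their relative order.
    normalized.sort(key=lambda item: 0 if item[0] == "system" else 1)
    return "".join(f"<{role}>\n{content}\n" for role, content in normalized)


web = [
    {"role": "system", "content": "Be brief. \r\nNo promises."},
    {"role": "assistant", "content": "Hello!"},
]
mobile = [
    {"role": "assistant", "content": "Hello!\n"},
    {"role": "System", "content": "Be brief.\nNo promises."},
]

print(canonical_prefix(web) == canonical_prefix(mobile))  # True
```

Reordering is only safe for the fixed instruction block. Live conversation turns should keep the order in which they happened.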

If the hit rate suddenly drops, start by comparing the first tokens of two requests one by one, or their serialized prefixes byte by byte. In most cases, the difference shows up within a few minutes.

How to measure the gain instead of guessing

Do not rely on the feeling that it “got faster.” Compare two identical requests. The first run goes without cache, and the second uses the already prepared shared history prefix. You need to compare them on the same model, with the same provider, the same context size, and the same generation settings. If you change several things at once, the numbers stop making sense.

A simple test set of long conversations works best, especially where the beginning of the history often matches. For example, in support chat the system prompt, safety rules, product description, and the first user turns are the same, while only the current question changes later. In those scenarios, KV-cache reuse shows a fair result.

Measure at least two metrics. The first is time to first token, or TTFT. It shows how quickly the model started answering. The second is total latency until the answer is complete. Sometimes TTFT drops noticeably while total time barely changes if the answer is long. Sometimes it is the other way around.
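Both metrics can be taken from a single pass over a streaming response. The sketch below works with any iterable of text chunks. The `fake_stream` generator only simulates a model with `time.sleep` and would be replaced by a real streaming client.

```python
import time
from typing import Iterable, Optional


def measure_stream(chunks: Iterable[str]) -> tuple[Optional[float], float, str]:
    """Return (TTFT, total latency, full text) for one streamed answer."""
    start = time.perf_counter()
    ttft = None
    parts = []
    for chunk in chunks:
        if ttft is None:
            ttft = time.perf_counter() - start  # first chunk has arrived
        parts.append(chunk)
    total = time.perf_counter() - start
    return ttft, total, "".join(parts)


def fake_stream():
    time.sleep(0.02)  # simulated prefill delay before the first token
    yield "Hel"
    time.sleep(0.01)  # simulated decode step
    yield "lo"


ttft, total, text = measure_stream(fake_stream())
print(f"TTFT={ttft:.3f}s total={total:.3f}s text={text!r}")
```

The stream must be lazy, so that the request actually starts inside the loop. Otherwise the prefill time is spent before the timer starts and TTFT looks better than it is.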

The average almost always paints too nice a picture, so also look at the distribution and at how often the cache is actually used:

- p50 shows a typical request;

- p95 marks the latency that the slowest 5% of requests exceed, which is what users feel during bad moments;

- p99 catches the rare but most unpleasant tails;

- cache hit rate shows how often the setup actually helps.
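These numbers are easy to compute from raw measurements. The sketch below uses the nearest-rank definition of a percentile, which is one of several common definitions, and the latency values are synthetic.

```python
import math


def percentile(values: list[float], p: int) -> float:
    """Nearest-rank percentile: the smallest value with at least p% of data at or below it."""
    if not values:
        raise ValueError("no measurements")
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def hit_rate(hits: int, misses: int) -> float:
    total = hits + misses
    return hits / total if total else 0.0


# Synthetic TTFT samples in milliseconds: 1, 2, ..., 100.
ttft_ms = [float(ms) for ms in range(1, 101)]

print(percentile(ttft_ms, 50))  # 50.0
print(percentile(ttft_ms, 95))  # 95.0
print(percentile(ttft_ms, 99))  # 99.0
print(hit_rate(hits=35, misses=65))  # 0.35
```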

Check the hit rate not on synthetic tests, but on live traffic. In tests you may get 90% hits, but in production only 35%, because users change the language, channel, or format of the first message. Then the speedup exists on paper, but you barely see it under real load.

It also helps to count the gain in more than milliseconds. If the shared prefix contains 8,000–10,000 tokens, each cache hit removes repeated prefill for that section. That is GPU time saved. It is worth measuring in GPU-seconds, or at least in the total number of prefill tokens saved per day.
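A back-of-the-envelope version of that calculation is sketched below. The daily hit count and the prefill throughput are hypothetical inputs. The throughput in particular has to be measured on your own hardware and model before the result means anything.

```python
def prefill_tokens_saved(hits_per_day: int, prefix_tokens: int) -> int:
    """Prefill tokens that cache hits removed over one day."""
    return hits_per_day * prefix_tokens


def gpu_seconds_saved(tokens_saved: int, prefill_tokens_per_second: float) -> float:
    """Convert saved tokens into GPU time using a measured prefill throughput."""
    return tokens_saved / prefill_tokens_per_second


# Hypothetical inputs: 20,000 hits per day on an 8,000-token prefix,
# with an assumed prefill throughput of 10,000 tokens per second.
tokens = prefill_tokens_saved(hits_per_day=20_000, prefix_tokens=8_000)
seconds = gpu_seconds_saved(tokens, prefill_tokens_per_second=10_000)

print(tokens)   # 160000000
print(seconds)  # 16000.0
```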

A good rule of thumb is simple: a warm request should clearly beat a cold one on TTFT, p95 should not balloon, and the hit rate should stay high enough to justify the complexity of the setup.

A quick check before launch

Before turning on KV-cache reuse in production, look at real requests, not a nice setup in your head. The cache pays off only where a noticeable share of conversations share the same beginning. If the shared prefix appears rarely, the benefit is often eaten up by complexity and extra checks.

You can run a quick test in one working day.

First, take logs from a few days and compare the first messages in conversations. Look for identical system instructions, opening replies, and template blocks. If there are few matches, KV-cache reuse will not bring much value yet.
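For a first pass over the logs, a fingerprint of the opening messages is usually enough. The sketch below hashes the raw text of the first few messages, which is fine for a rough survey but is not the cache key itself. The message format with `role` and `content` fields is an assumption about how the logs are stored.

```python
import hashlib
import json
from collections import Counter


def opening_fingerprint(messages: list[dict], n_opening: int = 2) -> str:
    """Hash the first n messages of a conversation."""
    opening = [(m["role"], m["content"]) for m in messages[:n_opening]]
    payload = json.dumps(opening, ensure_ascii=False).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def top_opening_share(conversations: list[list[dict]], n_opening: int = 2) -> float:
    """Share of conversations that start with the most common opening."""
    counts = Counter(opening_fingerprint(c, n_opening) for c in conversations)
    if not counts:
        return 0.0
    return counts.most_common(1)[0][1] / sum(counts.values())


system = {"role": "system", "content": "Be brief and polite."}
greeting = {"role": "assistant", "content": "Hello! How can I help?"}
logs = [
    [system, greeting, {"role": "user", "content": "Where is my refund?"}],
    [system, greeting, {"role": "user", "content": "Change my phone number."}],
    [system, greeting, {"role": "user", "content": "Delivery status?"}],
    [system, {"role": "assistant", "content": "Hi there!"}],
]

print(top_opening_share(logs))  # 0.75
```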

Then check that all services send history in the same way. Web, mobile app, bot, and internal service should use the same message order, the same roles, and the same way of inserting service text. Even small serialization differences can easily break cache hits.

Next, set simple cache rules: you need a TTL, a template version, and manual reset. TTL protects you from stale prefixes, the version separates the old template from the new one, and manual reset is needed when the team urgently changes the system prompt or the conversation logic.

Before rolling out to all traffic, turn on logs and watch not only the hit rate, but also the reasons for misses. It could be a changed prefix, an expired TTL, a different template version, or a different history format from a specific service. Without this data, you will be left guessing why latency is not dropping.

Also run scenarios with personal data. If a name, contract number, or phone number enters the shared prefix, that cache quickly becomes a problem instead of an optimization. It is usually safer to end the cached prefix before any user data appears, or to mask that data before inference.

One practical example: a support chat often begins with the same rules, disclaimer, and first two messages. But if the mobile app adds a hidden service message and the web chat does not, the hit rate will drop sharply. From the outside it looks like “the cache does not work,” although the reason is much simpler: the history format is different.

If after this check you see a stable prefix, clear reasons for misses, and clean PII scenarios, you can launch a pilot. If not, first align the message format and reset policy. It is less exciting than the optimization itself, but that is where time is most often lost.

What to do next

Do not try to enable KV-cache reuse in every scenario at once. Pick one flow where many requests start the same way: a system instruction, greeting, rules, and the first form steps. On that flow, collect baseline metrics on the cold path: time to first token, total response time, the share of long prefixes, and the cost of a series of requests.

Then run an A/B test. In group A, keep the normal processing without a warmed cache. In group B, use the warm path with the same shared prefix. Look not only at the average time, but also at p95, the number of cache misses, and the error rate. The average often improves quickly, while the long tail of delays barely moves.
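For the split itself, a deterministic assignment by conversation id keeps every turn of one conversation in the same group. The sketch below is one simple way to do it; the `ab_group` function and the percentage parameter are illustrative, not taken from any specific experimentation tool.

```python
import hashlib


def ab_group(conversation_id: str, warm_percent: int = 50) -> str:
    """Return "B" (warm path) for roughly warm_percent% of conversations, else "A"."""
    digest = hashlib.sha256(conversation_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket in 0..99
    return "B" if bucket < warm_percent else "A"


groups = [ab_group(f"conv-{i}") for i in range(1000)]
print(groups.count("A"), groups.count("B"))
```

Assigning by request instead of by conversation would mix cold and warm turns inside one chat and blur the comparison.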

After that, compare several models on the same prefix. Otherwise you will not know whether the gain came from the cache itself or from a different model. For a fair comparison, keep the prefix text, generation parameters, expected answer length, and the way latency and cost are calculated the same.

If you already work through a single OpenAI-compatible endpoint, these tests are easier to run in one layer. In AI Router, you can change the model or provider without rewriting the SDK, code, or prompts, so the comparison is cleaner and faster.

For environments with strict data storage requirements, do not wait until release to check compliance. Immediately clarify where the cache lives, who sees the logs, whether the shared prefix is included there, and how PII is masked. For a bank, telecom company, or public sector system, this is not a formality.

A good first step is simple: choose one flow, collect numbers for a few days, run the cold and warm paths, and then compare 2–3 models on the same prefix. After that, it is already clear whether the scheme should be expanded to other conversations or whether the gain is better found elsewhere.

Frequently asked questions

What is KV-cache in simple terms?

KV-cache stores not the answer, but the model’s already computed internal states for the start of a conversation. If the next request begins with the same tokens, the model does not recalculate that part and can move to the new part faster.

When does KV-cache reuse really speed up a chat?

It works best when many requests have the same long start: a system prompt, answer rules, a greeting, and the first service messages. The longer the shared prefix and the more often it repeats, the bigger the savings in latency and compute.

Why can a short new question still feel slow?

Because the model usually reads the entire history before producing a new answer. Even a short question at the end does not remove the long prefix if the model still needs it for rules, tone, and the meaning of the conversation.

What should go into the shared prefix?

Put only what repeats across many conversations without changes. That is usually the system instruction, answer format, general rules, and template opening messages.

What should not go into the shared prefix?

Do not put the customer’s name, contract number, phone number, address, order history, or any other personal data there. A simple rule works well: if you would not be comfortable showing that text to another user, keep it outside the shared prefix.

Can the same cache be used for different models?

No. KV-cache is tied to a specific model and usually to its version as well, because the internal states differ from one model to another.

Why can an identical-looking prefix sometimes miss the cache?

Usually small differences in the first tokens are to blame. An extra space, a different date, a different role order, or a session id or trace id at the start of the request can break the match.

How should a cache entry be identified?

Compute the hash after tokenization, not from the raw text. In the cache entry, keep the model_id, tokenizer version, template version, prefix hash, and context limit.

How do you measure the benefit instead of guessing?

Compare the cold and warm paths on the same model and with the same parameters. Look at TTFT, total latency, p95, and hit rate, not just the average number.

Where should you start a KV-cache pilot in production?

Start with one flow where many requests share the same opening, such as a support chat. Fix the template, set a TTL, turn on miss-reason logs, and make sure personal data does not enter the shared prefix.