Dense, sparse, and hybrid retrieval: how to compare them fairly

Dense, sparse, and hybrid retrieval can be compared fairly if you align the corpus, queries, metrics, and chunking rules for different document types in advance.

Why the comparison breaks before the metrics

The problem is usually not the model or the index. It breaks earlier, when people put different kinds of text into one test and expect a single winner. On a mixed corpus, that almost never works. Sparse is better at matching exact words and rare terms, dense is more likely to find semantic matches, and hybrid often wins simply because it has more room to be tuned.

Short and long documents also give different signals. A three-line note often depends on one or two exact words. A 40-page policy, on the other hand, spreads the answer across several sections, and then not only the retrieval method matters, but also how you split the text into chunks. If you do not align that first, the debate quickly shifts from retrieval to preprocessing.

There is also a more practical reason: teams call the same thing by different names. In one department they write "contract number", in another "contract ID", and in a third they use an internal request code. Sparse may struggle because of vocabulary, dense may confuse similar entities, and hybrid may look better only because it covers both kinds of misses. That does not make it the best choice for every corpus.

Most tests get thrown off by four things: different chunking for different methods, only one type of query, different text cleaning before indexing, and fuzzy relevance judgment. In practice, you see this on any mixed set: knowledge base articles, internal emails, policies, short operator notes. One method works great on notes and much worse on long documents. Another shows the opposite pattern.

That is why dense, sparse, and hybrid retrieval should not be compared "on average". First set common rules: one corpus, one text preparation flow, comparable compute conditions, and one shared way to decide what counts as a relevant answer.

What to align before the first run

The comparison breaks before the metrics if the inputs are different. For a fair test, first lock down the corpus: one export date, one document list, one field schema. If the database has 120,000 documents today and 127,000 tomorrow, the difference in results may come from new text, not the method.

Next, remove obvious duplicates and near-duplicates. This is a common trap in mixed corpora, where the same instruction exists in a PDF, a wiki, and the support team’s knowledge base. Then one method seems to "find more", when in reality it just matched several copies of the same answer. It is better to keep one canonical document or at least label duplicate families in advance.

Everyone needs the same text cleaning. If sparse indexes raw HTML with menus, footers, and service blocks while dense receives cleaned text, you are comparing not search methods but two versions of the corpus. Decide on one pipeline up front: how you remove noise, what you do with tables, how you clean OCR, and whether you keep headings and captions.

Do not mix experiments with chunking either. Short and long documents behave differently, and chunk size changes the outcome a lot. If one run uses whole documents and another uses 300-token chunks with overlap, the difference is no longer about the retriever. First compare the methods on one splitting scheme. Then compare the schemes themselves separately.

Before the first run, it helps to freeze a few things:

- the corpus export date

- the list of search fields

- filters for language, document type, and access rights

- text cleaning rules

- the chunking scheme for this run

Pay special attention to fields and filters. If sparse searches title and body while dense encodes only body, the test is already skewed. The same goes for filters by language, department, or document status. They must work the same way in every run.

On a mixed corpus, this shows up immediately. Suppose legal writes short notes, support stores long articles, and the product team loves tables and lists. Without common preparation, dense may win simply because it handled the noisy format better, not because the search itself is stronger. Align the input first, then look at the numbers.

How to build queries without bias

If one engineer writes all the test queries, you are not testing search, you are testing that person’s habit of phrasing thoughts. That kind of set is almost always cleaner, shorter, and more logical than real user questions.

It is better to start with live sources: search logs, support tickets, internal chats, emails to support, query history in the knowledge base. That quickly shows the gap between how people "should" ask and how they actually ask. Before labeling, remove duplicates and mask PII, but do not smooth out the language. Typos, broken phrases, and odd abbreviations are useful here.

Then split the queries into simple slices. It is enough to take short 2-4 word phrases, long questions with multiple conditions, conversational queries like "can you tell me where...", terms and abbreviations, and noisy forms with typos and mixed Russian and English.

On a mixed corpus, that matters especially. One team writes "reconciliation act", another says "statement of reconciliation", and a third searches by template number. Methods behave differently on those queries, and a fair test needs to catch that.

Even when you are collecting rare cases, do not write every query with one hand. Bring in several roles: support, analyst, knowledge base editor, engineer. Usually by the tenth example you can already see how their language differs. That is good. It gets you closer to real traffic.

It also helps to label query intent. For practical purposes, five labels are enough: fact, instruction, comparison, exact phrase, and search by identifier or term. That kind of labeling makes result analysis much easier. If dense is worse at exact phrases and hybrid wins on long conversational questions, you will see it right away instead of losing it in the average metric.

How to prepare short and long documents

Using the same chunking for the whole corpus almost always hurts the comparison. If you split every document into 500-token chunks, short cards lose their shape, and long texts break apart so the answer ends up between two chunks. After that, you are comparing not methods, but different versions of meaning.

Short documents are better left alone. A product card, a short FAQ, a pricing description, or a one-page internal rule usually works better as a whole. If you split that text, search starts returning fragments, even though the original document was already a convenient retrieval unit.

Long documents need a different approach. A policy, contract, manual, or report is better split by headings, subheadings, and complete semantic blocks. A fixed size can be used as an upper limit, but not as the only rule. Otherwise, one chunk will contain two topics, and another will contain a table reference without the table itself.

In a mixed corpus, it helps to store metadata separately from the text. At minimum: document type and source team. This is not only for filters. It also lets you see that one method is better at FAQ searches and another at policies, without confusing algorithm differences with differences in writing style.

A basic setup looks like this:

- keep short cards intact

- split long texts by document structure

- add a small overlap only where the idea often crosses a block boundary

- store document type, author, and section in separate fields

- check the chunk for answer completeness, not just length

That last point is often skipped. Take 20-30 queries with a clear answer in the corpus and manually check whether the retrieved chunk contains the full answer. Not a hint, not half a paragraph, but a fragment you can safely pass to RAG.

A simple example: a document has a section called "Operation Limits", and below it there is a table with exceptions. If the chunk ends before the table, sparse will find the right heading, dense will find a similar paragraph, and hybrid will return both fragments. But none of those will give the full answer. The problem is not the retriever, but how the document was split.

How to run the test

Start with two simple baselines. For sparse, BM25 without manual tuning is usually enough. For dense, take one embedding checkpoint and one chunking method, without a reranker at the start. If you build a complex pipeline right away, you will not know what actually drove the improvement.

Then add hybrid with the simplest fusion. In most cases, reciprocal rank fusion or a clear linear combination of scores is enough. Do not try to tune a dozen weights on day one. For a fair test, a rough but transparent setup is better than a clever one you cannot reproduce a week later.

The same query set must go through all three variants. You cannot change wording, filters, chunking rules, or corpus composition between runs. Even a small difference breaks the comparison, especially when the corpus contains short FAQs, long policies, and documents from different teams.

A practical order is:

- freeze the corpus, chunks, stop words, and filters

- build the sparse index and dense index on the same data

- run one query pool without manual cleanup of results after search

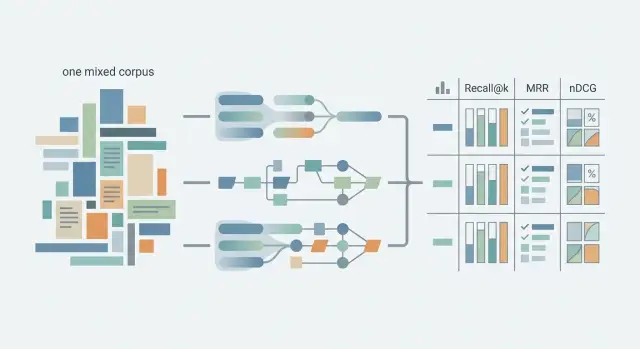

- calculate Recall@k, MRR, and nDCG on the same k values, for example 5 and 10

- measure latency, index size, and update cost separately

It is better to read the metrics together. Recall@k shows whether the system found the right fragment at all. MRR is useful when you care about how high it ranked. nDCG shows differences better when relevance is not binary and there are levels like "exact fit", "partial fit", and "noise".

Do not mix offline quality and cost into one number. It is easier to make a simple table: quality, average latency p50 and p95, index size, and reindexing time and cost. Then you can see, for example, that dense improved results on long documents but updates three times slower, while hybrid handles the mixed corpus more evenly and barely loses on speed.

If you want a result you can trust, leave tuning for the second round. The first run is not for a record score, but for a clean baseline. Only after that does it make sense to tune fusion weights, change chunk size, or add a reranker.

An example on a mixed corpus

A good test becomes clear on a simple but uneven document set. Imagine the database contains short 2-3 line FAQs, detailed two-page instructions, and support email templates. They all describe the same return process, but different teams wrote the text, so the wording varies.

Use a conversational query: "how do I return a product without a receipt". It is awkward for a clean comparison, and that is exactly why it is useful. The user writes as they speak, not the way the knowledge base section was named.

What the results look like

Sparse usually latches onto exact words. If the FAQ includes the line "returns without a receipt are possible with purchase confirmation", that document often rises near the top. The signal is strong because the match is almost literal.

Dense behaves differently. It may find a long instruction that does not mention "without a receipt" but does include a paragraph like "if the customer did not keep the receipt, the agent checks payment by card or order number". Semantically, that is the right answer, even though the wording is different.

On the same corpus, hybrid often gives the best top results. It can keep the FAQ with the exact phrase next to the long instruction that describes the process. For RAG, that is usually better than one short answer without detail or one long document without an obvious heading match.

A simplified top list might look like this:

- sparse: FAQ "returns without a receipt", email template with "receipt lost", then the instruction

- dense: return instructions, FAQ about purchase confirmation, then an operator note

- hybrid: FAQ "returns without a receipt", instructions with purchase-check steps, then the email template

Where fusion breaks

The problem is often not the hybrid idea itself, but how ranks are merged. If fusion pushes too hard on semantic signals, the top results will be texts about exchanges, warranties, or order cancellations that are merely similar. If it loves exact matches too much, a long instruction with the right scenario may fall below a short FAQ, and the RAG model will answer too generally.

On this kind of corpus, it helps to look at not just the first document, but the top 3. If the top three include both the exact FAQ and the detailed instruction, the system is behaving reasonably. If you see three almost identical email templates, the search technically found something, but it is not very useful.

That is where a fair test shows the real difference. Sparse catches the phrase "returns without a receipt", dense understands the conversational question, and hybrid only wins when fusion does not push exact matches below overly broad semantic hits.

Where the methods diverge

One average score across the whole corpus often hides the most important part. A system can score well overall and still fail on the queries people ask most often.

Sparse usually does better at exact matches. If a query contains an error code, part number, form name, field name, abbreviation, or rare term, this kind of search often hits the target faster than dense. For a corpus assembled by different teams, that is normal: a field name like client_id, "Form 12", or an internal process code is something sparse finds confidently, while dense can sometimes drift toward a similar but wrong document.

Dense holds up better when people write at length and in their own words. Paraphrases, conversational questions, and problem descriptions without an exact term are its strength. If an employee asks "how do I pass customer consent into the new form", dense is more likely to find the right document, even if the text uses a different phrase such as "transfer confirmation to the profile".

Hybrid is useful when the same process has been described in different words by different authors. In these corpora, hybrid search in RAG often gives the most even result: sparse catches the exact words, dense brings in the meaning. This is especially noticeable in knowledge bases that were built over years and never cleaned up into a single vocabulary.

But hybrid can miss too. If one signal becomes too loud, it pulls the results in the wrong direction. A typical case: BM25 pushes a document with the right word too high, even though it does not answer the question. The opposite also happens: dense ranks a text about "the same thing" above the document that contains the exact reference and should be higher.

That is why you should not look only at overall nDCG or Recall. Split the queries into at least a few slices: exact identifiers and codes, short 2-4 word queries, long free-form questions, paraphrases of the same intent, and cases where one process has several names.

After that split, the picture is more honest. Sometimes there is no winner "on average" at all: sparse is needed for precision, dense for meaning, and hybrid only makes sense if you can tune the weight of each signal by query type.

Common evaluation mistakes

Most mistakes appear not in the formulas, but in test preparation. If one corpus contains three-line FAQs, long policies, and documents from different teams, metrics can easily produce a nice-looking but unfair comparison.

The first mistake is comparing methods on different chunking. If sparse works on whole pages while dense sees 300-500 token pieces, you are not comparing search methods, but different representations of the corpus. Sometimes people then conclude that one approach is better, even though it simply got a more convenient text format.

The second mistake is tuning fusion on the test set. It happens all the time: teams take hybrid, adjust dense and BM25 weights until the metric goes up, and then call that the final result. Tuning needs a separate dev set. The test set should stay untouched until the end.

The third mistake is quieter, but it also ruins conclusions: the corpus is cleaned only for one index. For example, duplicated headings, boilerplate phrases, and stop words are removed for sparse, while dense gets a different text. Or the fields are rewritten and sections are merged only for embeddings. A fair test starts with one frozen version of the corpus, from which all indexes are built.

Another source of noise is duplicates, templates, and old document versions. They often boost scores almost by accident. The query lands not in the right document, but in its copy, draft, or outdated revision. On paper the metric looks fine, but in a real RAG system the user gets the wrong answer.

An average score by itself also says little. Two systems may have similar Recall@10 but fail in different ways. One consistently misses long documents. Another gets confused by short internal notes with few terms.

After measurement, it is more useful to manually review at least 20-30 misses and note the reason. The same scenarios usually repeat: the relevant fragment was not indexed, the chunk was too large or too small, the query and document use different vocabulary, an old version or duplicate entered the results, or hybrid merged ranks worse than each method alone. That kind of analysis usually helps more than one more decimal place in a table.

Check before the final measurement

Final numbers are often ruined not by the model, but by small differences in conditions. One index was built yesterday, another today. In one run the queries were cleaned, in another they were left as is. After that, the comparison can no longer be called fair.

If you need a test you can trust, check not only the metrics but also the discipline of the measurement. Especially on a mixed corpus, where short cards, long policies, and texts from different teams sit side by side.

Before you start, go through a short checklist:

- use the same corpus from the same export

- run one query set without changing wording between methods

- keep one relevance labeling scheme

- fix the same top-k

- look at the report by slices, not just one overall number

The last point is often what settles the argument. The average metric may look stable, but inside it, dense handles long explanatory texts better, sparse pulls out exact matches in short documents, and hybrid smooths the difference only for part of the queries.

Keep 20-30 manual error reviews next to the metrics table. That is enough to spot a repeating failure: a bad chunk, noisy headings, language mix-ups, duplicates, or a query that is too broad. Without such examples, it is easy to believe a pretty number and miss the real problem.

The final measurement should be boring and identical for every method. That is what a proper corpus search evaluation looks like: less freedom during the test, fewer arguments after it.

What to do after the measurement

After the measurement, do not rush to declare a winner based on one overall number. Average nDCG or Recall@k is useful, but the product does not live on averages. If 70% of your traffic consists of short queries with codes, plan names, or part numbers, look at that slice first.

Sometimes dense wins on the overall metric, but it makes more mistakes on the queries that cause users the most pain. Then it is not the product winner. It is more logical to choose the method that more reliably covers the priority scenarios, even if its average score is slightly lower.

Often it is wiser to choose not one method, but a main setup and a fallback. For example, dense may understand free-form questions better, while sparse catches abbreviations, form numbers, and rare terms more accurately. In that case, you do not have to run hybrid everywhere. Sometimes it is easier to keep a fallback and send code-based and exact-term queries to a second retriever.

After the decision, write it down. A short report should answer four questions: which method works best on the priority slices, where it falls short and which fallback covers that gap, what settings you used, and how much requests, latency, and reindexing cost.

That kind of template quickly removes opinion-based arguments. The team starts looking at the same numbers and the same examples.

Do not consider the test complete if you later change chunking or vocabulary. Even a good retriever changes noticeably after new chunk boundaries, different lemmatization, or stop-word cleanup. On a mixed corpus, you will see it right away: long policies start splitting better, while short notes suddenly lose exact matches. After such changes, you need another run on the same query set and with the same report template.

There is another trap: the team changes retrieval and the LLM layer at the same time. Then it is no longer clear what caused the gain - search, reranking, or the new answer model. If you are testing different models in parallel, it helps to keep the access infrastructure separate from the retrieval experiment. For example, AI Router at airouter.kz gives you one OpenAI-compatible endpoint for different models, so you can compare retrieval separately without rewriting SDKs, code, or prompts.

A good final result sounds boring, but that is exactly what you want: which query types each method handles well, where it makes mistakes, how much it costs to support, and what you chose for production. If your team can answer those questions, the comparison was worth doing.