Hedged Requests to Two Models: When p95 Drops

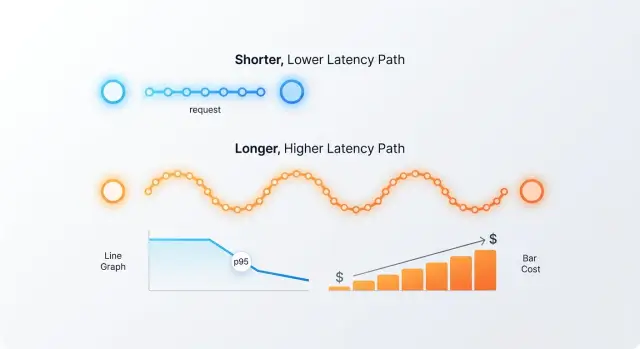

Hedged requests to two models can remove rare slow responses, but sometimes they only double costs. Let’s break down thresholds, metrics, and mistakes.

Where the p95 problem shows up

The p95 problem appears at the point where the metrics look acceptable, yet users still complain about "lag." The average response time may be 2.4 seconds, and on the dashboard that looks normal. But if some requests take 8-12 seconds, people remember those pauses.

The average hides the latency tail. If 90 requests finish in 2 seconds and 10 take 10 seconds, the average is 2.8 seconds, which does not show how unpleasant those rare slowdowns are. p50 is reassuring too: the median is still 2 seconds. But p95 is 10 seconds, which immediately shows that a noticeable share of traffic already feels slow.
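The numbers in that example are easy to check. The sketch below uses a simple nearest-rank percentile; the `percentile` helper is written here for illustration and is not taken from any particular monitoring tool, which may interpolate slightly differently.

```python
import math
import statistics

def percentile(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(math.ceil(pct * len(ordered) / 100), 1)  # 1-based rank
    return ordered[rank - 1]

# 90 requests at 2 s and 10 requests at 10 s, as in the example above
latencies = [2.0] * 90 + [10.0] * 10

mean = statistics.mean(latencies)
p50 = percentile(latencies, 50)
p95 = percentile(latencies, 95)
print(f"mean={mean:.1f}s p50={p50:.1f}s p95={p95:.1f}s")
# mean=2.8s p50=2.0s p95=10.0s
```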

In LLM applications this is especially visible. One response can get stuck because of queueing at the provider, a long context, an extra content check, or a network dip. If a user request goes through several steps in a row, their delays add up, and one slow step dominates the whole operation. One slow call hurts not only a single answer, but the p95 of the entire service.

Users do not think in p50 and p95. They just see that the interface sometimes takes too long to think. That alone is enough to erode trust in the system. In a chat, it is annoying. In a bank, telecom, or retail operator console, it immediately hurts the pace of work.

The tail is most noticeable in scenarios where the answer is needed right away: in chats and copilot interfaces for employees, in agent pipelines with several LLM calls in a row, in online classification and data extraction, in voice scenarios, and in any other user-facing feature where a person is waiting on the screen.

If the system makes one call and only rarely pauses, p95 may be unpleasant but not critical. If one user request triggers several model calls, the tail grows fast. Then even good averages no longer save you.
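A rough way to see how fast the tail grows: if each call independently has a 5% chance of landing in the slow tail, the chance that a user request with several calls hits at least one slow call is 1 - 0.95^n. Independence is an assumption made for this sketch; in practice stalls are often correlated, so treat the numbers as an estimate.

```python
def share_hitting_tail(calls_per_request, tail_share=0.05):
    """Probability that at least one of n independent calls lands in the slow tail."""
    return 1 - (1 - tail_share) ** calls_per_request

for n in (1, 3, 5):
    print(n, f"{share_hitting_tail(n):.1%}")
# 1 5.0%
# 3 14.3%
# 5 22.6%
```

With five calls in a row, more than a fifth of user requests would see at least one tail-latency call, even though each call on its own looks fine at p95.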

That is when hedged requests appear. Not because the average latency is bad, but because rare slow answers start breaking the entire user experience.

What a hedged request is

A hedged request is a setup where the service sometimes sends the same prompt to two models so it does not have to wait out a slow response in the latency tail. The word "sometimes" is the key here. You do not duplicate every request; you launch the second call based on a rule: for example, if the first model has not started responding within 400 ms, or if the request falls into a high-latency-risk class.

There are two basic variants. The first is simultaneous start, where both models get the request immediately. This approach cuts tail latency more aggressively, but it almost always hits the bill hard because you pay for two runs.

The second variant is usually more practical: the service sends the request to the main model first, and starts the second one after a short delay. This delayed start is useful when you want to reduce p95 but are not ready to double inference costs on every call.

The point of the setup is not to return just any first answer. It is to return the first usable answer. That is an important difference. If one model returns empty text, broken JSON, or a response in the wrong format, the service should not treat that as the winner. It waits for the other model and returns that answer only if it passes simple quality checks.

The check is usually simple: the response arrived without an error, the format is valid, the text is not empty, and it is not cut off. After that, the gateway sends the result that passed validation first. In a normal production setup, the winner is not the one that returns anything first, but the one that returns an answer you can safely pass downstream.
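A minimal sketch of such a check might look like this. The response shape (a dict with `error`, `text`, and `finish_reason`) is a hypothetical one chosen for the example; the `"length"` value mirrors how OpenAI-style APIs mark an answer that was cut off by the token limit, so adjust it to whatever your gateway actually returns.

```python
import json

def is_usable(response, expect_json=False):
    """Return True if a model response looks safe to pass downstream.

    `response` is a hypothetical dict: {"error": ..., "text": ..., "finish_reason": ...}.
    """
    if response.get("error"):
        return False  # the call failed
    text = (response.get("text") or "").strip()
    if not text:
        return False  # empty answer
    if response.get("finish_reason") == "length":
        return False  # answer was cut off by the token limit
    if expect_json:
        try:
            json.loads(text)
        except json.JSONDecodeError:
            return False  # broken JSON
    return True
```

Keeping the check this cheap matters: it runs on the hot path, before the winner is chosen.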

You need to think about canceling the second call right away. If the first model has already won and the second one keeps generating tokens, you are just burning budget. At the same time, unnecessary load on the provider increases, the connection lives longer, and your own metrics get worse.

That is why a working implementation does three things: it records the winner, immediately cancels the losing call, and logs how often hedging actually triggered. Without that, the scheme only looks smart on a diagram. On the monthly bill, it usually looks worse.
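Those three duties can be sketched with `asyncio`. This is an illustration under assumptions, not a production gateway: `hedged_call`, `fake_model`, and `demo` are hypothetical names, and the model calls are simulated with `asyncio.sleep`. Whether `task.cancel()` actually stops generation and billing on the provider side depends on your HTTP client and on the provider.

```python
import asyncio

async def hedged_call(primary, backup, prompt, hedge_delay, is_usable):
    """Delayed hedge: start `backup` only if `primary` has no usable answer after `hedge_delay` s.

    Returns (winner_name, answer, hedged) so the caller can log who won and
    whether hedging triggered. Any branch still running is cancelled on exit.
    """
    pending = {asyncio.create_task(primary(prompt)): "primary"}
    hedged = False
    try:
        while pending:
            # Before the backup starts, wait at most hedge_delay for the primary.
            timeout = None if hedged else hedge_delay
            done, _ = await asyncio.wait(
                set(pending), timeout=timeout, return_when=asyncio.FIRST_COMPLETED
            )
            for task in done:
                name = pending.pop(task)
                failed = task.cancelled() or task.exception() is not None
                if not failed and is_usable(task.result()):
                    return name, task.result(), hedged
            if not hedged:
                # Timer fired, or the primary finished with an unusable answer.
                pending[asyncio.create_task(backup(prompt))] = "backup"
                hedged = True
        raise RuntimeError("neither model returned a usable answer")
    finally:
        for task in pending:
            task.cancel()  # stop the losing branch


async def fake_model(delay, text):
    """Stand-in for a real model call: sleeps, then returns a response dict."""
    await asyncio.sleep(delay)
    return {"text": text}


async def demo(primary_delay):
    return await hedged_call(
        primary=lambda prompt: fake_model(primary_delay, "from primary"),
        backup=lambda prompt: fake_model(0.05, "from backup"),
        prompt="hello",
        hedge_delay=0.1,
        is_usable=lambda response: bool(response["text"].strip()),
    )

print(asyncio.run(demo(primary_delay=0.5)))   # primary stalls, backup wins
print(asyncio.run(demo(primary_delay=0.01)))  # primary answers before the timer
```

The returned `hedged` flag is what feeds the "how often did hedging trigger" metric; the caller would log it together with the winner's name.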

When the second call actually helps

The second call pays off in one clear case: the main model is usually fast, but sometimes falls into a rare long tail. The average may be fine, but p95 is ruined by a small share of stalls. If you launch the duplicate not immediately, but after a noticeable pause, you can cut the tail without doubling the cost across all traffic.

The best effect comes when the backup model is close in quality and response shape. It does not need to be better. It just needs to solve the same task in a similar way: keep the required format, not break tool calling, and not shift tone in a way users would notice. If both models produce almost the same result on the same set of requests, the pair is a good fit.

The setup usually works under four conditions. The main model has occasional stalls, not just consistently high latency. The backup model lives with a different provider or a different stack and does not share the same failure. The duplicate starts on a timer, for example after 300-800 ms, not at the same time. And the cost of an extra call on part of the traffic is lower than the cost of a broken SLA.

Imagine a chat assistant for a contact center. In 93-97% of cases, the main model answers quickly. But some requests hang for several seconds, and the operator sits there without guidance. If you send the same request to a second model after half a second and take the first usable answer, p95 often drops much more noticeably than the average latency. At the same time, spending only increases on the share of requests where the timer actually fired.

If your team already works through a single LLM gateway, this kind of setup is easier to test. In AI Router you can keep two models behind one OpenAI-compatible endpoint and change only the timer logic and winner selection. That is handy for a short test on a small slice of traffic.

If the business pays for latency tails in money, operator waiting time, or lower conversion, the second call on 3-7% of requests is often worth it. If slow responses carry no such cost, the duplicate is usually not needed.

When the scheme just burns budget

Hedging breaks the moment a rare backup plan turns into a habit. If the second call fires on almost every request, you are no longer fixing a long latency tail — you are simply paying for two inference runs instead of one.

That happens when the trigger threshold is too low. Say the first model usually responds in 700-900 ms, and you start the second one after 300 ms. In that case, almost all traffic gets duplicated, while p95 barely improves. The average cost rises quickly and predictably.

It gets even worse when both models slow down at the same time. This is common if they sit with the same provider, in the same region, or hit the same traffic spike. Then parallel LLM calls do not give you a safety net: both queues grow together, and the second request does not save the tail.

Another trap is long answers. For a short classifier, an extra parallel call may cost pennies. For generating an 800-1,500 token response, the picture is different: most of the price sits in the output, not the input. If you hedge long generations without strict control, inference costs rise very quickly.

Often the budget leaks not because of the idea itself, but because cancellation is poor. The team takes the first arriving response, but the losing call keeps generating text for several more seconds. The provider bills tokens even though nobody needs that answer anymore. If that happens thousands of times a day, the losses become very noticeable.

There is also a worse scenario: the second model is faster, but worse. The user or operator sees a weak result and hits retry, or the system retries on its own. Then you pay for two parallel requests and then a third one. Formally, p95 goes down. In cost and quality, it is a failure.

A bad setup usually has the same signs: a large share of traffic goes into hedging, the delays of the two models are strongly correlated, answers are long and output tokens become expensive, the losing call is not canceled immediately, and the faster model more often gives a weak answer and causes retries.

A simple example: a support chat generates detailed answers for bank customers. If the second call starts almost every time and cancellation comes too late, the bill rises by nearly half. If the faster model also misses tone or facts more often, the team gets extra retries too. On the chart p95 looks better, but the business pays more on almost every conversation.

How to choose the launch threshold

You should not make up the threshold. It is chosen from data: how many requests actually get stuck in the tail, how much the second call costs, and on which tasks the delay hurts the product the most.

Average response time almost always lies. For this kind of setup, look at least at p95 and p99. If the average is 2 seconds but p95 jumps to 8, the problem is not overall speed — it is the long tail. That is what the second parallel call should cut.

First, split the traffic

One single number across all requests usually only gets in the way. Short classification, extracting fields from a document, and long answer generation behave differently. They have different prompt lengths, different response sizes, and different error costs.

It is enough to split the traffic into at least four groups: short answers up to 100-150 tokens, medium work requests, long generation and summarization, and tasks with a strict SLA. Then calculate p95 and p99 for each group separately. Often it turns out that hedging is only needed in one category, while the rest is better left alone.
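Per-group percentiles take only a few lines. In the sketch below the group names and latency values are made up for illustration, and `percentile` is the same simple nearest-rank helper, not a specific library function.

```python
import math
from collections import defaultdict

def percentile(values, pct):
    """Nearest-rank percentile."""
    ordered = sorted(values)
    rank = max(math.ceil(pct * len(ordered) / 100), 1)
    return ordered[rank - 1]

def tail_by_group(records):
    """records: iterable of (group_name, latency_ms). Returns {group: (p95, p99)}."""
    groups = defaultdict(list)
    for group, latency_ms in records:
        groups[group].append(latency_ms)
    return {g: (percentile(v, 95), percentile(v, 99)) for g, v in groups.items()}

# Synthetic sample: short answers rarely stall, long generation stalls often.
records = (
    [("short", 300)] * 97 + [("short", 4000)] * 3
    + [("long", 2500)] * 90 + [("long", 9000)] * 10
)
print(tail_by_group(records))
# {'short': (300, 4000), 'long': (9000, 9000)}
```

In this made-up sample only the "long" group has a p95 problem; the "short" group shows its stalls only at p99, so the two groups would call for different decisions.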

Find the break-even point

The launch threshold is the waiting time after which you send the second request. Too early, and costs almost double. Too late, and p95 does not get cut in time.

A practical approach is simple: take latency history for one task group and test several thresholds, for example 800, 1200, and 1400 ms. For each option, look at three numbers: the new p95, the share of requests with a second call, and the increase in inference cost.

If an 800 ms threshold reduces p95 by 300 ms but triggers the second call on 35% of traffic, the setup may be too expensive. If a 1400 ms threshold cuts p95 almost as much, while the duplicate is needed only 8% of the time, that looks like a reasonable tradeoff.
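This comparison can be replayed offline on recorded latencies before touching production. The sketch below assumes paired latency samples for both models, that the backup's answer is always usable, and that the backup's latency does not depend on when it is started; the data is synthetic and `simulate_threshold` is a hypothetical helper.

```python
import math

def percentile(values, pct):
    """Nearest-rank percentile."""
    ordered = sorted(values)
    rank = max(math.ceil(pct * len(ordered) / 100), 1)
    return ordered[rank - 1]

def simulate_threshold(primary_ms, backup_ms, threshold_ms):
    """Replay latency history under a delayed-hedge rule.

    Returns (p95 of the hedged latency, share of requests that started a second call).
    """
    hedged_latency = []
    duplicates = 0
    for p, b in zip(primary_ms, backup_ms):
        if p > threshold_ms:
            duplicates += 1
            # The backup starts at threshold_ms; the user gets whichever finishes first.
            hedged_latency.append(min(p, threshold_ms + b))
        else:
            hedged_latency.append(p)
    return percentile(hedged_latency, 95), duplicates / len(hedged_latency)

# Synthetic history: most requests are fast, 8% stall for 6 seconds.
primary = [600] * 65 + [1000] * 20 + [1300] * 7 + [6000] * 8
backup = [1000] * 100

for threshold in (800, 1200, 1400):
    print(threshold, simulate_threshold(primary, backup, threshold))
# 800 (1800, 0.35)
# 1200 (2200, 0.15)
# 1400 (2400, 0.08)
```

On this made-up data the baseline p95 is 6000 ms. Raising the threshold from 800 to 1400 ms gives up 600 ms of p95 but cuts the duplicate share from 35% to 8%.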

The same threshold for short and long answers usually does not work. For a short task, 1200 ms is already a failure; for long generation, that may still be normal waiting time. If you route models through one gateway, it is easier to set such thresholds by route type instead of using one number for all traffic. In AI Router this is especially easy, because teams only change base_url to api.airouter.kz and keep the same SDK and code.

A good threshold looks boring. It does not produce a record p95 on the chart, but it clearly cuts the tail and does not inflate the bill at the end of the month.

How to choose the model pair

A hedging pair should not only look good on paper. First, run the same set of real prompts through both models and compare not the average latency, but the tail. Often model A is faster by median, but regularly falls apart in p95 on long answers. Model B may be a bit slower in ordinary cases, yet smoother under load. That pair works better than two "fast" models with the same spikes.

You need to compare under the same conditions: the same prompts, the same max tokens, the same response format, the same temperature. If you change parameters on the fly, the comparison loses meaning. This is a common mistake: the team sees a nice chart and then cannot reproduce the result in production.

Price matters too. The second call is not free, even if you only keep the winner’s answer. Usually you pay for input tokens in both requests, and sometimes for part of the output as well if cancellation arrived too late. So look separately at input and output cost. Sometimes the backup model is cheap on short answers, but gets much more expensive on long explanations or JSON with many fields.
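A small cost model makes the effect of late cancellation visible. The token counts and per-1,000-token prices below are invented for the example, and it assumes the provider bills the loser's input plus whatever output it emitted before the cancel; check your provider's actual billing rules.

```python
from dataclasses import dataclass

@dataclass
class Call:
    input_tokens: int
    output_tokens: int       # tokens actually billed, including those emitted before a cancel
    price_in_per_1k: float   # hypothetical price per 1,000 input tokens
    price_out_per_1k: float  # hypothetical price per 1,000 output tokens

    def cost(self):
        return (self.input_tokens * self.price_in_per_1k
                + self.output_tokens * self.price_out_per_1k) / 1000

winner = Call(1200, 900, 0.5, 1.5)
loser_fast_cancel = Call(1200, 0, 0.5, 1.5)    # cancelled before the first token
loser_late_cancel = Call(1200, 400, 0.5, 1.5)  # 400 output tokens generated before cancel

for label, loser in (("fast cancel", loser_fast_cancel), ("late cancel", loser_late_cancel)):
    overhead = loser.cost() / winner.cost()
    print(f"{label}: +{overhead:.0%} on top of the winner's cost")
# fast cancel: +31% on top of the winner's cost
# late cancel: +62% on top of the winner's cost
```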

Another filter is response compatibility. If the first model returns clean JSON by schema, while the second likes to add text before or after the object, you will get not insurance but extra parsing errors. The same goes for tool calling, field names, response length, and refusal style. The closer the contract, the fewer surprises.

Do not place two models with a shared failure point next to each other. If they sit with the same provider, in the same region, or on the same queue, their latencies often rise together. Then the second call does not reduce p95 — it just doubles the bill. It is better to use models from different failure domains.
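One rough way to spot a shared failure domain is to correlate the two models' latency over the same time windows. The sketch below computes a plain Pearson correlation on per-minute p95 values; the series are synthetic, and a high correlation is only a hint that the models stall together, not proof of a shared cause.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Per-minute p95 in seconds (made-up numbers).
main_model = [1.0, 1.1, 4.0, 1.0, 5.0, 1.2]
same_provider_backup = [0.9, 1.0, 3.5, 1.1, 4.8, 1.0]   # spikes in the same minutes
other_provider_backup = [1.3, 1.2, 1.4, 1.3, 1.2, 1.4]  # stays flat

print(f"same provider:  {pearson(main_model, same_provider_backup):.2f}")
print(f"other provider: {pearson(main_model, other_provider_backup):.2f}")
```

A backup whose latency moves almost in lockstep with the main model is unlikely to rescue the tail, whatever its average looks like.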

Check quality on your own tasks, not on generic benchmarks. Take 100-200 live requests: field extraction, ticket classification, short support answers, document summaries. If the backup model makes mistakes in even a few noticeable cases, the latency gain will quickly be eaten by manual checks and retries.

A good pair usually looks like this: the main model gives acceptable cost and quality every day, while the backup keeps a smoother latency tail, returns the same format, and does not depend on the same infrastructure. If even one of these points does not line up, it is better not to hedge at all.

How to roll out the scheme step by step

First, you need an honest baseline measurement. Without it, it is easy to decide the scheme helps even though p95 barely changes and the bill rises noticeably. Collect at least a few days of normal traffic and save not only p95, but also p50, p99, timeout rate, average token count, and cost per 1,000 requests.

Then roll out narrowly. Do not enable the second call for the entire stream right away. Start with 1-5% of traffic and set a simple threshold: the second branch starts only if the first one has not begun responding within, say, 600-800 ms. For short requests, the threshold is usually lower; for long ones, higher.

- Choose one scenario. It is better to start not with the whole product, but with one request type where p95 is already bothering users: support chat, knowledge base search, or short-answer generation.

- Set the winner logic. As soon as one model returns a usable answer or starts streaming, mark it as the winner and immediately cancel the second branch.

- Put in hard limits. Cap the hedging share, the daily spend, and the maximum number of parallel duplicates per key or service.

- Separate the metrics. Store data for the first and second branch separately: who won, who was canceled, how long the response start took, how many tokens were burned, and where the errors were.

- Compare week over week. Watch not only the p95 drop, but also the price of that gain.
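The "hard limits" step above could be sketched as a small guard that the gateway consults before starting a duplicate. `HedgeBudget` is a hypothetical class; it covers the hedging share and the number of parallel duplicates, and a daily spend cap would follow the same pattern. It is not thread-safe as written.

```python
class HedgeBudget:
    """Allows a duplicate only while the hedged share and parallel duplicates stay under caps."""

    def __init__(self, max_share=0.10, max_parallel=5):
        self.max_share = max_share
        self.max_parallel = max_parallel
        self.total = 0      # all requests seen
        self.hedged = 0     # requests that started a duplicate
        self.in_flight = 0  # duplicates currently running

    def record_request(self):
        self.total += 1

    def try_acquire(self):
        """Reserve a slot for one more duplicate; return False if a cap would be exceeded."""
        if self.in_flight >= self.max_parallel:
            return False
        if self.total == 0 or (self.hedged + 1) / self.total > self.max_share:
            return False
        self.hedged += 1
        self.in_flight += 1
        return True

    def release(self):
        """Call when a duplicate finishes or is cancelled."""
        self.in_flight -= 1


budget = HedgeBudget(max_share=0.10, max_parallel=1)
for _ in range(25):
    budget.record_request()
print(budget.try_acquire())  # True: 1 of 25 requests hedged
print(budget.try_acquire())  # False: one duplicate is already in flight
```

If `try_acquire()` returns False, the request simply keeps waiting for the main model, which is the behavior the service had before hedging.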

Canceling the losing call is not a minor detail — it is the center of the whole setup. If the provider or your gateway cannot quickly stop the extra request, the test will almost always give you a nice latency chart and poor economics.

Look at the result soberly. If p95 dropped by 25-30%, while weekly costs rose by 5-8%, the scheme is probably justified. If the gain is less than 10% and costs rose by 20% or more, it is better to keep the second call only for the most important requests.
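Writing the rule down as code before the test starts keeps the later discussion short. The cut-offs below are taken from the ranges in the paragraph above; the function name and the "review manually" middle zone are assumptions added for the sketch.

```python
def verdict(p95_before, p95_after, cost_before, cost_after):
    """Classify a hedging test by relative p95 gain and relative cost growth."""
    p95_gain = (p95_before - p95_after) / p95_before
    cost_growth = (cost_after - cost_before) / cost_before
    if p95_gain >= 0.25 and cost_growth <= 0.08:
        return "keep"
    if p95_gain < 0.10 and cost_growth >= 0.20:
        return "restrict to critical requests"
    return "review manually"

print(verdict(8.0, 5.8, 100, 106))  # keep
print(verdict(8.0, 7.5, 100, 125))  # restrict to critical requests
print(verdict(8.0, 6.8, 100, 112))  # review manually
```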

A simple production scenario

The support team has one simple rule: a customer should not wait more than two seconds. If the bot responds later, the operator already sees an irritated user, and sometimes a follow-up message like "Are you there?"

On a normal day, the main model handles most requests on its own. Simple questions about order status, refunds, or plan changes come back quickly, often in less than a second. The problem is not the average time, but the long tail: some requests fall into p95 and spoil the whole experience.

The team did not duplicate every request right away. They set it up like this: the request first goes to the main model, and if there is still no response after 700 ms, the service starts a second branch. Usually it is another model with more predictable latency, even if it costs a bit more.

Then it is simple. The system takes the first usable answer and sends it straight to the chat. If the second branch is late, it never reaches the user, but its outcome and token usage still go into the logs. That way, the team sees not only who won, but also how much each attempt cost.

It is worth watching a few things: how many requests reached the second model, how often the second branch actually rescued the answer under two seconds, how much inference spending grew, and which answer more often passed the internal quality check.
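The first two of those numbers fall out of a simple request log. The log fields below (`hedged`, `winner`, `latency_s`) are hypothetical; the two-second limit comes from the team's rule in this scenario.

```python
def summarize(log, sla_s=2.0):
    """Compute the hedging share and how often the second branch rescued the SLA."""
    total = len(log)
    hedged = [r for r in log if r["hedged"]]
    rescued = [r for r in hedged if r["winner"] == "backup" and r["latency_s"] <= sla_s]
    return {
        "hedge_share": len(hedged) / total,
        "rescue_share": len(rescued) / len(hedged) if hedged else 0.0,
    }

log = [
    {"hedged": False, "winner": "primary", "latency_s": 0.6},
    {"hedged": False, "winner": "primary", "latency_s": 0.5},
    {"hedged": True, "winner": "backup", "latency_s": 1.4},   # rescued under 2 s
    {"hedged": True, "winner": "primary", "latency_s": 0.9},  # primary won anyway
]
print(summarize(log))
# {'hedge_share': 0.5, 'rescue_share': 0.5}
```

A low rescue share means the timer often fires on requests the main model would have finished in time anyway, which is a hint to raise the threshold.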

After a couple of weeks, the picture usually becomes clear. If the second model triggers rarely and almost never improves p95, the setup is turned off or the threshold is moved higher. If it regularly pulls the latency tail down and the cost increase stays acceptable, the rule stays.

Common mistakes

The most common mistake is to look at the average response time and feel good: it was 2.1 seconds, now it is 1.8. But users are not angry because of the average. What irritates them is the tail: rare 8-12 second responses that break the chat, trigger timeouts, and keep workers busy. If you do not count p95 and p99 separately, hedging can easily look like a success even where it barely changes anything.

The second mistake is setting the launch threshold too low. If the second model starts after 200-300 ms, you are not protecting the tail; you are duplicating almost all traffic. The latency on the chart may look better, but the inference bill grows almost linearly. For a normal chat, that is often a bad trade.

The third mistake is comparing models under different conditions. The team changes the system prompt, the response template, or generation parameters, then decides one model is faster or more stable. That test proves nothing. First freeze one prompt version, the same temperature, the same max tokens, and one request set. Otherwise you are not comparing models, but two different scenarios.

Another miss is forgetting provider limits. Parallel calls create a spike right when the main model is already slowing down. If the provider has a strict rate limit, the second wave of requests will hit 429s, a queue, or channel degradation. Then p95 does not fall — it rises.

And almost everyone underestimates the billing of canceled calls. Many people think this way: if the backup request was canceled, you don’t pay. In practice, some providers charge once the request has already been accepted for processing or the model has managed to emit the first tokens. On the dashboard the experiment may look cheap, but the monthly bill shows a very different number.

Before launch, it helps to check five things:

- count p50, p95, and p99, not just average time;

- watch the share of requests where hedging actually triggered;

- keep the same prompt for both models;

- count finished and canceled calls separately;

- test rate limits under peak, not calm, load.

If p95 drops noticeably after that and the duplicate share stays moderate, the scheme makes sense. If not, the second call just burns budget.

Quick check and next steps

A hedging experiment can easily take the team in the wrong direction if you do not agree on the goal first. Some teams need p95 no higher than 2.5 seconds with price growth of no more than 10%. Others care more about p99 or timeout rate. Set the SLA and spending cap before the first test, or the second call will quickly become an expensive habit.

Next, you need a simple duplicate-start rule. Usually it should not be enabled for every request. Most often it is only needed after a delay of 300-700 ms, for long prompts, or for providers with uneven tails. If the rule cannot be described in two lines, it will be hard to test and even harder to switch off in time.

A short plan looks like this:

- write down the target SLA: p95, p99, timeout rate, and a cost ceiling for test traffic;

- define the duplicate trigger rule: by timer, request type, input size, or a specific model;

- check the mechanics: cancel the losing request, deduplicate the response, use one request ID, and set a budget limit;

- run the setup on 5-10% of traffic for several days and compare p95, p99, average cost, and the share of canceled duplicates.

It helps to decide in advance what action you will take after the test. If p95 dropped by 20% and the cost rose by 4%, you can expand the scheme. If p95 barely changed and expenses doubled, the test has already given you the answer: the duplicate is not needed.

When you need to quickly test model pairs from different providers through one OpenAI-compatible endpoint, this kind of trial is easy to put together in AI Router. Just change base_url to api.airouter.kz and keep the same SDK, code, and prompts. For a short run, that is convenient: you can compare routes faster, see where the tail drops, and avoid spending a week on wiring instead of measurement.

Frequently asked questions

What is p95 in simple terms?

p95 shows the response time that 95% of requests stay within. If p95 is 8 seconds, that means a noticeable share of users is waiting a long time, even if the average looks fine.

For chats, copilot interfaces, and chains of several LLM calls, this metric is often closer to the real experience than the average or p50.

When does hedging actually make sense?

This scheme makes sense where the main model is usually fast but sometimes stalls for several seconds. Then a second call with a delay can cut the tail and remove the worst pauses.

If one user request goes through several LLM steps in a row, the value is even higher: one slow step hurts the whole answer.

Should both models be launched at the same time?

Usually not. Starting both models at the same time cuts the tail more aggressively, but it almost always drives up cost because you pay for two launches.

Most teams use a delayed duplicate: the main model starts first, and the backup only kicks in if the answer hasn’t started in time.

When does the second call just burn money?

The budget goes to waste when the duplicate runs on almost every request instead of only on rare stalls. It gets even worse if both models sit with the same provider and slow down together.

Long answers are another risk. If the losing call is not canceled right away, you pay for extra output tokens for no benefit.

How do you choose the duplicate launch threshold?

Base the launch threshold on your own data, not on guesswork. Look at p95 and p99 by task group and try a few thresholds, such as 800, 1200, and 1400 ms.

A good threshold trims the tail noticeably without sending too much traffic to the second model. For short tasks the threshold is usually lower; for long generation, higher.

How do you choose a backup model?

Look for a model that gives similar quality and the same response format, but lives in a different failure domain. If the backup breaks JSON, tool calling, or the response style, it creates new errors instead of providing insurance.

It’s better to compare the pair on real prompts with the same settings: the same system text, the same max tokens, and the same temperature.

Can I just take the first response that arrives?

The winner is not the fastest response, but the first usable one. Check at least four things: the model did not error out, the format is valid, the text is not empty, and the answer is not cut off.

If the faster model fails more often, users will start hitting retry, and all the savings disappear.

What should I do with the losing request?

Cancel it as soon as you choose the winner. Otherwise the losing branch will keep generating tokens, and the bill will keep growing.

Also log who won, how often hedging triggered, and how many tokens were spent on canceled calls. Without those numbers, you won’t understand the real cost of the scheme.

How do I know if the scheme pays off?

Don’t look only at the new p95. Also compare p99, the timeout rate, the share of requests with a second call, and the cost per 1,000 requests.

If the tail dropped noticeably and the cost increase stayed moderate, the scheme is working. If the gain is small and the duplicate fires too often, it’s better to stop the test.

How can I safely test hedging in production?

Start with one scenario where the tail is already bothering people, and enable hedging on only a small share of traffic. Usually 5-10% for several days is enough if you already set a p95 target and a spending cap.

Check the mechanics before you start: cancel the extra branch, use one request ID, track canceled calls, and set a daily budget limit. That gives you a clean test without unnecessary surprises.