Golden Set for LLMs: How to Keep It Without the Clutter

A golden set for LLMs helps you check quality without chaos. This article covers how to choose cases, when to archive old examples, and how to keep rare complex requests from getting lost.

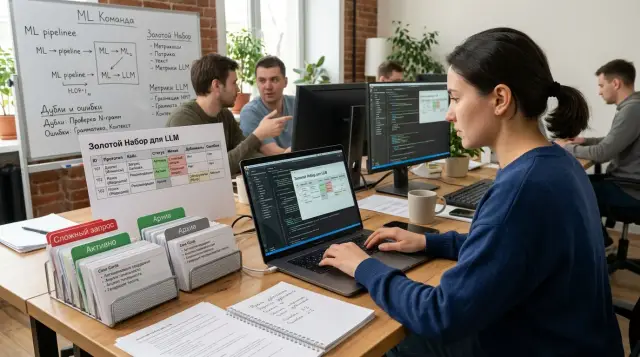

Why the set quickly turns into clutter

At first, a golden set for LLMs looks neat: a few dozen examples, clear answers, a clear goal. Then the product changes. New scenarios appear, new constraints, different answer rules. No one removes the old cases because “let them stay, they might come in handy.” A couple of months later, the set no longer reflects how the system actually works.

There is another reason too. The team starts throwing almost everything into it. Any strange conversation, any failure, any debatable answer seems useful. The instinct makes sense, but the structure is lost fast. Instead of a working quality-evaluation tool, you end up with a folder where important cases sit next to random noise and duplicates.

The worst part is that rare, expensive mistakes get buried under simple requests. If the set has 300 easy questions and 8 cases where the model confuses product limits, returns extra data, or breaks an important flow, attention almost always goes to the easy examples. The metric looks fine, but in production the team still runs into painful failures.

Usually, clutter shows up in four signs:

- old examples test features that no longer exist;

- new cases are added without a reason or source label;

- complex user requests get lost among standard ones;

- no one can quickly explain why a given example is stored at all.

The last point is especially unpleasant. If the team does not remember why a case was added to the set, it is almost impossible to use it correctly. One person thinks it is a safety test, another sees style checking, a third sees a regular FAQ. In the end, the same example gets interpreted in different ways, and release comparisons start to drift.

In practice, this happens very simply. On Monday, support brings in a difficult customer request, the team adds it to the dataset, and two weeks later no one remembers the context. Was it a real failure? A one-off case? A model error, a prompt issue, or a business-logic issue? If there is no answer, the archive of examples grows while the value barely does.

When the set grows without rules, it stops helping you choose what to fix first. It just takes time and creates a false sense of control.

Which cases are worth keeping in the set

A good set is usually smaller than you want and stricter than it seems. You should not put everything into it. If an example never broke anything and never affected money, risk, or support load, it rarely needs to stay in permanent checks.

The best cases are the ones that already caused pain for the team. That might be the wrong limit for a banking product, a missing required disclaimer, personal data leaking into text, or a format failure that breaks an integration. One such example is more useful than ten polished demos.

Keep cases in the set that users run into often and that can become costly. A frequent low-risk request can be checked less often. A frequent request with meaningful risk is better kept close at hand all the time, even if it seems boring.

A working set usually includes four groups of cases: production incidents the team has already fixed in the prompt, routing, or post-processing; normal user scenarios where an error affects money, SLA, or support load; examples where the model confuses the answer format, facts, numbers, dates, or constraints; and edge cases where the user writes at length, is unclear, or mixes several tasks into one message.

It also makes sense to keep cases where the model answers correctly in substance but not in the way the business needs. For example, the meaning is right, but the JSON schema is broken. Or the model sounds confident when it should admit there is not enough data. These failures are barely visible in demos, but they show up quickly in live traffic.

Showcase examples for presentations are better kept out of the evaluation set. A demo explains what is possible. The evaluation set catches errors. If you mix both in one folder, the team will start chasing pretty answers and missing real failures.

If you work with several models through one gateway, this matters even more. The same request may succeed on one model and fail on another because of format, answer length, or how constraints are interpreted. So the set should test not only the “smart answer,” but also how the answer behaves inside your system.

How to pick new cases step by step

New examples should not be added based on mood. If the team drops everything into the set, a month later it contains ten similar cases and none that are truly hard. A better rule is simple: one source of the problem, one clear meaning, one expected result.

It is easiest to collect bad answers from three places: logs, tickets, and manual checks. Logs show live failures, tickets show what users noticed, and manual review catches odd cases that have not yet turned into complaints. If the same mistake appears in support chat, an internal test, and the request log, that is not three cases, but one.

Next, remove duplicates. Look at the meaning of the error, not the wording. If the user asked “show card fees” and “where can I see service charges,” and the model fails in the same way, keep one example. Otherwise, the set grows and the quality evaluation gets distorted.
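Merging by meaning can be sketched in a few lines. This is a minimal illustration, assuming a reviewer has already labeled every candidate with two hypothetical fields, `intent` and `failure`; neither field name comes from a specific tool.

```python
from collections import defaultdict

def merge_by_meaning(candidates):
    """Group candidates by (intent, failure) and keep the first of each group."""
    groups = defaultdict(list)
    for case in candidates:
        groups[(case["intent"], case["failure"])].append(case)
    merged = []
    for cases in groups.values():
        kept = dict(cases[0])
        # Remember the other wordings instead of storing them as separate cases.
        kept["also_seen_as"] = [c["text"] for c in cases[1:]]
        merged.append(kept)
    return merged

candidates = [
    {"text": "show card fees", "intent": "card_fees", "failure": "invented_tariff"},
    {"text": "where can I see service charges", "intent": "card_fees", "failure": "invented_tariff"},
    {"text": "how much is left on my card", "intent": "balance", "failure": "limit_vs_balance"},
]
merged = merge_by_meaning(candidates)
```

The two fee questions collapse into one case, while the balance question stays separate because the error behind it is different.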

A practical workflow usually looks like this:

- Gather candidate cases from the last week or release into one list.

- Merge duplicates by meaning and remove noisy cases without a clear error.

- For every remaining case, write down what answer the team considers correct.

- Run the case on the current model and save the result as the first comparison point.

It is better to write the expected result as short rules. Not “the answer should be high-quality and complete,” but “the model does not invent a tariff,” “answers in Russian,” “asks for clarification if there is not enough data.” People argue less over rules like that.
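Short rules like these translate naturally into small named checks. The sketch below is only an illustration: the tariff names, the convention of quoting tariff names in «...», and the Cyrillic-share heuristic are all assumptions, and a real team would likely replace them with its own catalog lookup and language detection.

```python
import re

# Hypothetical tariff names; a real check would read them from the product catalog.
KNOWN_TARIFFS = {"Базовый", "Премиум"}

def answers_in_russian(answer):
    """Crude check: most letters are Cyrillic (cannot tell Russian from Kazakh)."""
    letters = [ch for ch in answer if ch.isalpha()]
    cyrillic = [ch for ch in letters if "\u0400" <= ch <= "\u04ff"]
    return bool(letters) and len(cyrillic) / len(letters) > 0.5

def does_not_invent_tariff(answer):
    """Every tariff name quoted in «...» must be a known one."""
    quoted = set(re.findall(r"«([^»]+)»", answer))
    return quoted <= KNOWN_TARIFFS

RULES = {
    "answers in Russian": answers_in_russian,
    "does not invent a tariff": does_not_invent_tariff,
}

def check(answer):
    """Return a pass/fail flag per rule, so a failure names the broken rule."""
    return {name: rule(answer) for name, rule in RULES.items()}

# "The «Gold» tariff costs 500 tenge per month." (Gold is not a known tariff.)
result = check("Тариф «Золотой» стоит 500 тенге в месяц.")
```

Here the answer passes the language rule and fails the tariff rule, which is easier to discuss than a single "bad answer" verdict.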

Add tags right away. Usually four are enough: task type, risk, language, and source. Task type separates summarization from data extraction. Risk shows the cost of an error. Language helps you avoid losing mixed Russian, Kazakh, and English requests. Source shows where the case came from: production, ticket, or manual test.

Do not improve or “polish” the first run. Save it as is. Two weeks later, that first run will show whether things improved or whether the team simply replaced one hard request with another one that is easier to test.
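One way to protect the first run is to make the baseline write-once. This is a minimal in-memory sketch; the function name, the dictionary store, and the sample outputs are hypothetical.

```python
def record_baseline(baselines, case_id, raw_output, model):
    """Store the first run for a case; later runs never overwrite it."""
    if case_id not in baselines:
        baselines[case_id] = {"model": model, "output": raw_output}
    return baselines[case_id]

baselines = {}
record_baseline(baselines, "K-184", "Your limit is 500 000.", "model-a")
# A later, better-looking run does not replace the stored baseline.
first = record_baseline(baselines, "K-184", "Your available balance is 120 000.", "model-a")
```

Later runs would go into a separate history, so the comparison point stays the unpolished original.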

How to keep complex user requests from getting lost

Most unpleasant failures do not come from clean test prompts, but from live conversations. The user writes at length, mixes two tasks into one message, changes conditions on the fly, and refers to “that contract above.” If the set does not catch those cases, the quality on paper looks better than it really is.

Do not cut out a single “bad” reply from a chat. Keep the whole conversation: the first question, the clarifications, the model’s refusal, the retry, and the final answer from the operator or expert. The error often starts not in the last message, but two turns earlier, when the model misunderstood the role, constraint, or answer format.

What to tag in a complex case

Every such example needs a short reason label. One or two words is usually enough for the team: long context, two intents, ambiguous reference, conflicting instructions, money risk, or compliance risk. Without that note, the case quickly becomes “just another weird chat,” and a month later no one will remember why it is in the set.

Rare, expensive errors are better kept separately. They do not happen every day, but one mistake can give a customer bad advice, break a document flow, or send the answer in the wrong format. It is useful to keep a small incident queue and review it after each release.

Once an incident happens, a short cycle is enough: save the full conversation, mark where the model went off track, attach the expected answer or the correct strategy, add a complexity and risk label, and then return the case to the main run. That way, complex user requests do not sit off to the side “for later”; they keep testing the system alongside regular cases.

This is especially noticeable for banks with long flows about tariffs, limits, and documents. A customer may start with one product, then switch to another and ask for a comparison in a table. If the team saves only the last reply, the test becomes too easy. If it keeps the full conversation and the reason it was hard, the case stays useful even six months later.

When to send a case to the archive

An archive is not for old files, but for cases that no longer test a live risk. If an example no longer affects the release decision, it does not belong in the active part of the set. But deleting it entirely is not a good idea either: sometimes the team needs to understand why it was testing that case in the first place.

The signals are usually quite clear. A case should go to the archive if the task has left the product or the real process, the answer rules have changed completely, the example has not caught a single error across several releases, or it almost fully duplicates a newer case.
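These four signals can be written down as one small function. The sketch assumes hypothetical field names and treats "several releases" as three, which is an arbitrary choice for illustration.

```python
def archive_reason(case, quiet_releases=3):
    """Return why a case should be archived, or None to keep it active."""
    if not case["feature_live"]:
        return "task left the product"
    if case["rules_rewritten"]:
        return "answer rules changed completely"
    if case["duplicate_of"]:
        return f"duplicates newer case {case['duplicate_of']}"
    # caught_error holds one bool per release, oldest first.
    recent = case["caught_error"][-quiet_releases:]
    if len(recent) == quiet_releases and not any(recent):
        return f"no errors caught in the last {quiet_releases} releases"
    return None

quiet = {"feature_live": True, "rules_rewritten": False, "duplicate_of": None,
         "caught_error": [True, False, False, False]}
useful = dict(quiet, caught_error=[False, False, True])
removed = dict(quiet, feature_live=False)
```

Returning the reason as text, not just a flag, means the same string can go straight into the archive note.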

The first situation is more common than it seems. The team removed a scenario from the chatbot, but the test remained. Formally it still passes, but it no longer adds anything to quality evaluation. It only inflates the set and hides current issues.

If the rules have changed completely, do not try to repair the old case piece by piece. When lawyers, the product team, or support have rewritten the answer from scratch, it is better to create a new example with a new baseline and move the old one to the archive. Otherwise, the active set mixes different product versions, and the argument about the result repeats on every release.

The rule for duplicates is simple. If two cases almost always fail and pass together, one is unnecessary. Keep the one with the more precise risk description. But if the wording is very different and the model only makes a mistake in one of them, that is not a duplicate.
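"Fail and pass together" can be measured from run history. The sketch below assumes each case has a pass/fail list with one entry per release; the 0.9 threshold and the histories are made up for illustration, and a high score is a hint for review, not an automatic verdict.

```python
def agreement_rate(history_a, history_b):
    """Share of releases where two cases had the same pass/fail result."""
    if len(history_a) != len(history_b) or not history_a:
        raise ValueError("histories must be non-empty and cover the same releases")
    same = sum(a == b for a, b in zip(history_a, history_b))
    return same / len(history_a)

def likely_duplicates(history_a, history_b, threshold=0.9):
    return agreement_rate(history_a, history_b) >= threshold

# True = passed, False = failed, one entry per release (made-up histories).
fees_v1 = [True, True, False, True, False, True, True, False]
fees_v2 = [True, True, False, True, False, True, True, False]
limits = [True, False, True, True, True, True, False, False]
```

With these histories, `fees_v1` and `fees_v2` move together on every release, while `limits` agrees with `fees_v1` only half the time and should stay as its own case.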

An archive without a reason and a date quickly becomes the same clutter, just in a different folder. So record at least the transfer date, the reason, who made the decision, and what replaced the example, if anything did. A short note is enough: “15.05.2026, scenario removed from onboarding, replaced with case K-184.”
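A fixed record type keeps such notes uniform. This is a sketch with hypothetical field names; the archived case ID `K-091` and the `set owner` value are invented for the example.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass(frozen=True)
class ArchiveNote:
    case_id: str
    moved_on: date
    reason: str
    decided_by: str
    replaced_by: Optional[str] = None  # None when nothing replaced the case

    def summary(self) -> str:
        """Render the short note in day.month.year form."""
        text = f"{self.moved_on.strftime('%d.%m.%Y')}, {self.reason}"
        if self.replaced_by:
            text += f", replaced with case {self.replaced_by}"
        return text

note = ArchiveNote("K-091", date(2026, 5, 15), "scenario removed from onboarding",
                   "set owner", "K-184")
```

Because every field except `replaced_by` is required, a case cannot be archived without a date, a reason, and a name.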

That way, the active set stays short and practical, while the archive keeps a history of decisions instead of trash.

How to document a case so the whole team understands it

If a case cannot be understood in a minute, it is almost useless. A month later, no one will remember why the request was added to the set, what exactly was failing, or what made the answer good.

Teams often store only the request text and a “passed” or “failed” label. That is not enough. The same question can behave differently in support chat, in a mobile app, and in an internal assistant for employees. Without context, the argument starts over with every release.

It is better to format a case as a short card with fixed fields. You do not need every thought the team had, only the information another person needs to reproduce the check:

- the original user request, without rewriting it “for clarity”;

- context: the channel, system role, and prompt or chain version;

- the expected result, as a golden answer or a short checklist;

- tags for complexity, risk, language, and task type;

- the case status: active, under review, or archived.

It is better to keep the original request exactly as it is, even if it has mistakes, slang, or broken phrases. Those are exactly the kinds of formulations that most often break the system. If an editor “improves” the text, you are no longer testing the real user scenario, but a convenient version for the team.

The expected result is also better kept precise. For a simple case, one correct answer is enough. For a complex one, a short checklist works better: the model does not invent a fact, answers in Russian, does not reveal personal data, asks for clarification when data is missing. That format is easier to maintain than a long ideal sample.

Tags are not for neatness on paper, but for search. Later, they help you quickly gather all risky cases in Kazakh, all voice requests from the call center, or all long conversations with customer complaints. If there are too many tags, people stop using them. Usually 4-6 clear categories are enough.

For example, a bank team’s card might look like this: the customer writes with mistakes and asks to “show the latest card charges for my wife”; channel — chat; language — Russian; risk — high; prompt version 12; check — the assistant does not reveal someone else’s data and asks for identity verification. Even a new employee will immediately understand what this case is testing.
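The same card can be stored as a small typed record. This is one possible shape, not a required schema: the class name, field names, and the case ID `K-201` are hypothetical, while the values come from the bank example above.

```python
from dataclasses import dataclass, field

STATUSES = {"active", "under review", "archived"}

@dataclass
class CaseCard:
    case_id: str
    request: str          # original user text, never edited "for clarity"
    channel: str
    language: str
    risk: str
    prompt_version: int
    checks: list = field(default_factory=list)
    status: str = "active"

    def __post_init__(self):
        # Reject cards that another person could not reproduce or filter.
        if self.status not in STATUSES:
            raise ValueError(f"unknown status: {self.status!r}")
        if not self.checks:
            raise ValueError("a card needs at least one expected-result check")

card = CaseCard(
    case_id="K-201",
    request="show the latest card charges for my wife",
    channel="chat",
    language="Russian",
    risk="high",
    prompt_version=12,
    checks=[
        "does not reveal someone else's data",
        "asks for identity verification",
    ],
)
```

Validation at creation time is a design choice: a card without checks or with an unknown status fails immediately instead of surfacing as confusion at release time.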

When the team keeps these cards in the same format, discussions become shorter. People stop arguing about wording in a table and start talking about the quality of the answer itself.

Example: how a bank team updates the set after a release

After one release, the bank chat started confusing the credit limit with the available balance. The error looked small, but the difference matters to customers: the limit shows the upper boundary, while the available balance changes after purchases, holds, and payments.

The team did not rush to tweak prompts blindly. First, it pulled support tickets from the last week and selected conversations where people complained about card amounts. It quickly became clear that the problem repeated in similar wording, but not in exactly the same way.

The team did not take everything from that batch. The updated set included only different types of requests: a direct question like “how much do I have left on my card?”, a follow-up after a purchase when the amount had already changed, a conversational request like “what’s my remaining balance?”, a conversation where the customer first asked about the limit and then the balance, and a case with a short message and no context.

That way, the set grew by only a few cases, not dozens of nearly identical examples. The team documented each case with the expected answer and a short note about where the model usually fails.

They did not delete the old examples from the previous scenario. Earlier, the chat more often confused the overall limit with the credit line in tariffs, but after the product change those conversations became rare. The team moved them to the archive and added the date, reason, and the tag “outdated after release.” If the error comes back, the example can easily be moved back into the active set.

Then they ran the updated set on two models and compared not only the overall failure rate, but also the types of mistakes. One model more often gave a confident but wrong answer about the balance. The other more often asked for clarification, but mixed up the limit and the available amount less often. That is more useful than a single average number: the team can see where the model is dangerously inventing things and where it is simply losing context.
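Comparing by error type needs little more than a counter. The results below are made up to illustrate the idea and are not measurements; the error labels and model names are hypothetical.

```python
from collections import Counter

def error_profile(results):
    """Count failures by error type; passing cases have error=None."""
    return Counter(r["error"] for r in results if r["error"] is not None)

# Made-up results for illustration only.
runs = {
    "model-a": [
        {"case": "K-301", "error": "confident_wrong_balance"},
        {"case": "K-302", "error": "confident_wrong_balance"},
        {"case": "K-303", "error": None},
        {"case": "K-304", "error": "unneeded_clarification"},
    ],
    "model-b": [
        {"case": "K-301", "error": "unneeded_clarification"},
        {"case": "K-302", "error": None},
        {"case": "K-303", "error": "unneeded_clarification"},
        {"case": "K-304", "error": "confident_wrong_balance"},
    ],
}
profiles = {model: error_profile(results) for model, results in runs.items()}
```

In this toy data both models fail three cases out of four, so the overall failure rate is identical, yet the profiles differ: one mostly gives confident wrong answers and the other mostly over-asks.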

A week later, they no longer had a pile of random conversations, but a working set. Complex requests were not lost, old noise moved to the archive, and the new behavior after the release became visible right away.

Common mistakes when maintaining the set

The most common mistake is boring but expensive: the team adds only short and convenient examples. They are easy to read, quick to run, and simple to discuss. The problem is that these cases rarely catch real failures. The model almost always handles them fine, then falls apart on long, messy, and emotional requests from live work.

Because of that, quality evaluation starts to paint a pretty but empty picture. If the set has no complex conversations, mixed intents, incomplete context, or strange wording, the result drifts away from production. It is better to keep a few inconvenient examples than twenty smooth, predictable ones.

The second mistake is with the expected answer. Teams often write it too vaguely: “the answer should be useful,” “the model should not make mistakes,” “a polite tone is needed.” A case like that is almost impossible to check. One reviewer will count it as passing, another will not, and a third will understand the task differently altogether.

A good case sets clear boundaries. What exactly should the model do? Which facts must not be distorted? What counts as an error? If the request asks for a short summary in 5 sentences with no invented details, write that down. The fewer guesses the reviewer has to make, the cleaner the result.

Another common problem is mixing everything into one case: style, facts, safety, answer format, and refusal handling. Then it is not clear why the example failed. Did the model get the substance wrong, or just choose the wrong tone? Did it violate a safety rule or fail to fit the structure?

That kind of case is better split into several checks, each with one main goal. Otherwise, complex requests do not help debugging — they only confuse the team.

Tag chaos creates a lot of noise too. Today a case is tagged “finance,” tomorrow “banking,” and next week “risk.” If there are no shared rules, filters break, selections drift, and old results are no longer comparable. The tag dictionary should be short and fixed. If the team needs a new tag, it first agrees on what it means and when to use it.
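A fixed tag dictionary is easy to enforce in code. The categories below follow the four tags suggested earlier (task type, risk, language, source); the allowed values are assumptions for the example.

```python
# Hypothetical shared vocabulary; changing it should be a team decision.
TAG_DICTIONARY = {
    "task": {"summarization", "extraction", "qa"},
    "risk": {"low", "medium", "high"},
    "language": {"ru", "kk", "en", "mixed"},
    "source": {"production", "ticket", "manual"},
}

def tag_problems(tags):
    """Return a list of problems; an empty list means the tags are valid."""
    problems = []
    for key, value in tags.items():
        if key not in TAG_DICTIONARY:
            problems.append(f"unknown tag '{key}'")
        elif value not in TAG_DICTIONARY[key]:
            problems.append(f"'{value}' is not allowed for '{key}'")
    for key in TAG_DICTIONARY:
        if key not in tags:
            problems.append(f"missing tag '{key}'")
    return problems

problems = tag_problems({"task": "qa", "risk": "high", "language": "ru", "topic": "banking"})
```

Running this check when a case is added catches an ad hoc tag such as `topic` before it breaks filters.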

There is one more harmful habit: bad cases get deleted. The set may look cleaner, but the team loses the trace of problem scenarios and forgets which requests broke the model before. It is much more useful to send such examples to the archive with a reason and a date. Then they can be brought back easily if a similar error appears again.

A quick weekly check

The set needs a short check once a week; otherwise it quickly grows and starts getting in the way instead of helping. Usually 20-30 minutes is enough if you look at a few clear signals.

The point of this check is not to rewrite the whole dataset. You need to understand whether it is still alive: whether it is getting new real scenarios, whether it still contains useful old examples, and whether the team is dragging the same case in under different names.

A short checklist works well for this review:

- count fresh examples from the last 7 days;

- find scenarios that have not caught errors for a long time;

- merge duplicates by meaning, not by exact wording;

- check that tags are still being used consistently;

- pull in a few of the hardest support cases from the week.
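The first two checklist items can be computed before the meeting. This sketch assumes each case stores a hypothetical `added` date and a `runs_since_last_catch` counter; treating five quiet runs as "a long time" is an arbitrary threshold.

```python
from datetime import date, timedelta

def weekly_report(cases, today, stale_after_runs=5):
    """List cases added in the last 7 days and cases that stopped catching errors."""
    week_ago = today - timedelta(days=7)
    fresh = [c["id"] for c in cases if c["added"] >= week_ago]
    stale = [c["id"] for c in cases if c["runs_since_last_catch"] >= stale_after_runs]
    return {"fresh": fresh, "stale": stale}

cases = [
    {"id": "K-401", "added": date(2026, 5, 12), "runs_since_last_catch": 0},
    {"id": "K-402", "added": date(2026, 1, 10), "runs_since_last_catch": 7},
    {"id": "K-403", "added": date(2026, 3, 2), "runs_since_last_catch": 2},
]
report = weekly_report(cases, today=date(2026, 5, 15))
```

An empty `fresh` list is itself a signal: the set has stopped receiving real scenarios.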

A good sign is that the review ends with concrete actions: one case was added, two were merged, one was sent to the archive, one tag was removed. If the meeting ends with only a note that “we should go over it later,” the set will keep collecting junk.

It also helps to look at where the new examples come from. If all cases come only from the ML team, the picture becomes too smooth. Support, sales, and implementation bring very different material: odd phrasing, mixed languages, incomplete data, and abrupt topic changes in one message.

For a golden set, this weekly check works better than a rare big cleanup. A major review is almost always postponed. A short rhythm keeps the set in shape and prevents you from losing the requests where the model truly breaks.

What to do next

A golden set only works well when someone owns it. If the set is “shared by everyone,” then usually no one really owns it: disputed cases pile up, old examples never move to the archive, and new incidents get lost in chats and tickets.

It is better to assign one owner and give them a simple rhythm right away. For most teams, a short weekly review and a slower, more thorough monthly review are enough. At the weekly meeting, the owner adds new cases that have already affected the product. At the monthly one, they clean up duplicates, move outdated cases, and check whether the set has tilted too heavily toward one category.

There should also be clear rules for moving cases between the active set and the archive. The active set keeps what may break again after a release, a model change, or a prompt edit. The archive keeps examples that no longer reflect the current product, that follow an old answer policy, or that no longer show up in live traffic.

If you want a simple policy, three rules are enough. Every case should have a status: active, under review, or archived. A new case goes into the active set if the error reached a user, happened at least twice, or belongs to a risky scenario. The archive is not deleted: you return to it when you change the model, prompt, or moderation rules.
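The admission rule fits in one function. The field names are hypothetical; the three conditions are the ones listed above.

```python
def goes_to_active_set(incident):
    """A new case is activated if any one of the three conditions holds."""
    return bool(
        incident["reached_user"]
        or incident["occurrences"] >= 2
        or incident["risky_scenario"]
    )

one_off = {"reached_user": False, "occurrences": 1, "risky_scenario": False}
repeated = {"reached_user": False, "occurrences": 2, "risky_scenario": False}
risky = {"reached_user": False, "occurrences": 1, "risky_scenario": True}
```

A one-off internal glitch that never reached a user stays out, which keeps the active set from filling with noise.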

Manual collection almost always breaks at scale. That is why incident exports, support complaints, and disputed conversations are better automated. It also helps to run the set on a schedule, for example after every release and once a week at night. Then the team sees a stable picture instead of isolated impressions.

If you compare several models, it is useful to run the same set under the same conditions through a single gateway. For example, in AI Router on airouter.kz, the team can replace base_url with api.airouter.kz and use the same SDKs, code, and prompts without separate plumbing for each provider.

A good first step this week is simple: assign an owner, approve two rules for the archive and the active set, and then automatically pull in the last 20 incidents. That alone is enough to stop the set from sprawling.