Enriching Product Listings with Small Models Without Extra Cost

Product listing enrichment can be handled by a small local model when you need attributes, tags, and short descriptions without complex generation.

Why product listings lose quality so quickly

Product card quality does not drop because of one big mistake. Catalogs are usually damaged by small inconsistencies that pile up every day. Today one supplier sent a clean spreadsheet, tomorrow another uploaded a set of fields with gaps, and a week later a manager added part of the data by hand.

The problem starts at the input. Suppliers send data in different formats: sometimes color lives in its own field, sometimes it is hidden in the name, and sometimes it is marked as “assorted.” The same attribute is written in different ways: “black”, “Black”, “BLK”, “blk”. For a person, these are the same thing. For a catalog, they are not.

Very often, one product name mixes everything at once: brand, product type, color, size, material, and SKU. For example: “Nike Hoodie M Black Cotton 2024”. If nobody breaks that line into fields, the store gets a card where the attributes seem to exist, but filters and search cannot see them.

Manual entry quickly adds more chaos too. One manager writes “XL”, another writes “50”, and a third leaves the field empty because the size is already in the name. The same thing happens with materials, seasons, and style. Within a month, several rules are already living in the catalog, and none of them is fully followed.

This does not hurt only the product cards. A shopper opens the color filter and does not see part of the assortment. A search for “blue linen shirt” misses items where the color stayed in the name and the material was never filled in. The store loses impressions not because the product is missing, but because the catalog is described unevenly.

There is also a less visible problem. Errors rarely show up right away. A card looks acceptable: the photo is there, the price is there, the name looks normal. But when the assortment grows to thousands of SKUs, gaps and inconsistency start breaking search results, analytics, and ad feeds.

That is why product quality depends not on a pretty template, but on data discipline. If the input is dirty and different people fill the fields the way they prefer, the catalog almost always drifts apart.

What a small local model does well

A small local model works well where the product does not need to be understood deeply, but simply broken down according to clear rules. If the name and specifications already contain a signal, the model can quickly pull out the brand, material, volume, weight, size, and other simple attributes.

For product cards, that covers the most boring and repetitive part of the work. The team does not rewrite thousands of items by hand and instead gets clean fields for filters, search, and description templates.

A good example is apparel and household goods. From a line like “Women’s T-shirt cotton 95%, elastane 5%, size M, black”, the model usually assembles a clean set of fields without trouble: women’s T-shirt, size M, material “cotton 95%, elastane 5%”, color “black”.

It behaves just as reliably in categories where tags are built by simple rules. If a product belongs to the “coffee” category, the model can assign tags like “whole bean”, “250 g”, and “medium roast” when those traits appear in the text or table.

Short descriptions also sit within its comfort zone. This is not about a long sales pitch, but about one or two sentences without invention: what the product is, what it is for, what it is made of, and how it differs in shape or volume.

Normalizing the small details that damage the catalog every day is especially useful. The model turns “ml”, “mL”, and “milliliters” into one form, turns “size”, “sz.”, and “Size” into a single size format, evens out brand and series abbreviations, and removes noise such as extra symbols and duplicates in the name.
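The unit clean-up described above can also be pinned down with plain rules and used as a cross-check on the model's output. This is a minimal sketch, assuming a hand-made alias table; `UNIT_ALIASES` and `normalize_quantity` are illustrative names, not part of any library.

```python
import re
from typing import Optional

# Hypothetical alias table: every spelling on the left maps to one canonical unit.
UNIT_ALIASES = {
    "ml": "ml", "milliliter": "ml", "milliliters": "ml",
    "l": "l", "liter": "l", "liters": "l",
    "g": "g", "gr": "g", "gram": "g", "grams": "g",
    "kg": "kg", "kilogram": "kg", "kilograms": "kg",
}

# A number (optionally with a decimal part) followed by optional spaces and a unit word.
_QUANTITY = re.compile(r"(\d+(?:[.,]\d+)?)\s*([A-Za-z]+)")


def normalize_quantity(text: str) -> Optional[str]:
    """Return the first known quantity as '<number> <unit>', or None if nothing matches."""
    for number, unit in _QUANTITY.findall(text):
        canonical = UNIT_ALIASES.get(unit.lower())
        if canonical:
            return f"{number.replace(',', '.')} {canonical}"
    return None


print(normalize_quantity("Shampoo 250ML"))        # 250 ml
print(normalize_quantity("Coffee beans 0,5 kg"))  # 0.5 kg
```

A rule like this does not replace the model, but when the two disagree on a volume or weight, that card is a good candidate for review.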

On large batches, such a model is usually noticeably faster than a large one. That matters when you need to process not 500 cards, but 50,000 overnight, without waiting in line for an expensive external model.

If the team needs in-country storage and low latency, this setup is especially convenient with locally hosted open-weight models. AI Router has its own hosting for such models, so the flow with attributes, tags, and short descriptions can stay closer to internal systems without complicating the setup for no reason.

In short, a small model is best where the task is more like careful sorting than product expertise.

Where a local model starts making mistakes

A local model is useful as long as the task is narrow: take explicit data and put it in order. Problems begin when people expect guesses from it. If the feed does not contain material, volume, composition, or exact compatibility, the model will not recover the truth. It will supply the most likely option.

You can see that clearly in a simple example. A jacket card contains only the name “men’s winter jacket with a hood” and an SKU. The model may add “water-repellent fabric”, “synthetic insulation”, or “temperature rating down to -20”. The text sounds believable, but that is already invention. For a store, that means extra returns and complaints.

Rare products break the picture even faster. If the model has barely seen soldering kits, medical consumables, or narrow B2B items, it leans toward familiar templates. As a result, tags become too generic and attributes drift into the wrong category. For mass-market apparel, the mistake may be tolerable. For a rare niche, it hurts search and filters.

Complex categories also suffer. Small models often write short descriptions as if they were universal text for any card: “comfortable”, “practical”, “suitable for everyday use”. That can still pass for a T-shirt. For auto parts, cosmetics with active ingredients, or children’s goods, such text is almost useless. It explains nothing to the buyer and helps search results very little.

Another weak point is long compatibility rules. When you need to account for model year, revision, mount size, regional version, and exceptions, the model loses part of the conditions. It may shorten a sentence in a way that changes the meaning. For example, instead of “fits models 2021–2023, except Pro version”, you end up with just “fits models 2021–2023”.

Warning signs usually look like this: the card now has characteristics that were not in the source data; a rare item received the same tags as a mass category; the description sounds like it could be pasted into a thousand other cards; the compatibility block became shorter than the original specification.
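The last warning sign, a compatibility block that shrank, is easy to check mechanically. The sketch below is one rough way to do it, assuming a small list of marker words and a length threshold; both `EXCEPTION_MARKERS` and the `0.8` ratio are assumptions to tune on your own data, and plain substring matching will produce some false alarms.

```python
# Hypothetical marker words that usually introduce an exception or a restriction.
EXCEPTION_MARKERS = ("except", "excluding", "not compatible", "only", "unless")


def lost_exceptions(source: str, output: str) -> list[str]:
    """Return marker words present in the source text but missing from the model output."""
    src, out = source.lower(), output.lower()
    return [marker for marker in EXCEPTION_MARKERS if marker in src and marker not in out]


def compatibility_needs_review(source: str, output: str, min_ratio: float = 0.8) -> bool:
    """Flag the card when an exception marker vanished or the text shrank noticeably."""
    shrank = len(output) < min_ratio * len(source)
    return bool(lost_exceptions(source, output)) or shrank


source = "fits models 2021–2023, except Pro version"
output = "fits models 2021–2023"
print(lost_exceptions(source, output))             # ['except']
print(compatibility_needs_review(source, output))  # True
```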

If you build product enrichment on a small model, it is better to give it extraction and normalization, not guesses. Anything that affects the promise to the buyer should be checked against the source or handled by separate rules.

What data to pass in as input

Raw data almost always hurts the result more than the model itself. Even a good local model for attributes will produce a weak outcome if you send it only a two-word product name and noisy supplier text.

A minimal set is usually this: product name, full description, short image captions or alt text, if they exist. That is already enough for the model to extract basic attributes, assign tags, and assemble a short description without unnecessary imagination.

Category and brand are also better passed explicitly as separate fields. That narrows the word choice a lot. If the model knows it is looking at not just “Nike Air”, but “sneakers” from Nike, it is less likely to mix up the product type, gender, season, and style.

An even better approach is not a free-form request, but a strict framework. Give the model a list of allowed attributes and pre-approved values. For apparel, that might be color, composition, season, fit, sleeve length, and closure type. For color, a list like “black, white, beige, blue” is more useful than asking it to “figure out the color itself”. That reduces mistakes before launch, not after.
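A strict framework of this kind can be generated from the dictionary itself, so the prompt and the catalog never drift apart. The following is a sketch under assumptions: the `ALLOWED` values, the prompt wording, and the `build_attribute_prompt` helper are all hypothetical and should be adapted to your categories.

```python
import json

# Hypothetical dictionary for an apparel category; real values come from your catalog.
ALLOWED = {
    "color": ["black", "white", "beige", "blue"],
    "season": ["summer", "winter", "all-season"],
}


def build_attribute_prompt(name: str, category: str, brand: str, description: str,
                           allowed: dict[str, list[str]]) -> str:
    """Assemble an extraction prompt that lists every attribute with its allowed values."""
    rules = "\n".join(
        f'- {attr}: one of {json.dumps(values)} or ""'
        for attr, values in allowed.items()
    )
    return (
        "Extract attributes from the product data. Use only the allowed values.\n"
        "If the source does not state a value, return an empty string.\n"
        f"Allowed values:\n{rules}\n"
        f"Category: {category}\nBrand: {brand}\nName: {name}\nDescription: {description}\n"
        "Answer with a JSON object whose keys are exactly the attribute names."
    )


print(build_attribute_prompt("Women's oversize shirt", "shirts", "ExampleBrand",
                             "long sleeve, cotton, white color", ALLOWED))
```

Telling the model explicitly that an empty string is an acceptable answer matters as much as the value list: it gives the model a legal way out when the source is silent.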

A set of examples helps too. For each category, show 5–10 good examples in the response format you want. One example for shoes, one for T-shirts, and one for bags is a weak setup. The model holds style and structure better when the examples are similar to the current product.

If you have an apparel flow, give the model this context: product name “Women’s oversize shirt”, category “shirts”, brand, supplier description, image caption “long sleeve, cotton, white color”, and a list of allowed values for color, material, and silhouette. In that format, it usually returns clean JSON and does not start guessing things that are not in the card.

Before launch, the input data should be cleaned up. Remove duplicates, HTML fragments, service SKUs in the description, junk words like “new top hit”, and merged fields from exports. If your catalog has three identical descriptions with different SKUs, the model will start repeating old mistakes quickly and at scale.
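Most of this clean-up can be done with a few lines of standard-library code before anything reaches the model. The sketch below assumes cards are dictionaries with `name` and `description` keys; the `JUNK_PHRASES` list is a placeholder to extend from your own supplier feeds. It deduplicates the model input only and does not delete anything from the catalog.

```python
import html
import re

# Hypothetical junk phrases; extend the list from your own supplier feeds.
JUNK_PHRASES = ("new top hit", "best price")


def clean_text(raw: str) -> str:
    """Strip HTML tags and entities, drop junk phrases, collapse whitespace."""
    text = re.sub(r"<[^>]+>", " ", raw)   # remove tags such as <p> or <br/>
    text = html.unescape(text)            # decode entities such as &amp;
    for phrase in JUNK_PHRASES:
        text = re.sub(re.escape(phrase), " ", text, flags=re.IGNORECASE)
    return re.sub(r"\s+", " ", text).strip()


def drop_duplicates(cards: list[dict]) -> list[dict]:
    """Keep the first card for each cleaned (name, description) pair."""
    seen, unique = set(), []
    for card in cards:
        key = (clean_text(card["name"]).lower(), clean_text(card["description"]).lower())
        if key not in seen:
            seen.add(key)
            unique.append(card)
    return unique


print(clean_text("<p>Cotton &amp; linen shirt <b>NEW TOP HIT</b></p>"))  # Cotton & linen shirt
```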

The cleaner the input and the tighter the dictionary, the less manual correction you will need later. For a small store, that saves hours. For a large catalog, it saves days.

How to launch it step by step

At first, do not try to fill everything at once. It is better to choose 3–5 fields where the answer can be checked against the source in a minute. For the first launch, attributes with a clear set of values usually work well: color, material, season, product type.

Start by asking the model to fill only the attributes. A local model usually performs more consistently if it has a list of allowed options and a strict response format. Add tags in the second step, and the short description in the third, once the basic fields are ready and the text has something to rely on.

One response format removes a lot of unnecessary manual work. If the model returns JSON for one product, plain text for another, and mixes attributes with descriptions for a third, the pipeline will start to fall apart. It is better to lock in one schema from the start and use it for all categories, even if some fields remain empty for now.

    {
      "attributes": {"color": "", "material": "", "season": ""},
      "tags": [],
      "short_description": "",
      "needs_review": false
    }
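Locking in the schema only helps if every answer is actually checked against it. Here is a minimal validation sketch using only the standard library; the `EXPECTED_TYPES` table mirrors the four keys of the schema above, while the function name and the choice to return `None` on any mismatch are assumptions.

```python
import json
from typing import Optional

# Top-level keys of the response schema and the Python type each must decode to.
EXPECTED_TYPES = {
    "attributes": dict,
    "tags": list,
    "short_description": str,
    "needs_review": bool,
}


def parse_response(raw: str) -> Optional[dict]:
    """Return the parsed answer if it matches the schema exactly, otherwise None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or set(data) != set(EXPECTED_TYPES):
        return None
    for key, expected in EXPECTED_TYPES.items():
        if not isinstance(data[key], expected):
            return None
    return data


print(parse_response("Sure! Here is the JSON you asked for"))  # None
```

An answer that fails this check is usually cheaper to retry or send to review than to repair by hand.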

After that, test everything on a small sample. Do not take 10 perfect cards from one supplier. Take 100–200 products with different data quality so you can see the real errors before you launch into the catalog.

From there the process is simple: run the test sample through the same prompt, check the results by hand, note the common mistakes, add rules for ambiguous cases, and only then enable mass processing. Answers that look questionable are better sent straight to manual review.

It is easier to catch borderline answers with simple rules. If a value is not in the dictionary, conflicts with the source text, or the model left the field empty, a person checks the card. That is cheaper than cleaning the catalog later because of wrong filters and odd descriptions.
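These three rules translate almost directly into code. The sketch below is deliberately crude: "conflicts with the source text" is approximated as "the value does not literally appear in the source", which will over-flag synonyms and translated values. The function name and the reason strings are illustrative.

```python
def review_reasons(attributes: dict, source_text: str,
                   allowed: dict[str, list[str]]) -> list[str]:
    """List the reasons a card should go to a person; an empty list means auto-accept."""
    reasons = []
    source = source_text.lower()
    for field, options in allowed.items():
        value = str(attributes.get(field) or "").strip().lower()
        if not value:
            reasons.append(f"{field}: empty")
        elif value not in options:
            reasons.append(f"{field}: '{value}' is not in the dictionary")
        elif value not in source:
            reasons.append(f"{field}: '{value}' does not appear in the source text")
    return reasons


allowed = {"color": ["black", "white"], "season": ["summer", "winter"]}
print(review_reasons({"color": "navy", "season": ""}, "Men's winter jacket", allowed))
# ["color: 'navy' is not in the dictionary", 'season: empty']
```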

Do not look only at accuracy. It is useful to measure how many cards went to manual review and how much time the team saved in one flow. If the model reliably covers most simple fields and keeps the format stable, the launch can already be considered successful.

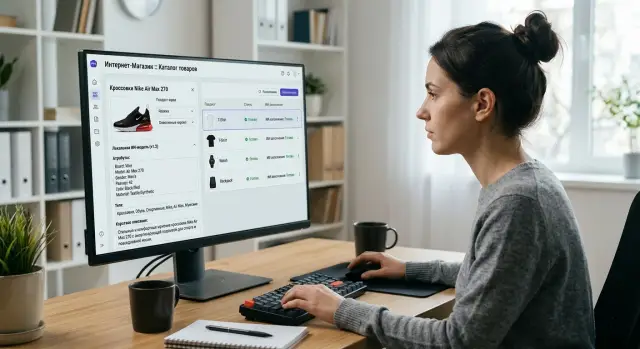

Example from an apparel flow

Apparel has many repetitive cards, and this is exactly the kind of case where a small local model can often be used without unnecessary risk. A supplier often sends a messy line instead of proper fields: SKU, fragments of words, abbreviations, sometimes a mix of Russian and English.

For example, the export may contain: “Women’s tee oversize milky 95cot 5el L art 1842”. A person understands the meaning, but you should not put that line into the catalog as is. The model breaks it into simple attributes and places them in the right fields.

The store then gets not raw text, but a structure: gender “women’s”, material “cotton 95%, elastane 5%”, color “milky”, size L, and product type “T-shirt”.

After that, the same model can assign catalog and search tags. Usually a short, no-nonsense set is enough: “women’s clothing”, “T-shirt”, “oversize”, “cotton”, “basic”. That is better than a long list of nearly identical tags that only clutters filters.

A short description can also be generated locally if the task stays narrow. A reasonable version would sound like this: “Women’s loose-fit cotton T-shirt with a touch of elastane. Milky color, size L.” It has meaning, but no ad noise, no empty promises, no claims that are missing from the source line, and no random words about “premium”.

This kind of flow works well in a semi-automated mode. The model processes the entire supply batch at once, and the operator only checks the borderline items. Those are usually products with empty fields, rare abbreviations, or conflicts in the data, such as one color in the line and another in the supplier table.

For apparel, the effect is visible from the first batch. The team does not spend a day filling in hundreds of cards by hand, search works better with material and cut, and the catalog looks cleaner. If the model runs locally, the store also does not send product data to external services unless necessary.

Mistakes teams make most often

The most common mistake is trying to get too much from the model in one call. You give it the product name, a couple of supplier lines, and ask it to return attributes, tags, a short description, SEO text, and even the category. In that mode, a small model starts mixing tasks: where it should extract a fact, it guesses; where it should write text, it drags raw fields into the description.

The second mistake is a free-form answer with no framework. If you do not define the allowed values, the model invents variants on its own: “dark blue”, “blue dark”, “navy”, “blue-black”. For a person, those are almost the same. For a catalog, they are four different values, broken filters, and garbage in analytics.

Problems grow quickly when attribute extraction is mixed with long-text generation in one prompt. Attributes require precision and a strict format. Short descriptions require natural language and allow a little freedom. If you combine both modes, quality usually drops in both directions.

Many teams also test the result too lightly. They look at 20 random cards, see that it is “mostly fine”, and launch the flow. Then it turns out the model breaks on duplicates, abbreviations, and seller jargon. “cotton” and “cot”, “men” and “men’s”, “48 RU” and “L” often drift into different fields even though they mean the same thing.

There is also a less obvious mistake: after every miss, someone rushes to edit the prompt. The next day it changes again. A week later nobody knows which version performed best or why the catalog started behaving inconsistently. For product cards, that is especially unpleasant because old and new records start differing in style and structure too.

A simple discipline usually helps. Separate attribute extraction and text generation into different requests, define dictionaries of allowed values and the response format, test the model on duplicates, abbreviations, and dirty supplier data. It is better to fix the prompt version and change it only after testing on the same set. And always measure not just overall accuracy, but also the share of empty, borderline, and invented values.
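One lightweight way to fix the prompt version is to fingerprint the exact prompt text and store that fingerprint with every enriched record. This is a sketch of that idea, not a required mechanism; the 12-character truncation and the `prompt_version` field name are arbitrary choices.

```python
import hashlib


def prompt_version(prompt_template: str) -> str:
    """Short, stable fingerprint of the exact prompt text."""
    return hashlib.sha256(prompt_template.encode("utf-8")).hexdigest()[:12]


def tag_record(record: dict, prompt_template: str) -> dict:
    """Return a copy of the enrichment record stamped with the prompt version."""
    return {**record, "prompt_version": prompt_version(prompt_template)}


record = tag_record({"sku": "1842", "color": "milky"}, "Extract color. Use only allowed values.")
print(record["prompt_version"])  # 12 hex characters that change with any edit to the prompt
```

With the fingerprint stored next to each card, it becomes possible to say which prompt produced which records and to compare versions on the same test set.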

If a small model makes mistakes rarely, that still does not mean the process is assembled correctly. One extra percent of guesswork in attributes will later cost more than the savings from a cheaper launch.

Quick check before launch

Before a mass rollout, it is better to do a short run on a small set. In a catalog, one tiny mistake quickly spreads across all cards: a wrong tag, an empty attribute, or overly generic text then has to be fixed manually.

Build a gold set of at least 100 products. Do not take only the easy items where everything is obvious; use a mixed slice of the catalog: products with incomplete data, similar names, and different description formats. That kind of set quickly shows where the model is guessing and where it is getting confused.

For each field, predefine the allowed values. If the “color” field is only supposed to accept values from your dictionary, the model should not write “light graphite with a cool undertone” when the system only has “gray”. Leave free text only where it is truly needed, such as for a short product description.

What to check in the test

After the run, look not only at successful examples, but also at the error summary. Usually five metrics are enough: how many fields the model left empty, how many answers were outside the allowed values, how many cases the team marked as borderline, what share of cards went to manual review, and how much it costs to process 1,000 cards including reruns.
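The first four of these metrics can be computed from the test run in a few lines; cost needs pricing inputs and is left out here. The sketch assumes each result is a dictionary with `attributes`, `borderline`, and `needs_review` keys, where `borderline` is the flag the team set during manual checking. That record layout is an assumption, not a fixed format.

```python
def pilot_metrics(results: list[dict], allowed: dict[str, list[str]]) -> dict[str, float]:
    """Summarise a test run as shares of empty, out-of-dictionary, borderline and reviewed."""
    if not results or not allowed:
        raise ValueError("need at least one result and one dictionary field")
    total_fields = empty = out_of_dict = 0
    for result in results:
        for field, options in allowed.items():
            value = result["attributes"].get(field, "")
            total_fields += 1
            if not value:
                empty += 1
            elif value not in options:
                out_of_dict += 1
    cards = len(results)
    return {
        "empty_field_share": empty / total_fields,
        "out_of_dictionary_share": out_of_dict / total_fields,
        "borderline_share": sum(bool(r["borderline"]) for r in results) / cards,
        "manual_review_share": sum(bool(r["needs_review"]) for r in results) / cards,
    }
```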

Borderline answers are better counted separately. They are not always clear errors. For example, the model may assign the tag “sport” to a basic T-shirt, and formally that is not a defect, but for your taxonomy that tag may interfere with filters and search.

Define the manual review path right away: who checks suspicious cards, how much time is spent on each one, and at what threshold the result goes to a person. If a reviewer spends even 20–30 seconds per card, that is already a noticeable cost line on a large flow.

You should calculate cost in advance, not after the pilot. The total includes not only the model call, but also retries, validation, business rules, and manual cleanup. If you are testing local and external models through a single gateway like AI Router, this is easier to calculate: you can see which model gives the needed quality without extra cost.
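A back-of-the-envelope estimate is enough to compare options before the pilot. The function below is a simplified sketch: it counts model calls with retries plus reviewer time, and leaves out validation and business-rule maintenance. All numbers in the example are placeholders, not measured prices.

```python
def cost_per_thousand(model_cost_per_call: float, retry_rate: float,
                      review_share: float, review_seconds: float,
                      reviewer_hourly_rate: float) -> float:
    """Estimated cost of processing 1,000 cards: model calls with retries plus manual review."""
    calls = 1000 * (1 + retry_rate)                          # every retry is one more paid call
    model_cost = calls * model_cost_per_call
    review_hours = 1000 * review_share * review_seconds / 3600
    return model_cost + review_hours * reviewer_hourly_rate


# Placeholder inputs: 0.001 per call, 10% retries, 12% of cards reviewed at 30 s each, rate 10 per hour.
estimate = cost_per_thousand(0.001, 0.10, 0.12, 30, 10)
print(round(estimate, 2))  # 11.1
```

In this made-up example the model calls cost 1.1 and the review time costs 10, which illustrates why the share of cards sent to a person often matters more than the price of the model.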

If your test set has few empty fields, the share of borderline answers is manageable, and the price per thousand cards works for you, you can move on. If not, it is better to narrow the value list once more and clean the input data than to fix the catalog after launch.

What to do next

Do not try to automate the whole catalog right away. To start, one category and two or three fields are enough, especially where a mistake does not break the sale: material, style, season, short description. That way you quickly see where the small model gives a stable result and where it already lacks context.

It is most useful to check this on the same product set. Take, for example, 200 manually labeled cards and run them through both a small local model and a larger external one. Look not only at average accuracy, but also at the type of errors. Often the small model fills attributes and tags well, but gets confused by ambiguous names, mixed bundles, and marketing copy.

After that test, the setup usually becomes simple: the small local model handles the mass flow, the larger external one gets only the complex cases, a person reviews borderline cards from the queue, and extraction rules are updated based on real errors.
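That division of labor can be written as a small routing function. The policy below is one possible reading of the setup, assuming each model pass yields a list of review reasons (however you compute them): a clean answer is accepted, a flagged answer from the small model is retried on the larger one, and a card that is still flagged goes to a person.

```python
from enum import Enum


class Route(Enum):
    ACCEPT = "accept"            # the answer goes to the catalog
    LARGE_MODEL = "large_model"  # rerun the card on the larger external model
    HUMAN = "human"              # put the card into the manual review queue


def route_card(review_reasons: list[str], already_escalated: bool) -> Route:
    """Decide the next step for one card after a model pass."""
    if not review_reasons:
        return Route.ACCEPT
    if not already_escalated:
        return Route.LARGE_MODEL
    return Route.HUMAN


print(route_card([], already_escalated=False))                # Route.ACCEPT
print(route_card(["color: empty"], already_escalated=False))  # Route.LARGE_MODEL
print(route_card(["color: empty"], already_escalated=True))   # Route.HUMAN
```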

That is cheaper than running the whole catalog through an expensive model, and clearer for the team. For most stores, this path is more practical than trying to find one “perfect” model for every case.

If you have a mixed setup where some tasks go to local open-weight models and others to external APIs, it is better not to build a separate wrapper for each route. It is easier to keep one unified entry point for both sides. In that scenario, you can use AI Router or api.airouter.kz: the service provides one OpenAI-compatible endpoint that makes it easy to split traffic between local models and external providers without changing the SDK, code, or prompts.
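With an OpenAI-compatible endpoint, the request body has the same Chat Completions shape for both routes and only the `model` field changes. The sketch below builds the body without sending it; the model identifiers are hypothetical, and the exact URL path and authentication are not given here, so they are left out.

```python
import json


def chat_request(model: str, system_prompt: str, card_text: str) -> dict:
    """Build a Chat Completions style request body; only `model` differs between routes."""
    return {
        "model": model,
        "temperature": 0,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": card_text},
        ],
    }


# Hypothetical model identifiers; use the names your gateway actually exposes.
LOCAL_MODEL = "local-small-model"
EXTERNAL_MODEL = "external-large-model"

system_prompt = "Extract attributes and answer with JSON only."
card_text = "Women's tee oversize milky 95cot 5el L art 1842"

local_body = chat_request(LOCAL_MODEL, system_prompt, card_text)
external_body = chat_request(EXTERNAL_MODEL, system_prompt, card_text)
print(json.dumps(local_body, indent=2))
```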

And one practical step that teams often postpone: keep an error log. Save the prompt, input data, model output, and the editor’s final correction. Within a month, it will become clear which fields fail most often, which rules are outdated, and where short descriptions are better built from a template rather than free text.
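An error log does not need special tooling: an append-only JSON Lines file is enough to start. The sketch below stores the four items listed above and counts which fields editors correct most often; the file layout and function names are assumptions.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_correction(path: Path, prompt: str, input_data: dict,
                   model_output: dict, final_output: dict) -> None:
    """Append one JSON line with everything needed to replay and analyse the error."""
    entry = {
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "input": input_data,
        "model_output": model_output,
        "final_output": final_output,
    }
    with path.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(entry, ensure_ascii=False) + "\n")


def most_corrected_fields(path: Path) -> dict[str, int]:
    """Count how often each field differs between the model output and the editor's version."""
    counts: dict[str, int] = {}
    with path.open(encoding="utf-8") as handle:
        for line in handle:
            entry = json.loads(line)
            for field, value in entry["final_output"].items():
                if entry["model_output"].get(field) != value:
                    counts[field] = counts.get(field, 0) + 1
    return counts
```

Running `most_corrected_fields` over a month of entries gives a direct answer to the question of which fields fail most often.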

Review those rules once a month. Not for reporting, but to remove recurring failures and avoid paying for them again.

Frequently asked questions

Which products is a small local model best suited for?

It works best for mass, easy-to-read categories where the attributes are already present in the product name, description, or supplier table. Apparel, shoes, household goods, and products with simple characteristics usually fit well.

In these tasks, the model does not guess; it splits the text into fields: color, size, material, weight, volume, and product type.

When is it better not to trust a local model with descriptions and specifications?

Do not ask it to invent what is not in the source data. If a card does not include composition, compatibility, exact material, or usage conditions, the model will often fill in a plausible but wrong option.

Anything that changes the promise to the buyer is better taken from the source or checked with a separate rule.

Which fields should you automate first?

For a start, pick fields that are easy to verify against the source in a minute. Usually that means color, material, season, size, product type, and short search tags.

Leave long descriptions and complex compatibility for later, once you have seen the typical mistakes on real product cards.

What must you definitely pass to the model as input?

At minimum: product name, full description, category, and brand. If you have image captions or alt text, pass those too: they often contain color, shape, sleeve length, and other useful details.

The cleaner the input, the more consistent the result. Before you launch, remove HTML, duplicates, junk words, and merged fields from the export.

Do you need an allowed-values dictionary for attributes?

Yes, a dictionary cuts the noise dramatically. Without it, the model will start producing close but different values like “black”, “Black”, and “blk”, and your filters will fall apart.

If you predefine allowed colors, materials, and size formats, the catalog stays cleaner and you need less manual editing.

How do you quickly tell that the model has started making things up?

A warning sign is when the response contains facts that were not in the card. Another one is when a rare product suddenly gets overly generic tags, or the description sounds like it could fit any other card.

Also check for truncation. If the compatibility block or conditions become shorter than the source text, the model may have dropped an important exception.

How can you launch such a flow safely on a catalog?

Start with one category and a test sample of about 100–200 products with different data quality. Run them through the same prompt, compare the answers manually, and only then open mass processing.

Do not try to fill everything in one call. Extract attributes separately, add tags separately, and build the short description separately.

When should a product card be sent straight to manual review?

Send the card to a person if the model returns a value outside the dictionary, leaves an important field empty, or gives an answer that conflicts with the source text. The same applies to products with rare abbreviations and conflicts between different sources.

This filter is cheaper than cleaning the whole catalog later because of wrong attributes and broken filters.

What is more cost-effective for a catalog: one large model or a mixed setup?

If the flow is large, a mixed setup is usually more cost-effective. A small local model handles the mass of simple tasks, while a larger external model gets only complex and borderline cards.

That way you do not pay for an expensive model where careful extraction is enough. If you need one entry point for local and external routes, it helps to keep them behind a single OpenAI-compatible API.

Which metrics should you look at after the pilot?

Do not look only at accuracy. It is useful to track the share of empty fields, the number of out-of-vocabulary answers, the volume of cards sent for manual review, the team’s time per batch, and the cost of processing one thousand SKUs.

If the model preserves the format, covers most simple fields, and does not create many borderline values, the pilot can already be considered successful.