Annotator disagreement: how to align labeling guidelines and arbitration

Annotator disagreement slows model training and pollutes the dataset. Learn how to write clear labeling guidelines, run arbitration, and update evaluation rules on time.

Why annotators disagree

Even a good annotator does not read text like a machine. They rely on experience, habits, and how they understood the task. That is why people often interpret the same example differently.

Imagine a support message: "The money was charged, but the order never appeared." One person will assign the label "payment," another "order issue," and a third "urgent incident." All three decisions can be reasonable if the rules do not say which label is primary in that situation.

Most of the time, the problem is not the people but the rubric. If the categories overlap, are described too broadly, or sound similar, annotators start filling in the meaning themselves. That is how gray areas appear: one person treats the reply as a rejection, another as a neutral tone, even though both are looking at the same text.

Exceptions break the process the most. While the examples are simple, the team stays aligned. Then mixed cases arrive: sarcasm without rude words, a partly correct answer, a complaint that ends with thanks. Even experienced people get confused if the rule is just a general principle plus a long list of "except when..."

Usually, disagreements grow from four causes: there is too little context in the text, labels overlap in meaning, the rule does not set priority between two valid options, and rare cases are described too briefly.

Disputed answers do harm beyond the individual item. They quickly pollute the whole dataset because they set a noisy precedent for later decisions. If similar examples are labeled differently today, tomorrow the model or analyst will inherit that confusion. Then the team spends its time not on improving labels, but on figuring out why the system behaves unevenly.

There is a simple sign that the rules are the real problem: different people calmly argue, give clear reasons, but still choose different labels. That is not one employee’s mistake. It is a signal that the category definitions are not yet strong enough for real examples.

Where disputes usually start

Disputes rarely begin with inattention. Usually the text can honestly be read in two ways, and both seem reasonable.

The first common source is borderline cases between two labels. A complaint can easily look like a request for help, while a neutral question can hide dissatisfaction. If the rule does not explain where the boundary is, people rely on their own experience instead of a shared standard.

The second risk area is long answers where several meanings are mixed together. A user may first say thanks, then complain, and end by asking for a refund. One annotator chooses the label based on the main idea, another on the last sentence, and a third on the most urgent action. Everyone is being logical, but the results differ.

Text with an implied meaning often causes disputes too. Sarcasm, hints, dry wording, and weak context break simple rules. The phrase "Yeah, the service is just great" may look like praise on paper only. In a live conversation, it is often frustration.

There is also a quieter reason: rare examples that are not covered in the rules. When the dataset is small, these cases are almost invisible. Later, a few unusual messages appear, and everyone handles them differently.

A dispute usually starts in one of these places: two labels sound too similar, one answer contains two or three tasks, tone changes the meaning more than the words themselves, the text is impossible to understand without previous messages, or the example appears for the first time and is not described in the rubric.

If the labeling guidelines do not say what to do in these places, people start guessing. That hurts both consistency and the team’s later evaluation of data labeling quality. The most common disputes are rarely obvious errors; they sit in the gray zone between "looks similar" and "clearly fits."

How to build a clear rubric

A clear rubric is not written for the sake of a report. Its job is to make two people who read the same example arrive at the same label more often. If that is not happening, annotator disagreement usually comes not from inattention, but from vague rules.

Start with short definitions. Each label should have one simple idea: what belongs to it and what does not. It is better to write "Complaint: the user is clearly unhappy and asks for a fix" than to give a broad description that runs half a page.

A good rubric usually includes five things: a simple definition of each label in one or two sentences, several examples that clearly fit, one or two counterexamples for similar cases, a rule for when context is missing, and a priority order if two similar labels apply.
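One way to keep those parts together is to store each label as a small structured record and check it for gaps. The sketch below is only an illustration: the `LabelRule` fields, the `MISSING_CONTEXT_ACTION` constant, and the `missing_parts` helper are assumed names, not a standard schema.

```python
from dataclasses import dataclass, field


@dataclass
class LabelRule:
    """One rubric entry. All field names are illustrative assumptions."""
    name: str
    definition: str                                            # one or two sentences
    examples: list[str] = field(default_factory=list)          # clearly fit the label
    counterexamples: list[str] = field(default_factory=list)   # look similar, do not fit
    priority: int = 0                                          # lower number wins when two labels apply


# Rubric-wide rule for missing context (hypothetical wording).
MISSING_CONTEXT_ACTION = "assign 'not enough context'"


def missing_parts(rule: LabelRule) -> list[str]:
    """Return the rubric parts this entry still lacks."""
    gaps = []
    if not rule.definition.strip():
        gaps.append("definition")
    if not rule.examples:
        gaps.append("examples")
    if not rule.counterexamples:
        gaps.append("counterexamples")
    return gaps


complaint = LabelRule(
    name="complaint",
    definition="The user is clearly unhappy and asks for a fix.",
    examples=["Where is my order, I've been waiting for a week"],
)
print(missing_parts(complaint))  # ['counterexamples']
```

A check like this can run whenever a label is added, so an entry without counterexamples is caught before annotators see it.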

Examples and counterexamples remove a lot of disputes. If you have labels like "information request" and "complaint," show a borderline case. The phrase "Where is my order?" may be a normal question, while "Where is my order, I’ve been waiting for a week" is already closer to a complaint. It is easier for people to compare against an example than to guess from an abstract description.

Write down separately what to do when context is missing. An annotator should not be asked to guess the author’s motive, tone, or intent. Give one clear action: assign a "not enough context" label, send the example to arbitration, or open a related document if the rules allow it. The fewer guesses, the higher the data labeling quality.

Similar labels need an explicit order of choice. If a text fits two categories at once, the rubric should answer the question: "Which label do we choose first?" It is useful to write this as a short rule. For example: if the customer explicitly asks for a refund, choose "refund" even if the message also contains a complaint about the service.
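An explicit order of choice can be written down as an ordered list that is applied mechanically. This is a minimal sketch under assumptions: the `LABEL_PRIORITY` order and the `choose_primary` function are hypothetical and only mirror the refund example above.

```python
# Hypothetical priority order: earlier labels win when several apply.
LABEL_PRIORITY = ["refund", "complaint", "information request"]


def choose_primary(candidates: set[str]) -> str:
    """Pick the single label to record when a text fits several."""
    for label in LABEL_PRIORITY:
        if label in candidates:
            return label
    raise ValueError(f"No known label among: {sorted(candidates)}")


# A message that asks for a refund and also complains about the service.
print(choose_primary({"complaint", "refund"}))  # refund
```

Keeping the order in one list means a priority change is a one-line edit that every annotator and script picks up together.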

Common exceptions are best moved into a separate block. Otherwise they get lost among the general rules. This usually includes sarcasm, mixed requests, template auto-replies, short messages like "doesn't work," and cases where there is too little context.

If the rubric grows faster than people can read it, that is a bad sign. Two pages of clear rules are better than a long document with questionable wording. An annotator should open the rubric and understand which label to use within a minute.

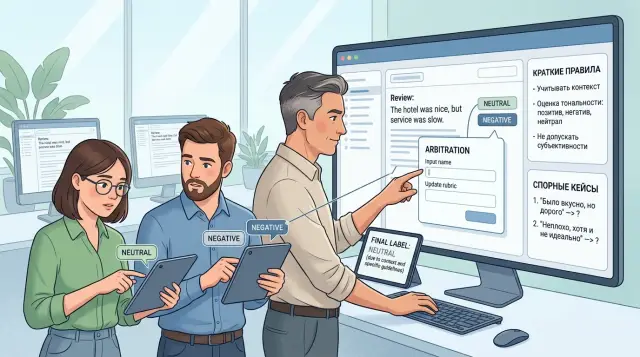

How to run arbitration step by step

Arbitration is needed so the team makes the same decisions in similar cases. When annotator disagreement piles up in chats and private messages, the process breaks quickly: people argue from memory instead of from the rules.

It is better to keep all disputed examples in one queue. This can be a separate table or board with fields for the example itself, the two annotators’ labels, the rubric item, and the final decision. Then you can see not just one case, but a repeating type of dispute.

First, gather all conflicts in one place and remove duplicates. If the same case showed up ten times, the rule is probably too vague.
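The queue and the duplicate check can be sketched in a few lines. The `Dispute` fields follow the ones listed above; the `normalize` rule (lowercase, collapsed whitespace) is a deliberately naive assumption, and real data may need fuzzier matching.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Dispute:
    text: str
    label_a: str
    label_b: str
    rubric_item: str                 # rule the dispute is about
    decision: Optional[str] = None   # filled in by the arbiter
    reason: Optional[str] = None     # short note on why


def normalize(text: str) -> str:
    """Naive duplicate key: lowercase and collapse whitespace."""
    return " ".join(text.lower().split())


def deduplicate(queue: list[Dispute]) -> tuple[list[Dispute], Counter]:
    """Keep the first dispute per text and count how often each text recurred."""
    counts = Counter(normalize(d.text) for d in queue)
    seen = set()
    unique = []
    for d in queue:
        key = normalize(d.text)
        if key not in seen:
            seen.add(key)
            unique.append(d)
    return unique, counts


queue = [
    Dispute("Charge the money and close the account.", "billing question", "cancellation", "main intent"),
    Dispute("charge the money  and close the account.", "cancellation", "billing question", "main intent"),
    Dispute("Yeah, the service is just great", "praise", "complaint", "sarcasm"),
]
unique, counts = deduplicate(queue)
print(len(unique))            # 2
print(counts.most_common(1))  # [('charge the money and close the account.', 2)]
```

High counts in `counts` point at the rubric items that deserve a rewrite first.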

Then ask each annotator to explain their choice in one or two sentences. A short written explanation helps because it pushes the person to point to the text of the rules rather than to a feeling.

After that, compare the example with the rubric. Do not ask, "How do we usually label this?" Look at which rule fits best and where the boundary between labels lies.

Record the result immediately. Write down not only the label, but also the reason for the decision. In a few days the team will forget the details, and a short note will prevent the same dispute in the next round.

If a dispute repeats, update the rules right away. Sometimes one sentence, a couple of clarifications, or a new example is enough to make the dispute disappear.

A small example. One annotator marks a support reply as "helpful" because it contains instructions. Another marks it as "incomplete" because the answer does not fully solve the customer’s problem. The arbiter does not choose the one who sounds more confident. They open the labeling guidelines and check what should count as a solution: the presence of a step, or the customer’s question being fully resolved.

After the decision, do not leave the conclusion "in the senior’s head." Write the result next to the example and add a short comment: why this label was chosen, which rule it was based on, and what to do in a similar case. Then annotation arbitration stops being a one-off argument and becomes the team’s working memory.

If a dispute drags on for more than a few minutes per case, the problem is often not the people but the wording of the rules. Good arbitration does not stretch the discussion. It helps the team close the next similar case calmly and consistently.

When it is time to review the rules

If the same dispute comes back every week, the problem is no longer the people. It means the rule leaves too much room for guessing. Annotators keep running into the same unclear case and spend time not on work, but on guessing what the instruction writer meant.

A bad sign is when arbiters make different decisions on similar examples. That means even the people who are supposed to settle disputes do not have a shared reference point. In that situation, you cannot just "remind the team of the rules." The rules are no longer working as one standard.

Another common sign is that new data no longer fits the old labels. This happens when the product changes, users write differently, or new cases appear in the flow that were rarely seen before. If annotators start forcing new examples into old categories, quality drops quickly.

Usually, a rules review is needed if you see at least two signs: the team keeps asking the same question in chat or on a call, agreement between annotators barely improves after training, arbitration accumulates the same disputed cases, one label becomes a dumping ground for everything unclear, or people keep personal notes just to work with the current instruction.

One moment is especially telling: you ran training, reviewed mistakes, gave examples, and a week later everything came back. That rarely means the team was not listening. More often the reason is simpler: the rubric has no clear boundary between labels, and training cannot fix the structure itself.

A review does not require rewriting the whole document from scratch. Often three things are enough: add disputed examples, clarify the rule for choosing between two similar labels, and state explicitly what to do in a borderline case. If arbiters then start making the same decisions and repeated questions decrease, you are moving in the right direction.

The worst thing is to delay edits for too long. Then the team gets used to different personal versions of the rules, and it becomes much harder to bring back a unified approach.

A simple support example

A team labels customer requests with three labels: "complaint," "billing question," and "cancellation." There are almost no disputes on simple messages. "Where is my March invoice?" goes to billing. "I want to delete my account" goes to cancellation.

Problems begin with mixed phrases. For example: "Charge the money and close the account." The old instruction often breaks exactly here, because one message contains both a payment action and a request to stop the service.

One annotator chooses "billing question." They see words about charging money and decide that is the main topic. Another chooses "cancellation," because the customer directly asks to close the account. Both readings are reasonable. The annotators disagree not because of inattention, but because the rule does not say what counts as the main intent.

In such a case, the arbiter does not guess the right answer by feeling. They look at the goal of the request. What does the customer want to get in the end? If the person is asking to close the account and charging the money is only part of that step, the main label is "cancellation." If the customer is disputing the charge and does not ask to stop the service, then it is a "billing question" or a "complaint," depending on the text.

After this kind of review, the team should not disagree on the same messages again. It is best to turn the arbiter’s decision into a short rule right away. For example, if a message contains several actions, choose the label based on the customer’s final goal. If closing the account is mentioned explicitly, "cancellation" has priority over billing. If the customer is disputing a payment without asking to close the account, use the billing or complaint label.

That is how annotator disagreement starts working in your favor instead of slowing the process down. One disputed case creates a rule for dozens of similar requests. After a couple of rounds like that, the team spends less time arguing and keeps data labeling quality more even.

How to tell the rules are clearer

After edits, do not trust the feeling that "now everything is clear." Watch how the work changes on a new sample. If annotators are matching more often even before arbitration, the rules really are easier to read.

It is useful to measure three things: the share of exact matches, the share of cases sent to arbitration, and the time per item. For example, exact matches might rise from 62% to 79% while disputed items become almost half as frequent. That is a good sign: people are not guessing the logic, they understand it the same way.
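All three numbers can be computed from a plain list of labeled items. The sketch below assumes two annotators per item; the `Item` fields and the `round_metrics` name are hypothetical, and the sample data is invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Item:
    label_a: str
    label_b: str
    sent_to_arbitration: bool
    seconds: float  # labeling time for the item


def round_metrics(items: list[Item]) -> dict[str, float]:
    """Share of exact matches, share sent to arbitration, average time per item."""
    n = len(items)
    if n == 0:
        raise ValueError("empty sample")
    return {
        "exact_match_share": sum(i.label_a == i.label_b for i in items) / n,
        "arbitration_share": sum(i.sent_to_arbitration for i in items) / n,
        "avg_seconds_per_item": sum(i.seconds for i in items) / n,
    }


sample = [
    Item("complaint", "complaint", False, 20),
    Item("complaint", "refund request", True, 50),
    Item("billing question", "billing question", False, 10),
    Item("cancellation", "cancellation", False, 20),
]
print(round_metrics(sample))
# {'exact_match_share': 0.75, 'arbitration_share': 0.25, 'avg_seconds_per_item': 25.0}
```

Running the same function on the sample before and after a rubric edit gives a like-for-like comparison.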

But averages can be misleading. Break down which label pairs are still disputed most often. If "complaint" and "refund request" are still mixed up in a third of cases, the rule is still weak there, even if the overall percentage went up. A breakdown by label pair quickly shows weak spots: where examples are missing, where wording is too broad, and where one label needs a simple priority over another.
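A breakdown by label pair needs little more than a counter. This is a sketch with an assumed input format, a list of `(label_a, label_b)` tuples; `disputed_pairs` is a hypothetical helper name.

```python
from collections import Counter


def disputed_pairs(pairs: list[tuple[str, str]]) -> list[tuple[tuple[str, ...], int]]:
    """Count disagreements per unordered label pair, most frequent first."""
    counts = Counter(tuple(sorted(p)) for p in pairs if p[0] != p[1])
    return counts.most_common()


pairs = [
    ("complaint", "refund request"),
    ("refund request", "complaint"),   # same pair, annotators swapped
    ("complaint", "complaint"),        # agreement, ignored
    ("billing question", "complaint"),
]
print(disputed_pairs(pairs))
# [(('complaint', 'refund request'), 2), (('billing question', 'complaint'), 1)]
```

Sorting each pair before counting treats "complaint vs refund request" and "refund request vs complaint" as the same dispute.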

Check the changes on a fresh sample. Do not reuse the same examples the team already argued about. Otherwise the annotators will simply remember the arbiter’s decision. It is better to collect a new set of 100-200 items with both common and borderline cases.

Also look separately at frequent and rare cases. Common categories almost always look better because people get used to them quickly. Rare labels show the real clarity of the rules. If common labels reach 90% agreement and rare ones only 45%, the process is still not stable.
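Agreement can be split by label to see whether rare categories lag behind. The definition used here is one possible choice and an assumption of this sketch: for each label, take the items where at least one annotator used it and compute the share where both did.

```python
from collections import defaultdict


def per_label_agreement(pairs: list[tuple[str, str]]) -> dict[str, float]:
    """For each label: share of items where both annotators chose it,
    among items where at least one of them did."""
    used = defaultdict(int)
    agreed = defaultdict(int)
    for a, b in pairs:
        for label in {a, b}:
            used[label] += 1
        if a == b:
            agreed[a] += 1
    return {label: agreed[label] / used[label] for label in used}


# Invented sample: one common label, one rare label.
pairs = (
    [("billing question", "billing question")] * 9
    + [("billing question", "fraud signal")]
    + [("fraud signal", "fraud signal")]
)
print(per_label_agreement(pairs))
# {'billing question': 0.9, 'fraud signal': 0.5}
```

With only a handful of items per rare label the percentages are unstable, so it helps to read them next to the raw counts.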

A short check after each round of edits usually comes down to four questions: did agreement before arbitration improve, did the number of repeated disputed label pairs go down, did the result hold on a fresh sample, and are rare cases clearer too, not just the common ones?

If the same examples keep coming back for three rounds in a row, the problem is almost always the rules, not the people. Good labeling guidelines remove the dispute before it reaches the arbiter.

Mistakes that quickly break the process

The most common break starts quietly: the team adds a new label but does not add examples for it. The name seems clear, but in practice everyone reads it differently. A few days later, one annotator uses the label only in obvious cases, another applies it to every similar request, and data labeling quality drops without an obvious reason.

It gets worse when rules are changed verbally. The team agreed in a meeting to treat some requests as a separate category, but the document was never updated. New people learn from the file, experienced colleagues work from memory, and annotator disagreement grows on its own. Then the team argues about discipline, even though the real problem was version control.

Annotation arbitration quickly loses meaning if the arbiter resolves disputes from memory. The phrase "we used to do it this way" does not help if the current rubric says something else or says nothing at all. Annotators should see that the decision is based on the rule text, an example, and the edit date. Otherwise arbitration turns into guesswork.

An old version of the rubric also breaks the process faster than it seems. The document gets updated, but the test for new annotators, the cheat sheet in chat, and the training examples remain the same. A person works carefully, but according to the old logic. Cases like that can cost not one day, but a whole week.

Simple limits help. Add a new label only together with examples and counterexamples. Record every edit with a date and version number. The arbiter cites the rule they used. The team updates training on the same day the rubric changes.
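These limits are easier to enforce when the changelog is data rather than memory. The sketch below is illustrative only: `RubricEdit`, `current_version`, and `stale_materials` are hypothetical names, and the dates and version numbers are made up.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RubricEdit:
    version: int
    edited_on: date
    summary: str
    rule: str  # rubric item the edit touches, so an arbiter can cite it


def current_version(changelog: list[RubricEdit]) -> int:
    """Highest version number recorded in the changelog."""
    return max(edit.version for edit in changelog)


def stale_materials(materials: dict[str, int], changelog: list[RubricEdit]) -> list[str]:
    """Names of training materials built on an older rubric version."""
    latest = current_version(changelog)
    return sorted(name for name, version in materials.items() if version < latest)


changelog = [
    RubricEdit(1, date(2024, 1, 10), "Initial labels", "all"),
    RubricEdit(2, date(2024, 2, 5), "Cancellation has priority over billing", "mixed requests"),
]
# Which rubric version each training material was last synced with.
materials = {"onboarding test": 1, "chat cheat sheet": 1, "training examples": 2}
print(stale_materials(materials, changelog))  # ['chat cheat sheet', 'onboarding test']
```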

There is another trap: people mix up a dispute about the data with a dispute about business logic. These are different questions. If a customer writes "the money was charged twice," the annotator has to decide what is in the text: a complaint, a payment error, a fraud signal, or none of these. They should not invent a label just because the business finds it convenient to send such a case to a separate queue.

For teams launching LLM workflows in support, banking, or retail, this is especially noticeable. The model learns from what you give it. If the rule cannot be understood from the text in a minute, the labeling guidelines are still rough, and disputes will increase, not decrease.

A quick check before the next round

Before the next labeling round, it is better to spend 15 minutes on a review than a week sorting out disputes later. Annotator disagreement often starts not with people, but with small holes in the rules: one label is described vaguely, a disputed case was not listed separately, the arbiter does not know when to decide alone and when to call the task owner.

The minimum check is simple. Each label should have a short description without vague words. Repeated disputed cases need a separate note in the rubric. The arbiter should understand the boundary of their decisions and when to escalate. The whole team should work from one current version of the rules. And you need to decide in advance how disputed answers are counted.

A short label description should help make a decision within seconds. If the wording allows two readings, the annotator will start filling in the meaning themselves. It is better to write not "undesirable answer," but exactly what belongs to the label: an insult, a factual error, avoiding the request, leaking personal data.

A separate rule for disputed cases saves a lot of time. If the team evaluates model answers, disputes usually repeat in the same places: sarcasm, partially correct answers, refusal with a helpful hint, or a mix of two labels. These examples should not stay in memory or be discussed again in chat. It is better to move them into the rubric as short precedents.

You also need a simple agreement with the arbiter. They can resolve routine conflicts on their own, but they should not quietly rewrite the meaning of the labels. If a dispute changes the logic of evaluation itself, the arbiter calls the task owner. Otherwise, in a couple of days the team already has two rubrics: one in the document and another in the arbiter’s head.

The rule version should be visible to everyone. One file, update date, a short list of recent edits. Old screenshots, messenger notes, and verbal agreements almost always damage data labeling quality.

The last point is often forgotten: how disputed answers are counted. Decide this in advance. Usually one of two options works: either send them to a separate queue and do not mix them with clean examples, or send all disagreements to arbitration and count the result only after the decision. If neither option is fixed in advance, the metrics will swing even with the same rubric.
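The two counting options produce different datasets from the same input, which is why the choice has to be fixed up front. This sketch assumes items are dictionaries with hypothetical keys `text`, `a`, `b`, and an optional `decision` from the arbiter.

```python
def clean_only(items):
    """Option 1: keep agreed items; disputed ones go to a separate queue."""
    dataset = [(i["text"], i["a"]) for i in items if i["a"] == i["b"]]
    queue = [i for i in items if i["a"] != i["b"]]
    return dataset, queue


def after_arbitration(items):
    """Option 2: every disagreement is arbitrated; count only decided items."""
    dataset, pending = [], []
    for i in items:
        if i["a"] == i["b"]:
            dataset.append((i["text"], i["a"]))
        elif i.get("decision") is not None:
            dataset.append((i["text"], i["decision"]))
        else:
            pending.append(i)  # still waiting for the arbiter
    return dataset, pending


items = [
    {"text": "Where is my March invoice?", "a": "billing question", "b": "billing question"},
    {"text": "Charge the money and close the account.", "a": "billing question",
     "b": "cancellation", "decision": "cancellation"},
    {"text": "The money was charged twice", "a": "complaint", "b": "billing question"},
]
print(len(clean_only(items)[0]))         # 1
print(len(after_arbitration(items)[0]))  # 2
```

The same three items yield one counted example under the first option and two under the second, so switching options mid-project shifts the metrics without any change in the rubric.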

What to do after the first rule update

Right after the first rule update, do not expect a miracle. Annotator disagreement usually drops not on the same day, but after a short check on new data. It is better to take 30-50 fresh examples that the team has not seen and run them through the updated rubric without hints from the team lead.

The point of such a test is simple: you are not checking how well people memorized the new wording, but how they apply it in real life. If disputes still keep circling around the same cases after the update, the rule is still too general.

It is useful to look not only at the overall match rate, but also at the disputed cases before and after the update. For example, two annotators may have disagreed on complaints with a rude tone: one used "toxicity," the other "emotional complaint without insults." If, after clarifying the examples and category boundaries, these cases start being labeled the same way, the edit worked.

Usually four steps are enough: take a new small batch without old examples, collect the cases where opinions split again, compare them with the previous disputed set, and note which wordings are still being read in two ways.
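The comparison with the previous disputed set reduces to set arithmetic once each dispute is recorded as an unordered label pair. The `compare_rounds` helper and the sample pairs below are assumptions made for illustration.

```python
def compare_rounds(before: set[tuple[str, str]], after: set[tuple[str, str]]) -> dict[str, set]:
    """Split disputed label pairs into resolved, persisting, and new ones."""
    return {
        "resolved": before - after,    # disputed earlier, not anymore
        "persisting": before & after,  # wording is still read in two ways
        "new": after - before,         # appeared only after the update
    }


before = {
    ("emotional complaint without insults", "toxicity"),
    ("complaint", "refund request"),
}
after = {("complaint", "refund request")}

result = compare_rounds(before, after)
print(result["resolved"])    # {('emotional complaint without insults', 'toxicity')}
print(result["persisting"])  # {('complaint', 'refund request')}
```

Pairs under `persisting` are the wordings to rewrite before the next review date.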

After that, set the next date for the rules review. Not "when questions pile up," but a specific day in a week or two. Otherwise small failures build up, the team gets used to reading the rules in its own way, and you end up fixing a much bigger imbalance later.

If you are building an LLM service, after the first rule update you should check not only the labeling, but also the control loop itself. Keep logs of disputed answers, mask PII before sending data to the model, and run the same set of examples through different models. On the same prompt they often make different mistakes, and that quickly shows where the rule is weak and where the model itself is the problem.
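A rough sketch of that loop is shown here: mask obvious PII, send the masked text to several models, and log only the cases where they disagree. Everything in it is an assumption. The two regular expressions are naive and will miss many PII formats, and the "models" are stand-in functions, not calls to any real API.

```python
import re
from typing import Callable

# Naive patterns for illustration only; production masking needs far more care.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
CARD = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def mask_pii(text: str) -> str:
    """Replace email addresses and card-like digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return CARD.sub("[CARD]", text)


def compare_models(cases: list[str], models: dict[str, Callable[[str], str]]) -> dict[str, dict[str, str]]:
    """Return the answers per model, only for cases where the models differ."""
    disagreements = {}
    for case in cases:
        masked = mask_pii(case)  # the model never sees the raw text
        answers = {name: model(masked) for name, model in models.items()}
        if len(set(answers.values())) > 1:
            disagreements[masked] = answers
    return disagreements


# Stand-in classifiers (hypothetical); replace with real model calls.
models = {
    "model_a": lambda t: "complaint" if "charged twice" in t else "billing question",
    "model_b": lambda t: "billing question",
}
cases = [
    "The money was charged twice, card 4111 1111 1111 1111, write to anna@example.com",
    "Where is my March invoice?",
]
log = compare_models(cases, models)
print(list(log))
# ['The money was charged twice, card [CARD], write to [EMAIL]']
```

If several models split on the same case that also split the annotators, the rule is the likelier culprit; if only one model gets it wrong, the model is.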

For checks like this, many teams find it convenient to use one shared gateway to models. For example, AI Router on airouter.kz gives one OpenAI-compatible endpoint for working with different models, plus audit logs and PII masking. That is handy after updating the rules: you can more quickly test the same set of cases across several models and see whether the rubric is failing or the model itself.

Frequently asked questions

Why do two good annotators assign different labels to the same text?

Most often the problem is not the people but the rules. If labels overlap, there is no priority between similar options, or rare cases are described too briefly, each person relies on their own experience and reads the text differently.

Which cases cause the most disputes?

Disputes usually start with borderline cases, long messages with several meanings, sarcasm, and texts without enough context. Another common reason is new rare examples that are not covered in the rubric.

What must a good rubric include?

Give each label a short definition, several exact examples, and one or two similar cases that do not belong to it. Also write down what to do when context is missing and which label to choose first if two fit.

What should you do if a message lacks context?

Do not ask the annotator to guess the author’s intent. It is better to define one clear action right away: assign a separate label for missing context, send the case to arbitration, or open a related document if that is allowed.

What should you do if a text fits two labels at once?

Set the order of choice in advance. For example, use the label based on the customer’s final goal, not on a random phrase inside the message. That way, mixed cases are handled the same way every time, not by gut feeling.

How do you run arbitration so it does not turn into an endless argument?

Collect all conflicts in one queue and ask annotators to briefly explain their choice using the rules. Then the arbiter checks the case against the rubric, records the decision next to the example, and writes down the reason so the same dispute does not return a week later.

When should you change the rules instead of just explaining them again to the team?

It is time to update the document if the same dispute keeps returning, arbiters disagree on similar examples, or one label turns into a catch-all for anything unclear. At that point, repeating the training rarely helps without changing the rules themselves.

How can you tell that the rubric became clearer after edits?

Check a new sample that the team has not seen before. If agreement before arbitration improves, the number of disputed items drops, and rare cases become easier to read, the change worked.

Which mistakes most often damage labeling quality?

The process breaks when people make verbal agreements, change rules without writing them down, add a new label without examples, or leave old cheat sheets untouched. Then part of the team works from the file and part works from memory, and disagreements quickly grow.

Will another model or tool help if the team still disagrees?

Changing the model alone will not solve the problem. First remove ambiguity from the rubric, then compare models on the same set of cases. One shared gateway to different models is useful for this kind of check because the team can run the same examples through several options and more quickly see whether the rule or the model is failing.