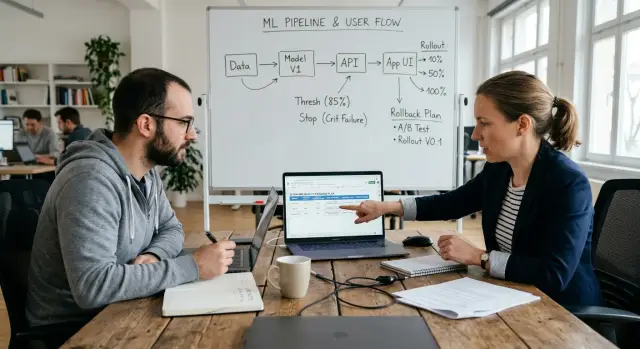

AI Feature Quality Criteria: the Product and ML agreement

AI feature quality criteria help teams agree on the usefulness threshold, stop scenarios, and rollback plan in advance so they do not argue about results after release.

Where the conflict really starts

Conflict around an AI feature usually starts not after release, but much earlier. It often happens right after a successful demo, when the team feels the main risk is already behind them. In the demo, the model answers clean examples: the question is phrased clearly, the context is complete, and a person is nearby who can fix the prompt in a minute. In real work, that almost never happens.

Product looks at value for the person and the business. If a feature saves an operator 20 minutes a day, reduces manual edits, or helps avoid missed requests, that is already a good result for the product. ML usually looks at accuracy, recall, and the share of correct answers on a test set. Both sides are right. They are just discussing different things.

Because of that, the dispute usually starts after the first demo. The ML team says the model scored 86% on validation, so the quality is fine. Product replies that users still do not trust the output and recheck every other answer. There is a metric, but no value.

Expectations break especially fast in real scenarios. Suppose the team is building AI suggestions for support. In the demo everything looks convincing: short questions, clear categories, polite tone. After launch, long complaints arrive, along with a mix of Russian and Kazakh, fragments of chats, and cases where a single mistake in a paragraph changes the meaning of the whole answer.

Usually the problem grows in three places. Product expects less manual work, while ML measures only text quality. ML tests on neat data, while users write chaotically. The demo shows the average case, even though rare but expensive mistakes create the pain.

An oral agreement almost always fails here. Everyone remembers a convenient version of the promise. Product hears that the feature will help the team from the very first version. ML hears that the team will test the hypothesis first and improve later. When metrics dip or complaints rise, it turns out there was no shared definition of success.

The written agreement is not for bureaucracy. It is needed so that before development starts, the team can name things plainly: what value is expected, what level of errors is acceptable, where the feature is dangerous, and at what point it must be rolled back without long arguments.

What counts as value for the user

AI feature quality criteria start not with a model metric, but with a human action. If the team cannot name one concrete task, the quality debate will begin before the first test.

The wording should be simple: who exactly does what faster, more accurately, or with fewer manual steps. Not "help the manager work with clients," but "suggest an answer to a common pricing question so the support agent can send it without searching the knowledge base."

Next comes a second layer: what the user does after the model answers. This is a good filter against vague ideas. If after the answer the person still opens three tabs, rewrites the text from scratch, and asks a colleague to check the facts, there is still no value.

A useful answer leads to a clear action. The user either sends the response to the customer, chooses one of the options, saves the result to the record, or passes the task on without manual rework.

After that, set the lower bound for value. Not the ideal result, but the minimum at which it already makes sense to launch the feature on live scenarios. For example, for a support reply draft, the minimum could be this: the operator takes the model text and gets it ready to send in under 30 seconds in 7 out of 10 cases. If editing takes two minutes every time, the feature may look smart, but it does not make work easier.
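The lower bound from the support example can be checked with a few lines of code. This is a minimal sketch, not a prescribed implementation: the `DraftReview` record and the `meets_value_floor` function are hypothetical names, and the defaults simply restate "under 30 seconds in 7 out of 10 cases."

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class DraftReview:
    """One operator session with a model draft (hypothetical record shape)."""
    seconds_to_ready: float  # time from seeing the draft to a send-ready reply


def meets_value_floor(
    reviews: Sequence[DraftReview],
    max_seconds: float = 30.0,  # assumption: "under 30 seconds"
    min_share: float = 0.7,     # assumption: "7 out of 10 cases"
) -> bool:
    """Return True when enough drafts became send-ready quickly enough."""
    if not reviews:
        return False  # no data is not evidence of value
    fast = sum(1 for r in reviews if r.seconds_to_ready < max_seconds)
    return fast / len(reviews) >= min_share
```

The point of the sketch is that the floor is a yes-or-no check on observed operator behavior, not on a model score.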

It helps to separate must-have from nice-to-have right away. Must-have includes what is tied to value and risk: the answer is on topic, facts do not conflict with internal rules, and the user can immediately take the next step. Everything else can be improved later. A more lively style, brand tone, long explanations, and rare extra details are pleasant, but they should not delay release.

This approach removes half the unnecessary arguments. Product does not look at the model's "smartness," but at whether it helps finish the task. ML understands what level of quality is already considered useful and what is not yet.

How to set the quality threshold

Quality disputes do not start because of the model, but because expectations are vague. Product says, "this is already useful." ML replies, "the test looks fine." Then the team spends weeks figuring out who is right. To avoid that, AI feature quality criteria are best reduced to a few numbers before development starts.

Usually 2 to 4 metrics are enough. More almost always gets in the way: people start arguing about which one matters more and delay the decision. A workable set looks like this: one metric for user value, one safeguard metric, one offline metric on a labeled set, and, if the feature is time-sensitive, one operational metric such as latency.

The easiest way is to keep them in one table. Then Product, ML, and the risk owner look at the same document instead of different dashboards.

| Metric | Where we measure | Launch threshold | Stop threshold |
|---|---|---|---|
| Share of user tasks successfully resolved | Online | 72% | below 65% |
| Share of correct answers on the test set | Offline | 85% | below 80% |
| Share of dangerous or forbidden answers | Online and manual review | no more than 1% | above 2% |
| P95 response latency | Online | up to 6 sec | above 8 sec |

This format removes a common mistake: offline evaluation lives separately from production numbers. With a single table, it becomes obvious that a high test score alone does not give permission to launch. The user does not care how pretty the metric was in a notebook if the feature makes mistakes or slows down in the real flow.

The launch threshold and the stop threshold should not be the same. If you set one number, the team will flip between "launch" and "stop" with every fluctuation. It is better to leave a gap. For example, launch the pilot at 72% successfully resolved tasks, and stop scaling only if it falls below 65%.
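The gap between the two thresholds can be expressed directly in code. The sketch below takes the numbers from the table above; the metric keys, the `Threshold` class, and the `decide` function are illustrative assumptions rather than a fixed design.

```python
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Threshold:
    name: str
    launch: float
    stop: float
    higher_is_better: bool = True


# Values mirror the table; the metric keys themselves are hypothetical.
THRESHOLDS = [
    Threshold("task_success_rate", launch=0.72, stop=0.65),
    Threshold("offline_accuracy", launch=0.85, stop=0.80),
    Threshold("dangerous_answer_rate", launch=0.01, stop=0.02, higher_is_better=False),
    Threshold("p95_latency_sec", launch=6.0, stop=8.0, higher_is_better=False),
]


def decide(metrics: Mapping[str, float], currently_live: bool) -> str:
    """Return 'stop', 'keep', 'launch', or 'hold' for one measurement."""

    def passes_launch(t: Threshold) -> bool:
        v = metrics[t.name]
        return v >= t.launch if t.higher_is_better else v <= t.launch

    def breaches_stop(t: Threshold) -> bool:
        v = metrics[t.name]
        return v < t.stop if t.higher_is_better else v > t.stop

    if any(breaches_stop(t) for t in THRESHOLDS):
        return "stop"
    if currently_live:
        return "keep"  # inside the gap: a live feature is not toggled
    return "launch" if all(passes_launch(t) for t in THRESHOLDS) else "hold"
```

With these numbers, a live feature at 68% resolved tasks stays on, while the same 68% is not enough to launch a feature that is not live yet.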

And one more important point. It should be written in advance who approves the numbers. Product approves the value metric and the launch threshold. ML is responsible for the measurement method and the quality of the test set. Risk, security, or the process owner approves the safeguard thresholds. One person, usually the feature owner or product lead, records the final version and signs it before development begins.

If this is not on paper, the quality threshold quickly turns into a memory dispute after the first bad pilot week.

Which stop scenarios must not be missed

Stop scenarios should be named before the first line of code. These are the cases where an error does not just spoil the answer, but breaks the process, creates financial risk, leads to data leakage, or violates rules. In such an agreement, they matter more than a beautiful average metric.

It is better to gather them not from general fears, but from real user actions. Where can the model do the most harm? Usually the list appears quickly: wrong financial advice, swapping facts in a customer record, missing an important symptom, sending personal data to an open channel, or incorrectly labeling AI content.

It helps to split stop scenarios by risk level. For critical risk, the answer must not be shown to the user under any circumstances: the system blocks output, saves a log, and calls a person. For high risk, the answer can be used only as a draft: the system does not send it automatically and asks for review. Operational risk is not dangerous by itself, but it breaks the process, so the system falls back to a simple backup path based on a template or rule. If the risk repeats in series, the system reduces traffic to the model or temporarily turns the feature off.

Each scenario should have one clear action. Not "we will figure it out later," but a specific rule: block it, ask for clarification, route it to an agent, revert to the old algorithm, disable auto-publishing. When there are several options, the team will start choosing based on mood.
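One way to keep "one clear action per scenario" honest is to store the mapping as data. The sketch below is an assumption about how a team might encode the three risk levels described above; the action names and the `StopScenario` type are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Risk(Enum):
    CRITICAL = "critical"
    HIGH = "high"
    OPERATIONAL = "operational"


# Exactly one action per risk level (action names are illustrative).
ACTION_BY_RISK = {
    Risk.CRITICAL: "block_output_log_and_call_human",
    Risk.HIGH: "draft_only_require_review",
    Risk.OPERATIONAL: "fallback_to_template_or_rule",
}


@dataclass(frozen=True)
class StopScenario:
    name: str
    risk: Risk


def action_for(scenario: StopScenario) -> str:
    """Look up the single agreed action for a stop scenario."""
    return ACTION_BY_RISK[scenario.risk]


def unmapped_risks() -> List[Risk]:
    """Risk levels that still have no agreed action; should be empty."""
    return [r for r in Risk if r not in ACTION_BY_RISK]
```

Because a dictionary holds one value per key, there is no room for "it depends": a second option has to replace the first one in review.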

A good check is very simple. Take this scenario: a bank bot confused the fee type and gave the customer the wrong promise. What does the system do? If the answer sounds like "it depends," the agreement is not ready yet. You need a clear route: do not send the answer, show a safe placeholder, create a ticket for the feature owner.

Separately, you should agree on what counts as a repeated error. For example, 3 critical cases in a day, 5 failures per 1,000 requests in one process, or 2 incidents for one large customer. After that threshold, the system does not wait for a weekly call. It automatically rolls back the release, switches traffic to the previous version, or turns off the problematic scenario.
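A repeated-error rule like this is easy to automate. The following sketch assumes two of the example limits from the paragraph above (3 critical cases in a day, 5 failures per 1,000 requests) and assumes that reaching the limit is what triggers rollback; `RepeatedErrorGuard` is a hypothetical name.

```python
from collections import deque
from datetime import datetime, timedelta


class RepeatedErrorGuard:
    """Tracks recent errors and signals when the agreed limit is reached."""

    def __init__(self, max_critical_per_day: int = 3, max_failures_per_1000: int = 5):
        self.max_critical_per_day = max_critical_per_day
        self.max_failures_per_1000 = max_failures_per_1000
        self.critical_times = deque()              # timestamps of critical cases
        self.recent_outcomes = deque(maxlen=1000)  # True means the request failed

    def record(self, when: datetime, failed: bool, critical: bool = False) -> bool:
        """Record one request; return True when rollback should trigger."""
        self.recent_outcomes.append(failed)
        if critical:
            self.critical_times.append(when)
        # Drop critical cases that are a day old or older.
        cutoff = when - timedelta(days=1)
        while self.critical_times and self.critical_times[0] <= cutoff:
            self.critical_times.popleft()
        too_many_critical = len(self.critical_times) >= self.max_critical_per_day
        too_many_failures = sum(self.recent_outcomes) >= self.max_failures_per_1000
        return too_many_critical or too_many_failures
```

The return value is meant to feed an automatic action such as switching traffic to the previous version, so nobody has to wait for the weekly call.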

In sensitive domains the list is almost always broader. For Kazakhstan, this often includes PII, audit logs, and mandatory AI content labels. If such checks exist both at the application level and at the LLM gateway level, dangerous scenarios are easier to catch immediately by event rather than by user complaints.

A normal stop-scenario list is usually short. If it has fewer than three items, you almost certainly missed something dangerous. If it has twenty, you mixed real bans with ordinary bugs.

How to agree on the rollback process

Rollback also needs to be described before the first line of code. If the AI feature starts producing harmful results, the team should not argue in chat about what to turn off and who does it. You need a clear scenario: what keeps working, what gets disabled, and how the product gets through that period.

First, define the manual fallback. If AI was writing a customer reply, decide who does it without the model: a support agent, a template reply, or a queue for manual review. If AI was tagging tickets, you can temporarily revert to rules or send disputed cases to an employee. The manual process is almost always slower and more expensive, but it keeps the service running.

It helps to split rollback into two levels. Partial rollback is needed when one risk area breaks rather than the whole feature. For example, you can keep the reply draft but forbid auto-send, or disable one model and route traffic to a more predictable one. If the team works through a single gateway, such a switch is often faster and requires no changes in client code. For companies in Kazakhstan, this is especially convenient when you need to switch providers quickly, keep audit logs, and avoid moving sensitive data out of the country. AI Router on airouter.kz can help here: it provides one OpenAI-compatible endpoint, routing between providers, PII masking, rate limits, and data storage within Kazakhstan.

A full rollback is needed when the error is already hurting money, data, or trust. The triggers are better written down in advance:

- the model outputs personal data or internal text that should not go to the user;

- the share of dangerous or false answers is above the agreed threshold for two measurements in a row;

- complaints, manual corrections, or action cancellations after the AI response have increased;

- the team cannot quickly explain the cause of the error and narrow the scope of the problem.

Each trigger should have an owner. Usually the on-call engineer or ML owner decides on a partial rollback, while Product or the service lead confirms a full rollback. The document also needs a response time: for example, 15 minutes for a partial rollback and 1 hour for a full one. If there are two responsible people, state in advance who acts first if the second is unavailable.
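The triggers, owners, and response times above fit into a small data structure. This is a sketch under assumptions: the `RollbackTrigger` fields, the role names, and the helper functions are hypothetical, and the "two measurements in a row" rule is taken from the trigger list.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Sequence


@dataclass(frozen=True)
class RollbackTrigger:
    name: str
    level: str                # "partial" or "full"
    owner: str                # acts first
    backup_owner: str         # acts if the owner is unavailable
    response_time: timedelta


def breached_in_a_row(measurements: Sequence[float], threshold: float, runs: int = 2) -> bool:
    """True when the last `runs` measurements are all above the threshold."""
    if len(measurements) < runs:
        return False
    return all(v > threshold for v in measurements[-runs:])


def responder(trigger: RollbackTrigger, owner_available: bool) -> str:
    """Who acts on the trigger right now."""
    return trigger.owner if owner_available else trigger.backup_owner


# Example entry; the roles and the 1-hour window are illustrative.
DANGEROUS_SHARE = RollbackTrigger(
    name="dangerous_answer_share_above_threshold",
    level="full",
    owner="product_lead",
    backup_owner="service_lead",
    response_time=timedelta(hours=1),
)
```

For example, `breached_in_a_row([0.01, 0.03, 0.025], threshold=0.02)` is true, while a single spike between two normal measurements is not.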

A real-world test quickly brings everyone back to earth. The team should be able to roll back the feature in hours, not days. To do that, it is worth rehearsing a failure once before launch: disable the model in a test environment, switch the flow to manual mode, and check logs, alerts, and the support message. If that rehearsal reveals manual config edits, token hunting, or coordination in five chat groups, the rollback plan is still immature.

Example with one feature

Let's take a simple AI feature: a draft reply for a customer in a banking chat. The user writes: "Yesterday my card was charged 18,500 tenge twice for a subscription. I never signed up for it. Refund the money and turn off auto-pay." On just one request like this, you can already see where the team’s expectations diverge.

One request, three answers

If the agreement between Product and ML is working, the team does not look at a "general impression," but at a concrete answer.

A good answer: "I understand you are seeing a double charge. I will check the transaction. Please confirm the charge date and the last 4 digits of the card. After the check, we will tell you whether auto-pay can be turned off and what the refund timeline is." This text does not invent facts, does not promise too much, and moves the conversation forward.

A questionable answer: "I will cancel the subscription now and pass the refund request on." For the user this sounds convenient, but the system has already promised an action it may not be able to perform. An operator can still edit the text, but sending this automatically is risky.

A bad answer: "The money has already been refunded, expect it to be credited within 3-5 days." This is a clear mistake. The answer invented the result of a check and created a false expectation. For a financial scenario, that is a stop signal.

The value boundary here is fairly clear. An answer is useful if the operator can send it with almost no edits and the customer understands the next step after reading it. If the operator spends more time fixing it than writing the reply from scratch, the AI value threshold has not been met. If the text promises an action that did not happen or states an unverified fact, that is no longer a "questionable" case, but a failure.

What the team does in each case

After this breakdown, AI feature quality criteria stop being abstract. Each class needs its own next step.

The team counts a good answer as a pass in the test set and allows it into the pilot. A questionable one is marked "edit needed": the prompt, rules, or model are changed, and until it is fixed the feature stays in draft-only mode for the operator. A bad one goes into the stop-scenario list, gets added to regression tests, and triggers the rollback plan for the AI feature to a template reply or manual handling.
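The three classes and their next steps can be sketched as a small triage function. This is an illustration, not the only reasonable policy: it assumes the worst verdict in a batch decides the release mode, and the names `Verdict`, `release_mode`, and `regression_cases` are hypothetical.

```python
from enum import Enum
from typing import Iterable, List, Tuple


class Verdict(Enum):
    GOOD = "good"
    QUESTIONABLE = "questionable"
    BAD = "bad"


def release_mode(verdicts: Iterable[Verdict]) -> str:
    """Pick the release mode from the worst verdict seen in a review batch."""
    seen = set(verdicts)
    if not seen:
        return "no_data"
    if Verdict.BAD in seen:
        return "rollback_to_template_or_manual"
    if Verdict.QUESTIONABLE in seen:
        return "draft_only"
    return "pilot"


def regression_cases(labeled: Iterable[Tuple[str, Verdict]]) -> List[str]:
    """Collect bad answers so they can be added to regression tests."""
    return [text for text, verdict in labeled if verdict is Verdict.BAD]
```

Applied to the three answers above, the invented refund alone is enough to return `rollback_to_template_or_manual`, and its text is the one that lands in the regression set.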

That moves the debate out of taste. Product sees where there is value for the customer, while ML understands which class of errors must never reach even an early launch.

The working order before development starts

Before the first backlog item, the team needs not a model choice, but a clear frame. Without it, Product expects noticeable value for the user, while ML improves a metric that later satisfies nobody.

A good working order fits on one page. If the agreement cannot be written briefly, the dispute will almost certainly show up after launch.

First, describe one specific scenario. Not "AI helps the operator," but "AI suggests a draft reply to an incoming email." Right next to it, write the cost of error: wasted time, wrong advice, financial risk, customer complaint, or a policy violation.

After that, choose metrics that are truly tied to value. Usually two or three are enough, picked from options such as share of accepted answers, average number of edits, time to result, and share of dangerous errors. Each metric needs a threshold and a sample size for review. The phrase "quality should be good" is not enough.

Then gather stop scenarios from real examples. Better 20 short cases with the expected bad outcome than a long description in general terms. For example, the feature must not invent a tariff, promise a refund without grounds, or expose personal data in the answer.

Then approve rollback. Decide who turns off the feature, where the flag sits, what the user sees after shutdown, and who makes the decision at night or on a weekend. If the team routes multiple models through one gateway, it is worth naming in advance the primary model, the fallback route, and the condition under which the system switches to manual mode.
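The order "primary model, fallback route, manual mode" can be written down as a simple chain. The sketch below is deliberately generic: the routes are plain callables, `is_safe` stands in for whatever safeguard check the team agrees on, and no real gateway API is assumed.

```python
from typing import Callable, Optional, Sequence


def draft_with_fallback(
    request: str,
    routes: Sequence[Callable[[str], str]],  # primary first, then fallbacks
    is_safe: Callable[[str], bool],          # hypothetical safeguard check
) -> Optional[str]:
    """Try each route in order; return None to signal manual mode."""
    for route in routes:
        try:
            draft = route(request)
        except Exception:
            continue  # route is down or failed: try the next one
        if is_safe(draft):
            return draft
    return None  # nothing safe was produced: hand over to a person
```

A `None` result is the written-down condition for manual mode, so the switch does not depend on someone noticing the failure in chat.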

At the end, sign a short working document. It should include the feature owner on the Product side, the responsible person on the ML side, the review date, thresholds, stop scenarios, and the rollback process. Such a sheet often saves weeks of arguments.
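If the team keeps this document next to the code, its completeness can be checked automatically. A minimal sketch, assuming the six items listed above map to fields of a hypothetical `FeatureAgreement` record:

```python
from dataclasses import dataclass, field, fields
from datetime import date
from typing import Dict, List, Optional


@dataclass
class FeatureAgreement:
    """One-page agreement between Product and ML (field names are assumptions)."""
    product_owner: str = ""
    ml_owner: str = ""
    review_date: Optional[date] = None
    thresholds: Dict[str, float] = field(default_factory=dict)
    stop_scenarios: List[str] = field(default_factory=list)
    rollback_process: str = ""


def missing_items(agreement: FeatureAgreement) -> List[str]:
    """Names of items that are still empty; the document is ready when this is []."""
    return [f.name for f in fields(agreement) if not getattr(agreement, f.name)]
```

An empty result does not prove the agreement is good, only that nothing was left blank before signing.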

A small example usually brings the conversation back to earth quickly. If AI writes a draft reply for support, the team might agree like this: the feature saves the operator at least 20 seconds per conversation, does not suggest actions outside company policy, and is disabled after two consecutive days with manual edits rising above the set threshold.

If even one of these items cannot be checked on an example, it is better not to start development. That means the agreement between Product and ML is not ready yet.

Mistakes that get expensive

The most expensive mistakes happen even before release: the team agrees too vaguely on what "normal quality" means. Then Product expects clear value for the user, while ML shows an average metric that looks decent only on a slide.

An average score almost always hides the tail. If the feature helps in 85% of simple cases, but in the remaining 15% it twists the meaning, leaks extra data, or recommends the wrong action, the user is not better off. For support, a bank, or a clinic, one bad class of requests damages trust more than a hundred good answers. That is why you need to look not only at the average, but also at slices: rare request types, long messages, mixed language, and requests without context.
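Looking at slices takes very little code. The sketch below assumes each evaluated request is stored as a `(slice_name, is_success)` pair; the slice names and the function names are illustrative.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def success_by_slice(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Success rate per slice from (slice_name, is_success) pairs."""
    totals = defaultdict(lambda: [0, 0])  # slice -> [successes, total]
    for slice_name, ok in results:
        totals[slice_name][0] += int(ok)
        totals[slice_name][1] += 1
    return {name: s / n for name, (s, n) in totals.items()}


def weak_slices(results: Iterable[Tuple[str, bool]], min_rate: float) -> List[str]:
    """Slices whose success rate is below the agreed minimum."""
    rates = success_by_slice(results)
    return sorted(name for name, rate in rates.items() if rate < min_rate)
```

With 9 of 10 short requests and 1 of 2 mixed-language requests handled correctly, the overall rate is about 83%, yet `weak_slices(results, 0.8)` still flags the mixed-language slice.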

The second expensive mistake appears when stop scenarios are written only after a successful demo. At that point, the team has already fallen in love with the idea and starts defending the feature instead of checking it soberly. Stop scenarios need to be fixed before the first integration: when the model confidently invents a fact, violates policy, fails to mask PII, or sends the user in circles. If this is not written down in advance, the argument will begin in production.

Another common failure is confusing a pilot with production. In a pilot, you can keep manual review, a narrow user segment, and slow error analysis. In production, those same assumptions break. If the team did not separate the conditions for the pilot from the conditions for a wide launch, the AI usefulness threshold will end up being fake.

The rollback plan for the AI feature also often exists only in words. Everyone agrees that "if anything happens, we will roll back," but nobody is assigned as the owner. When quality drops on a Friday evening, Product waits for ML to act, ML waits for the platform team to decide, and support is already replying to customers. Rollback needs an owner, a clear trigger, and a simple way to turn the feature off without long calls.

There is also a quiet mistake: quality is checked on easy examples. The team takes short requests that it invented itself. Real users write differently. They mix Russian and Kazakh, send fragments of sentences, contract numbers, names, anger, and sarcasm. For teams in Kazakhstan, that is an ordinary workday, not a rare edge case.

Before launch, it helps to answer four questions:

- which group of requests breaks trust even when the average result is good;

- which cases immediately stop the launch;

- how the pilot differs from production in metrics and risk;

- who personally rolls back the feature and who tells neighboring teams about it.

If these answers appear only after the first complaint, AI feature quality criteria have already cost too much.

Short checklist before launch

Before launch, you need not a pile of expectations but one short document that Product, ML, and business all read the same way. If the value statement is vague, the team almost always starts arguing after the first demo, when changing the approach is more expensive.

Before launch, check five things:

- The value is described in one sentence without double meaning. Not "make answers smarter," but, for example, "reduce an operator's request handling time from 6 minutes to 4 without increasing complaints."

- The launch threshold is stated in numbers. The team knows at what result the feature can go live and at what result it cannot.

- The stop-scenario list is collected in advance. It includes errors after which the feature cannot remain in production even if the average metric looks good.

- A specific person is responsible for rollback. They have the authority to stop the feature if it affects risk, money, or user experience.

- There is one document for all three sides. Product, ML, and business see the same version instead of retelling it from memory.

These are the working criteria for AI feature quality. They are not for reporting. They save weeks of arguments when the model has already been built into the product and it turns out that each side expected a different result.

If you have multiple models and strict data requirements, it is better to do this check before development. You need to know in advance which model is the default, where the logs go, how auditing works, where PII masking is enabled, and whether data can stay inside the country. For teams in Kazakhstan, these questions are often settled at the architecture stage. If you need a single OpenAI-compatible gateway with audit logs, AI content labels, key-level restrictions, and local hosting for some of the models, AI Router can cover that.

A good readiness signal is very simple: any team member can answer in two minutes what value the feature provides, where the AI usefulness threshold is, what counts as a stop scenario, and who presses rollback. If even one point gets the answer "we'll figure it out as we go," it is better to move the launch back by a couple of days. Otherwise, you will end up fixing not the model, but the agreement between Product and ML.